1,000 Micro-Bots Rotate Gear Without Contact: Surgical Swarms Near Human Trials

TL;DR

- Cornell and Max Planck Develop Microrobots Generating Fluidic Torque for Biomedical Manipulation at 300-Micrometer Scale

- Perseverance Rover Achieves 90% Autonomous Driving on Mars, Setting New Record with 331.74m Single-Day Travel

🤖 1,000-Swarm Microrobots Generate Surgical Torque Without Contact: Cornell-Max Planck Breakthrough

1,000 robots smaller than a grain of sand just rotated a gear without touching it. The fluidic torque they generate in water could one day manipulate delicate tissue inside your body, no scalpels required. Wild twist: these bots never physically contact what they move, yet their collective vortices act with surgical precision. If swarms this tiny can open grippers and spin racks now, what happens when 10,000 enter your bloodstream? Would you trust invisible robots to perform eye surgery? Northeast US and Germany are leading the charge.

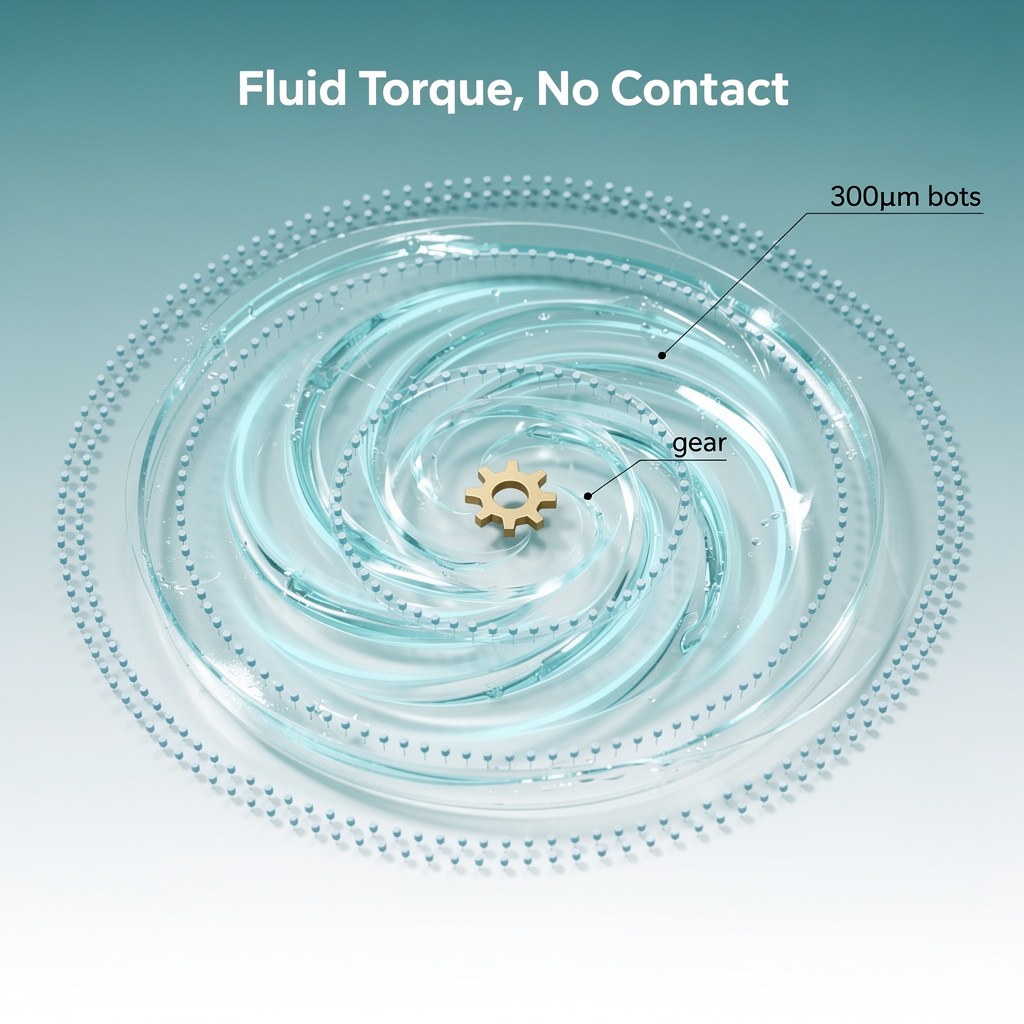

Researchers at Cornell University and Germany's Max Planck Institute have demonstrated a microrobotic system that manipulates objects through fluid dynamics alone. Their 300-micrometer polymer disks—roughly three times the width of a human hair—generate collective fluidic torque sufficient to rotate a 2-millimeter gear analogue in a 1.5-centimeter water pool. The finding, reported February 25, establishes non-contact mechanical actuation at a scale relevant to future biomedical procedures.

What enables this motion?

The robots are 3D-printed polymer disks arranged in concentric rotating rings. When these "wheels" spin, they create coordinated fluid vortices that exert shear forces on nearby objects. This mechanism differs fundamentally from magnetic or acoustic microrobotic systems, which typically require direct field coupling or physical contact. The Cornell-Max Planck team demonstrated four distinct tasks: gear rotation, gripper opening, rack-and-pinion actuation, and assembly of a buoy-like three-dimensional structure—all achieved without the bots ever touching their targets.

Where the technology stands

Performance: Torque scales linearly with robot count up to 1,000 units, reliably rotating millimeter-scale objects.

Control: Current implementation relies on optical tracking and manual bot placement; closed-loop feedback remains undeveloped.

Scalability: Experimental validation beyond 1,000 robots has not been reported, and physiological fluid environments present unmodeled viscosity and confinement challenges.
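
The linear scaling noted under Performance, and the 1,000-robot limit noted under Scalability, can be written as a small model. This is a minimal sketch, not the researchers' analysis: `torque_per_robot` is a hypothetical placeholder in arbitrary units (the article reports no per-robot value), and the function names are illustrative only.

```python
import math

# Largest swarm size for which the article reports linear scaling.
MAX_VALIDATED_ROBOTS = 1000


def collective_torque(n_robots: int, torque_per_robot: float) -> float:
    """Linear model: total torque = robot count * per-robot torque.

    torque_per_robot is an assumed value in arbitrary units.
    """
    if n_robots < 0:
        raise ValueError("robot count must be non-negative")
    if n_robots > MAX_VALIDATED_ROBOTS:
        # No experimental validation beyond this point is reported.
        raise ValueError("linear scaling is not reported beyond 1,000 robots")
    return n_robots * torque_per_robot


def robots_needed(target_torque: float, torque_per_robot: float) -> int:
    """Smallest swarm that reaches target_torque under the linear model."""
    if torque_per_robot <= 0:
        raise ValueError("per-robot torque must be positive")
    n_robots = math.ceil(target_torque / torque_per_robot)
    if n_robots > MAX_VALIDATED_ROBOTS:
        raise ValueError("target needs more robots than the validated range")
    return n_robots
```

Refusing to extrapolate past 1,000 robots is a deliberate choice here: the model only claims what the reported experiments cover.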

Parallel developments this month

- Caltech (Feb. 3): Biocompatible microbubbles achieved 60% tumor reduction in mice via ultrasound-triggered drug release, confirming fluid-mediated actuation can integrate with therapeutic delivery.

- Penn-Michigan (Feb. 1): Researchers demonstrated 200×300 µm autonomous swimmers that use electro-osmotic propulsion to move at ~1 body-length per second, showing a complementary propulsion strategy at a comparable scale.

These converging efforts indicate rapid diversification in sub-millimeter actuation approaches.

What comes next

- 2026–2027: Real-time feedback integration targeting <50 µm positional accuracy; expansion to 5,000–10,000 robots for millimeter-scale tool actuation; initial in-vitro tissue compatibility studies.

- 2028–2029: Biodegradable polymer formulations for safe in-vivo dissolution; computational fluid dynamics validation for non-Newtonian biofluids; confined-space demonstrations in peritoneal or retinal environments.

- 2030–2031: Pre-clinical microsurgical trials combining mechanical manipulation with drug-loaded microcarriers; potential regulatory pathway alignment with existing micro-needle and drug-eluting particle frameworks.

Why this matters beyond the lab

The fluidic-torque paradigm offers reversible mechanical interaction without surface contact, which is critical for manipulating delicate tissues where contact damage risks permanent impairment. If control precision and biocompatibility thresholds are met, the approach could reshape microsurgical tool design and lab-on-a-chip automation. The same contact-free positioning principles may eventually translate to semiconductor micro-assembly, where contamination avoidance drives yield economics.

🤖 Mars Rover Hits 90% Autonomy: AI Cuts Human Control, Radiation Risks Remain

90% of Perseverance's Mars drives now run without Earth commands. That's 331.74 meters in a single day, about triple Opportunity's 2007 record. 🤖 The Enav AI + Claude waypoint system cut planning time in half, while new self-localization keeps error under 10 inches. But here's the catch: one radiation bit-flip can still glitch the whole rover. Would you trust AI to drive your commute if it occasionally 'forgot' where it was for 2 minutes?

NASA's Perseverance rover has crossed a threshold that redefines planetary exploration: as of October 2024, the vehicle had completed 90 percent of its travels without waiting for commands from Earth. This milestone, together with a 331.74-meter single-day autonomous drive in April 2023, signals a fundamental shift from human-directed teleoperation to self-governing robotic systems operating across interplanetary distances.

How does this work?

The rover's autonomy rests on three integrated systems. The Enav AI navigation algorithm fuses stereo vision, LiDAR-derived depth, and inertial measurements at 10 Hz to detect and avoid hazards in real time. Mars Global Localization (MGL), running on a repurposed Snapdragon 801 processor, compares navigation-camera panoramas against orbital elevation maps to fix position within 25 centimeters in approximately two minutes—eliminating cumulative drift that previously limited drives to roughly 200 meters. More recently, Anthropic's Claude large language model has demonstrated waypoint generation from orbital imagery, processing over 500,000 telemetry variables per drive and compressing mission planning from four hours to two.
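
The idea behind matching a local view against an orbital map can be illustrated with a toy example. This is a minimal sketch using brute-force template matching on a tiny grid. It is not the MGL algorithm, and the map values, patch, and function names are all made up for illustration.

```python
def ssd(patch, grid, row, col):
    """Sum of squared differences between patch and the grid window at (row, col)."""
    return sum(
        (patch[i][j] - grid[row + i][col + j]) ** 2
        for i in range(len(patch))
        for j in range(len(patch[0]))
    )


def localize(patch, grid):
    """Search every window of the grid and return the best-matching position."""
    rows = len(grid) - len(patch) + 1
    cols = len(grid[0]) - len(patch[0]) + 1
    candidates = (
        (ssd(patch, grid, r, c), (r, c))
        for r in range(rows)
        for c in range(cols)
    )
    best_score, best_position = min(candidates)
    return best_position, best_score


# Hypothetical 5x5 "orbital" elevation map (arbitrary units).
ORBITAL_MAP = [
    [0, 1, 2, 3, 4],
    [1, 3, 5, 7, 9],
    [2, 5, 9, 4, 1],
    [3, 7, 4, 8, 2],
    [4, 9, 1, 2, 6],
]

# Hypothetical 2x2 local elevation patch seen by the rover: the window at
# row 2, column 1 of the map, with a little measurement noise added.
LOCAL_PATCH = [
    [5.1, 8.9],
    [7.0, 4.2],
]

estimated_position, match_score = localize(LOCAL_PATCH, ORBITAL_MAP)
print("estimated (row, col):", estimated_position)
```

Because each fix is computed against a fixed map rather than from the previous position, the error does not accumulate with distance driven, which is the property the paragraph above credits for removing cumulative drift.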

What changed on the ground?

The technical advances translate into measurable operational gains:

- Autonomy ratio: 90 percent of all traverses executed without waiting for commands from Earth, up from Curiosity's 6.2 percent baseline

- Drive distance: Single-Sol record of 331.74 meters, roughly triple Opportunity's 2007 autonomous record of 109 meters

- Localization precision: Position error reduced from roughly 30 meters to under 0.25 meters, enabling longer uninterrupted traverses

- Planning efficiency: AI-generated waypoints halved pre-drive preparation time
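
The ratios behind these figures can be checked directly from the numbers quoted in this article. The short script below is only an arithmetic sanity check, and the variable names are illustrative.

```python
# Figures as quoted in the article.
PERSEVERANCE_RECORD_M = 331.74
OPPORTUNITY_RECORD_M = 109.0
ERROR_BEFORE_M = 30.0
ERROR_AFTER_M = 0.25
PLANNING_BEFORE_H = 4.0
PLANNING_AFTER_H = 2.0

METERS_PER_INCH = 0.0254


def percent_reduction(before: float, after: float) -> float:
    """Relative reduction from before to after, in percent."""
    return 100.0 * (before - after) / before


# How many times farther than Opportunity's record (just over 3x).
distance_ratio = PERSEVERANCE_RECORD_M / OPPORTUNITY_RECORD_M

# Factor by which the position error shrank.
error_improvement = ERROR_BEFORE_M / ERROR_AFTER_M

# Planning-time cut, in percent.
planning_cut_pct = percent_reduction(PLANNING_BEFORE_H, PLANNING_AFTER_H)

# The 0.25 m error bound expressed in inches (just under 10).
error_after_in = ERROR_AFTER_M / METERS_PER_INCH

print(f"distance ratio: {distance_ratio:.2f}x")
print(f"error improvement: {error_improvement:.0f}x")
print(f"planning time cut: {planning_cut_pct:.0f}%")
print(f"error bound: {error_after_in:.2f} in")
```

The distance ratio comes out slightly above 3, and the 0.25-meter bound converts to just under 10 inches, matching the teaser at the top of this section.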

Generative-AI planning may also be driving autonomy adoption, though the evidence so far is correlational: after demonstration drives in December 2025 used Claude-derived waypoints, autonomous-mode usage exceeded 95 percent for entire months.

Where capabilities and constraints intersect

The system exhibits clear trade-offs:

Strengths: High-fidelity perception, AI-driven planning, and on-board self-localization eliminate Earth-control bottlenecks

Weaknesses: Radiation-induced bit flips in the Snapdragon 801 processor require redundancy checks; occasional 25-bit memory losses have occurred

Opportunities: The Enav/MGL stack transfers directly to the Mars Sample Return lander and future lunar rovers

Threats: Communication delays still mandate Earth-based contingency planning for terrain featuring obstacles beyond 30 meters

What comes next?

- 2026–2027: Perseverance projected to exceed 95 percent autonomous drives by mid-2026, with MGL updates every two Sols becoming routine

- 2028: NASA certification of Enav-Claude integration for the Mars 2028 rover concept, reducing pre-launch route-planning staff by approximately 40 percent

- 2030–2035: Autonomous navigation proportion forecast to surpass 99 percent for surface assets, with Enav-style perception-control loops adapted for lunar regolith-excavation robots and Titan-lake autonomous craft where Earth-to-vehicle latency exceeds 30 minutes

The convergence of AI perception, generative planning, and precise self-localization has established a scalable paradigm for extraterrestrial exploration—one where robots no longer await instructions from Earth, but navigate alien terrain with independent judgment.

In Other News

- On expands robotic shoe manufacturing in South Korea with 32 new robots, producing laceless Cloudmonster 3 Hyper sneakers via automated choreography

- Arkestro Expands in Automotive Sector with Nissan Americas Procurement Modernization

- Anthropic acquires Vercept to enhance Claude’s computer interaction, boosting OSWorld task success from <15% to 72.5%
