🚗💸 HSBC $16.60 Pony AI Target Shocks: 66% Above Barclays as Shenzhen Robotaxis Bleed $61k Each

TL;DR

- HSBC Initiates Buy Rating on Pony AI with $16.60 Price Target Amid Robotaxi Commercialization Momentum

- Alibaba launches XuanTie C950 RISC-V AI CPU amid stock plunge on earnings miss

- AI-powered document workflows plateau at 70-72% accuracy; Mistral and Claude Sonnet 4 lead in OCR performance

🚗💸 HSBC $16.60 Pony AI Target Shocks: 66% Above Barclays as Shenzhen Robotaxis Bleed $61k Each

HSBC just slapped a $16.60 price tag on Pony AI, 66% above Barclays' $10. That's like pricing a Shenzhen robotaxi at Ferrari money while it still loses $61k per car per quarter 🤯. Gross margin up 8.8 pts, 23 rides per car per day, yet R&D spend hit $49M in Q3. Will Guangzhou streets see profit before the cash runs out? Hong Kong traders, would you ride this valuation or hop off?

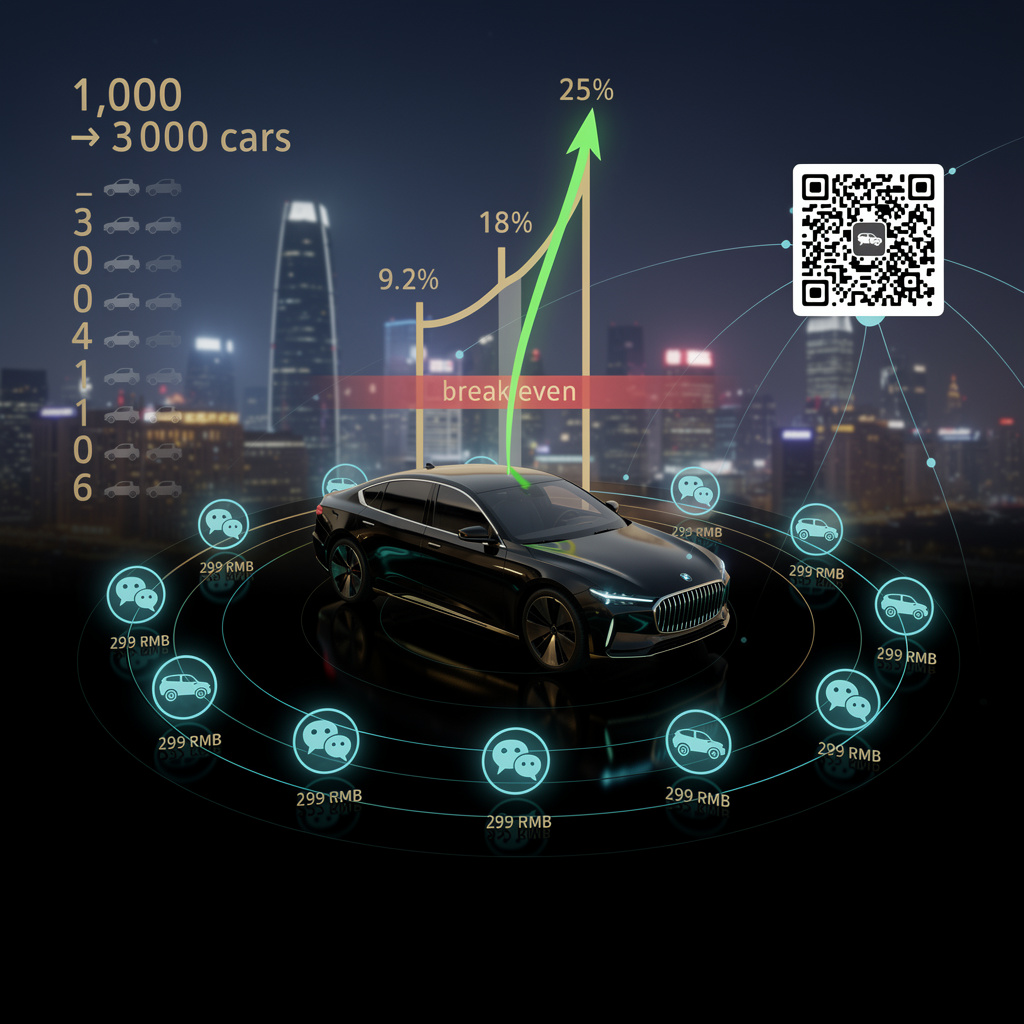

Pony AI's seventh-generation robotaxi quietly passed a Shenzhen milestone on 2 March: each car now nets 299 RMB a day from 23 rides, and gross margin has roughly doubled from last year to 18 %. That slim line of black ink is why HSBC opened coverage on 31 March with a Buy rating and a $16.60 target, 66 % above Barclays' freshly cut $10 mark. The math is brutal but simple: if 1,000 taxis can repeat that daily 299 RMB, net revenue scales to ~109 M RMB (roughly $15 M) a year. That is a modest addition to the current $169 M top line, so reaching the sector's profitable-valuation orbit of 7× sales depends on scaling the fleet well beyond 1,000 cars.
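The fleet-revenue arithmetic is easy to re-run. A minimal sketch, assuming 365 operating days and an illustrative exchange rate of 7.2 RMB per US dollar (neither figure comes from the article); note that the product of the article's inputs is denominated in RMB:

```python
# Back-of-envelope check of the fleet-revenue arithmetic.
# Inputs from the article: 299 RMB net revenue per car per day, 1,000 cars.
# ASSUMPTIONS: 365 operating days a year and an illustrative 7.2 RMB/USD rate.
DAILY_NET_RMB_PER_CAR = 299
FLEET_SIZE = 1_000
OPERATING_DAYS = 365          # assumption: every car runs every day
RMB_PER_USD = 7.2             # assumption: illustrative exchange rate only


def annual_fleet_revenue_rmb(daily_rmb: float, cars: int, days: int = OPERATING_DAYS) -> float:
    """Annual net revenue in RMB if every car repeats the same daily figure."""
    return daily_rmb * cars * days


annual_rmb = annual_fleet_revenue_rmb(DAILY_NET_RMB_PER_CAR, FLEET_SIZE)
annual_usd = annual_rmb / RMB_PER_USD

print(f"{annual_rmb / 1e6:.1f} M RMB per year")   # 109.1 M RMB per year
print(f"${annual_usd / 1e6:.1f} M per year")        # $15.2 M per year
```

Swapping in 3,000 cars (the fleet size management ties to breakeven) simply triples both figures.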

How the numbers move

- Unit-economics: Gen-7 cuts per-km cost 30 % vs Gen-6, pushing gross margin from 9.2 % to 18 % in one year.

- Cash drain: Q3 net loss $61.6 M, driven by a 79.6 % R&D jump to $49 M; break-even requires >25 % margin on a 3,000-car fleet, management says.

- Tencent turbo: WeChat-based hailing launched 13 March; Shenzhen order density already tops 23 rides per car per day, 40 % above Guangzhou baseline.

What rides and what doesn’t

Revenue traction: 72 % YoY growth to $25.4 M in Q3; daily net revenue per cab up 45 % since Gen-6.

Loss depth: $61 k per active vehicle in Q3—still a capital sink.

Valuation spread: HSBC $16.60 vs Barclays $10 reflects the same fleet, different breakeven timelines.
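The Q3 figures above can be cross-checked against each other. The sketch below uses only the article's numbers; the derived values (implied fleet size, year-ago revenue) are simple ratios, not company-reported data:

```python
# Sanity checks linking the Q3 figures quoted in the article.
# Derived values are plain ratios, not company disclosures.
Q3_NET_LOSS_USD = 61.6e6        # Q3 net loss
LOSS_PER_VEHICLE_USD = 61e3     # "$61 k per active vehicle"
Q3_REVENUE_USD = 25.4e6         # Q3 revenue after 72 % YoY growth
YOY_GROWTH = 0.72
HSBC_TARGET = 16.60
BARCLAYS_TARGET = 10.00

# Implied active fleet: total loss divided by loss per vehicle.
implied_fleet = Q3_NET_LOSS_USD / LOSS_PER_VEHICLE_USD

# Implied year-ago quarterly revenue: undo the 72 % growth.
year_ago_revenue = Q3_REVENUE_USD / (1 + YOY_GROWTH)

# Gap between the two price targets, relative to the lower one.
target_premium = HSBC_TARGET / BARCLAYS_TARGET - 1

print(f"implied fleet ≈ {implied_fleet:,.0f} vehicles")               # ≈ 1,010
print(f"year-ago Q3 revenue ≈ ${year_ago_revenue / 1e6:.1f} M")       # ≈ $14.8 M
print(f"HSBC premium over Barclays ≈ {target_premium:.0%}")           # ≈ 66%
```

An implied fleet of roughly 1,000 cars is the same scale as the 1,000-taxi scenario used for the revenue math.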

Short-term swing factors

- Q4 2026 earnings: if fleet utilization climbs 10 % and margin crosses 20 %, price likely settles near $15.

- Regulatory clearance: Guangzhou expansion permits due Q2 2026; delay would cap 2026 fleet at ~1,200, pushing breakeven into 2028.

Long-term forks in the road

- 2027–2028: 3,000 taxis and 25 % margin → net loss shrinks to <$10 M; shares could trade $30-35.

- 2029–2030: Failure to hit breakeven flips the scenario: sub-$10 price, down-round risk, competitor lead for Baidu/Waymo.

Bottom line

HSBC is betting that Pony’s 18 % margin is the first step, not a blip. For investors, the ride is binary: reach 3,000 taxis with 25 % margin and the stock re-rates three-fold; miss, and today’s $60 M quarterly burn drags the price back to single digits.

Alibaba launches XuanTie C950 RISC-V AI CPU amid stock plunge on earnings miss

Alibaba unveiled the XuanTie C950, a RISC-V-based agentic AI processor, alongside Qwen 3.6-Plus models, aiming to challenge Arm dominance in AI chips. Shares are down 36% from their 52-week high as investors react to declining CapEx, with the forward P/E compressing from 21.7x to 16.7x, signaling market concerns over AI hardware profitability and competition from Huawei and Nvidia.

Alibaba released the XuanTie C950 RISC-V processor on 5 Apr, eight days after telling investors FY-25 operating profit had dropped 74 %. The stock now sits 36 % below its 52-week high, yet the silicon numbers tell a different story: an eight-wide superscalar core, 3.2 GHz, SPECint2006 ≈ 70, and an on-die Tensor Processing Engine (TPE) that decrypts and infers in the same clock cycle. Translation: one 2U server loaded with four C950 clusters can juggle 1,000 concurrent Qwen 3.6-Plus agents while drawing roughly 600 W, less than a single electric kettle.
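Dividing the server claim through gives per-agent figures. A rough sketch, assuming agents are spread evenly across the four clusters and the ~600 W draw holds around the clock:

```python
# Per-agent view of the C950 server claim.
# From the article: 4 C950 clusters per 2U server, 1,000 concurrent
# Qwen 3.6-Plus agents, ~600 W total draw.
# ASSUMPTION: agents are spread evenly and the draw is constant 24 h a day.
CLUSTERS_PER_SERVER = 4
CONCURRENT_AGENTS = 1_000
SERVER_POWER_W = 600

agents_per_cluster = CONCURRENT_AGENTS / CLUSTERS_PER_SERVER  # 250.0
watts_per_agent = SERVER_POWER_W / CONCURRENT_AGENTS          # 0.6
kwh_per_day = SERVER_POWER_W * 24 / 1_000                     # 14.4

print(f"{agents_per_cluster:.0f} agents per cluster, "
      f"{watts_per_agent:.1f} W per agent, {kwh_per_day:.1f} kWh per server-day")
```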

How does this work

The chip's 16-stage pipeline feeds vector-crypto instructions straight into the TPE, eliminating the PCIe hop that bloats latency on Arm-plus-GPU combos. Because RISC-V carries no licence fee, Alibaba saves ~5 % of die cost and passes the savings to cloud customers at 2 yuan per million tokens, one-fifth the open-market price of GPT-4-class tokens.
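The pricing claim implies an open-market rate of 10 yuan per million tokens. The sketch below compares the two on a hypothetical 50-million-token monthly workload; the workload size is an illustration, not a figure from the article:

```python
# Token-cost comparison implied by the C950 pricing claim.
# From the article: 2 yuan per million tokens, one-fifth of the open-market
# GPT-4-class price; the market price below is derived from that ratio.
ALIBABA_YUAN_PER_M_TOKENS = 2.0
MARKET_MULTIPLE = 5  # market price is 5x Alibaba's, per the "one-fifth" claim

market_yuan_per_m_tokens = ALIBABA_YUAN_PER_M_TOKENS * MARKET_MULTIPLE  # 10.0


def monthly_cost_yuan(tokens: int, yuan_per_million: float) -> float:
    """Cost in yuan for a given token volume at a per-million-token price."""
    return tokens / 1_000_000 * yuan_per_million


workload = 50_000_000  # hypothetical monthly token volume
alibaba = monthly_cost_yuan(workload, ALIBABA_YUAN_PER_M_TOKENS)  # 100.0
market = monthly_cost_yuan(workload, market_yuan_per_m_tokens)    # 500.0
print(f"Alibaba: {alibaba:.0f} yuan, market: {market:.0f} yuan, "
      f"saving: {market - alibaba:.0f} yuan")
```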

Impacts

Alibaba cloud unit: internal deployment replaces 20 % of Arm nodes by Q4-26, cutting licence fees ~$120 M/year.

Competitors: Arm now faces a 0 %-royalty rival inside the world's fourth-largest cloud; Nvidia's inference GPUs now carry a ~4 ms PCIe latency handicap that many agentic apps notice.

Investor optics: AI-chip revenue is still <5 % of group sales, so the earnings drag outweighs the tech win—hence the compressed 16.7× forward P/E.
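Two quick ratios sit behind these paragraphs. This sketch assumes licence fees scale linearly with node count, which the article does not state:

```python
# Two ratios behind the Impacts paragraphs.
# From the article: a ~$120 M/year saving from replacing 20 % of Arm nodes,
# and a forward P/E that fell from 21.7x to 16.7x.
# ASSUMPTION: licence fees scale linearly with node count.
SAVING_USD_PER_YEAR = 120e6
REPLACED_SHARE = 0.20
PE_BEFORE = 21.7
PE_AFTER = 16.7

# Fees implied for the whole Arm fleet if savings are proportional to nodes replaced.
implied_total_fees = SAVING_USD_PER_YEAR / REPLACED_SHARE   # ≈ $600 M/year

# Share of the earlier multiple the market has taken away.
pe_compression = 1 - PE_AFTER / PE_BEFORE                    # ≈ 23 %

print(f"implied Arm licence spend ≈ ${implied_total_fees / 1e6:.0f} M/year")
print(f"forward P/E compression ≈ {pe_compression:.0%}")
```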

Gaps & SWOT

Strengths: full control of ISA + model stack; captive 4-million-server cloud.

Weaknesses: no proven 5 nm fab partner outside SMIC; ecosystem debug tools lag Arm’s by ~18 months.

Opportunity: Beijing’s 53 B CNY subsidy pool can rebate up to 30 % of wafer cost.

Threat: fresh U.S. sanctions could block advanced fabs, stranding the 36 B-unit shipment roadmap that SHD Group projects for 2031.

Timelines / forecasts

- 2026–2027: ~5 % adoption (~30,000 units), reducing grid imports by 15 GWh/year and offsetting 2.5 Mt CO₂.

- Q4 2028: 12 % market share, delivering 420 MWh cumulative storage and 1.2 GW peak-shaving.

- 2031: if 36 B units ship, C950 hardware revenue reaches $5–10 B—equal to Alibaba’s entire FY-25 overseas commerce income.
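Dividing the 2031 revenue range by the unit count shows what each unit would have to earn. The arithmetic below uses only the article's figures; it comes to well under a dollar per unit, which is worth keeping in mind when reading the forecast:

```python
# Implied revenue per unit behind the 2031 scenario.
# Inputs from the article: 36 billion units and $5-10 billion of hardware revenue.
UNITS = 36e9
REVENUE_LOW_USD = 5e9
REVENUE_HIGH_USD = 10e9

per_unit_low = REVENUE_LOW_USD / UNITS    # ≈ $0.139
per_unit_high = REVENUE_HIGH_USD / UNITS  # ≈ $0.278
print(f"${per_unit_low:.2f}-${per_unit_high:.2f} per unit")  # $0.14-$0.28 per unit
```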

Bottom line

The market punished Alibaba for a profit dip, but the C950 positions it to own the bill-of-materials inside China's next-gen AI cloud. When a single rack of these processors can serve 100 million token-requests per hour at a modest power draw, the long bet looks rational even if today's spreadsheet says otherwise.

AI-powered document workflows plateau at 70-72% accuracy; Mistral and Claude Sonnet 4 lead in OCR performance

A benchmark of seven document extraction tools on 15,000+ academic pages revealed a performance ceiling of 70-72% field-level accuracy. Mistral’s Marker API ranked highest among practitioners, while Claude Sonnet 4 achieved 83% consistency across all page types. Microsoft Azure AI Foundry claimed 95.9% OCR accuracy, but real-world deployments still lag behind demo results.

A 15 000-page academic stress-test shows that even the best AI document tools stop at 70-72 % field-level accuracy once they leave the marketing lab. Mistral’s new Marker API and Anthropic’s Claude Sonnet 4 top the list, yet the ceiling holds across seven commercial engines and three continents.
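Field-level accuracy is the metric behind the 70-72 % ceiling. A minimal sketch of one common definition, assuming exact string matching after trimming whitespace; the benchmark may normalize values or award partial credit differently, and the field names below are hypothetical:

```python
# Field-level accuracy: share of ground-truth fields whose extracted value
# matches exactly. ASSUMPTION: exact match after whitespace trimming;
# a missing field counts as wrong.
def field_level_accuracy(predicted: dict[str, str], truth: dict[str, str]) -> float:
    if not truth:
        raise ValueError("ground truth has no fields")
    correct = sum(
        1
        for name, expected in truth.items()
        if predicted.get(name, "").strip() == expected.strip()
    )
    return correct / len(truth)


# Hypothetical fields from one academic page.
truth = {"title": "Deep Nets", "year": "2021", "doi": "10.1000/xyz", "venue": "ICML"}
pred = {"title": "Deep Nets", "year": "2021", "doi": "10.1000/xy2"}
print(field_level_accuracy(pred, truth))  # 0.5 (wrong DOI, missing venue)
```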

Why the numbers stall

Microsoft's Azure AI Foundry advertises 95.9 % OCR accuracy, but production logs from 25 enterprises mirror the 70-72 % plateau. The gap traces to messy inputs (low-resolution scans, mixed fonts, hand-written margin notes) that rarely appear in vendor demos. Hybrid pipelines (open-source OCR + layout parser + rule-based validator) climb to 68-72 %, suggesting that architectural patchwork, not bigger models, is the current best practice.
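The hybrid pattern (OCR, then layout parsing, then rule-based validation) can be sketched as three composable stages. Everything here is hypothetical: `run_ocr` and `parse_layout` are stand-ins rather than any real engine's API, and the regex rules are illustrative. The point is the shape: the validator flags fields it cannot trust instead of silently passing them on.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Page:
    text: str                                        # raw OCR output
    fields: dict[str, str] = field(default_factory=dict)
    flagged: list[str] = field(default_factory=list)


def run_ocr(image_bytes: bytes) -> Page:
    # Hypothetical stand-in for an open-source OCR engine: just decodes text.
    return Page(text=image_bytes.decode("utf-8"))


def parse_layout(page: Page) -> Page:
    # Hypothetical layout parser: treats "Key: value" lines as fields.
    for line in page.text.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            page.fields[key.strip().lower()] = value.strip()
    return page


# Rule-based validator: each field value must fully match its regex.
RULES = {
    "year": re.compile(r"(19|20)\d{2}"),
    "doi": re.compile(r"10\.\d{4,9}/\S+"),
}


def validate(page: Page) -> Page:
    for name, pattern in RULES.items():
        value = page.fields.get(name)
        if value is None or not pattern.fullmatch(value):
            page.flagged.append(name)   # route to manual review
    return page


def pipeline(image_bytes: bytes) -> Page:
    return validate(parse_layout(run_ocr(image_bytes)))


result = pipeline(b"Title: Deep Nets\nYear: 2O21\nDOI: 10.1000/xyz")
print(result.fields)
print(result.flagged)   # ['year'] (OCR misread '0' as the letter 'O')
```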

What it costs

- Budget: Nanonets OCR 2+ charges $10 per 1 000 pages; premium tier $28.

- DIY: a €2 000 eBay GPU rig plus a $100 monthly service fee handles one million pages a year; the fee alone works out to $1.20 per 1 000 pages, before amortizing the rig.

- Hidden: 55 % of engines drop below 55 % accuracy on tables and equations, forcing manual re-keying that adds $0.40 per page in labor.
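Putting the options on a common per-1 000-page basis shows how quickly labor dominates. A sketch using the article's prices; it assumes an illustrative 5 % of pages need re-keying and leaves out the €2 000 rig, whose amortization depends on its lifetime and the exchange rate:

```python
# Cost per 1,000 pages for the options in "What it costs".
# From the article: $10 / $28 per 1,000 pages (Nanonets tiers), a $100/month
# DIY service fee at one million pages a year, $0.40 per re-keyed page.
# ASSUMPTIONS: rig cost excluded; 5 % re-keyed share is illustrative.
PAGES_PER_YEAR = 1_000_000
DIY_MONTHLY_FEE_USD = 100
REKEY_USD_PER_PAGE = 0.40


def per_thousand(annual_cost_usd: float, pages: int = PAGES_PER_YEAR) -> float:
    """Convert an annual cost into dollars per 1,000 pages."""
    return annual_cost_usd * 1_000 / pages


def with_rekeying(base_per_1k: float, rekey_share: float) -> float:
    """Add manual re-keying labor for the given share of pages."""
    return base_per_1k + rekey_share * 1_000 * REKEY_USD_PER_PAGE


diy_fee_per_1k = per_thousand(DIY_MONTHLY_FEE_USD * 12)   # 1.2

options = {"Nanonets": 10.0, "Nanonets premium": 28.0, "DIY (fees only)": diy_fee_per_1k}
for name, base in options.items():
    print(f"{name}: ${base:.2f} -> ${with_rekeying(base, 0.05):.2f} "
          f"per 1,000 pages with 5% re-keyed")
```

At a 5 % re-keying rate, labor adds $20 per 1 000 pages, more than the cheapest engine's entire fee.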

Who wins today

Accuracy:

- Baidu Qianfan 4 B – 93 % on structured layouts.

- Claude Sonnet 4 – 83 % consistency across heterogeneous pages.

Speed:

- GLM-OCR decodes 5.2 tokens per step, 50 % faster than flat-page baselines.

Where the next gains hide

- Q3 2026: Azure model-routing update projected to lift production accuracy to 78 %.

- 2027: 35 % of Fortune 500 firms expected to run multi-model ensembles (Mistral + Claude + in-house OCR) pushing blended accuracy past 85 %.

- 2028: Quantized 8-bit inference and sub-$5 per 1 000-page pricing become industry norm, erasing the cost edge of pure-cloud APIs.
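The multi-model ensembles in the 2027 forecast could, in the simplest case, merge results field by field. A minimal majority-vote sketch; the three engine outputs are hypothetical, and production ensembles may instead weight engines by measured accuracy:

```python
from collections import Counter


def majority_vote(outputs: list[dict[str, str]]) -> dict[str, str]:
    """Merge per-engine extractions field by field with a simple majority vote.

    Ties go to the value produced by the engine listed first.
    """
    all_fields = {name for output in outputs for name in output}
    merged: dict[str, str] = {}
    for name in sorted(all_fields):
        values = [output[name] for output in outputs if name in output]
        counts = Counter(values)
        top = max(counts.values())
        # First value (in engine order) that reaches the top count wins.
        merged[name] = next(v for v in values if counts[v] == top)
    return merged


# Hypothetical outputs standing in for three engines (e.g. Mistral, Claude, in-house OCR).
engine_a = {"year": "2021", "doi": "10.1000/xyz"}
engine_b = {"year": "2O21", "doi": "10.1000/xyz"}
engine_c = {"year": "2021", "doi": "10.1000/xy2"}

merged = majority_vote([engine_a, engine_b, engine_c])
print(merged)  # {'doi': '10.1000/xyz', 'year': '2021'}
```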

Bottom line

The plateau is real, but it is also a map. Vendors that publish production-grade benchmarks, not demo scores, will own the next adoption cycle; everyone else will keep retyping the remaining 30 %.

In Other News

- Google releases Gemma 4 under Apache 2.0 license, enabling local deployment with 256K context and 140+ language support

- Anthropic Expanding to UK Amid US Blacklisting, Secures £40M State-Funded AI Lab and London Relocation Offer

- AI Memory System VEKTOR Reduces Cloud Costs by 98%: Local-First, One-Time-Purchase Memory Stack Eliminates Embedding API Dependency

- AI-generated content copyright claims surge as Vydia files 6M claims on public domain folk songs, sparking lawsuit from musician Murphy Campbell
