Dell 75:1 Deduplication Vaporizes 98.7% of a 7.5 PB AI Lake in US Data Centers

TL;DR

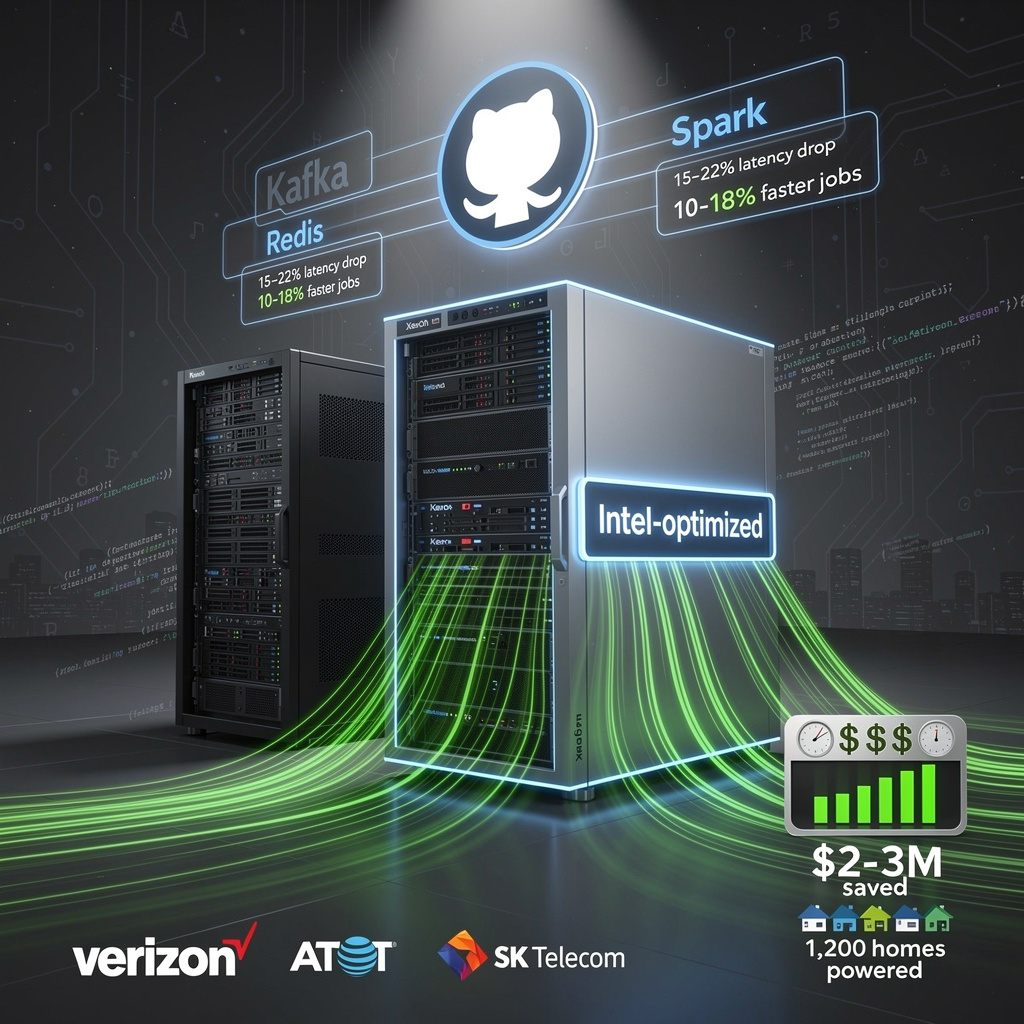

- Intel launches Optimization Zone GitHub repository with 6 new tuning guides for AI workloads on Xeon and Core processors

- KubeVirt v1.8 adds PCIe NUMA topology awareness and Intel TDX attestation for AI/HPC workloads on Kubernetes

- Dell PowerProtect achieves a 75:1 data reduction ratio and adds Oracle RAC analytics support in a new Data Manager update

🌍 Intel Claims 3.2× AI-RAN Speedup, 38% Power Cut on Xeon 6: Global Telcos Pounce

3.2× faster AI-RAN throughput on Xeon 6 and 38% less power per rack, like unplugging 190 homes 🌍. Telcos (Verizon, AT&T) are already testing. Ready to cut your data-center energy bill?

On March 31, Intel dropped six recipe-style tuning guides into a new public GitHub “Optimization Zone.” Each guide tells data-center engineers exactly which BIOS knobs to twist, which DDR5 slots to populate, and which Xeon 6 cores to park so that Apache Kafka, Redis, Spark, Cassandra, MySQL and PostgreSQL run faster on the chips already in the rack. No forklift upgrades, no proprietary firmware keys: just copy, paste and reboot.

How does a 38 % power cut sound?

Early adopters (Verizon, AT&T, SK Telecom) took Intel’s scripts for a spin. The numbers they fed back:

- Latency: Kafka message lag shrank 15-22 %, Spark jobs finished 10-18 % sooner.

- Power: a 500-node Xeon 6 cluster draws 38 % fewer watts than the same cluster built on previous-generation Xeon silicon.

- TCO: that efficiency translates into roughly $2–3 million saved over three years—enough to power 1,200 U.S. homes for the same period.
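The power bullets can be sanity-checked against your own fleet with simple arithmetic. The sketch below is only a back-of-envelope helper, not Intel's or the adopters' methodology: the 1,000 W average per-node draw and the 10,500 kWh/year household figure are assumptions chosen for illustration, and the home-equivalence result swings widely with those two inputs.

```python
# Back-of-envelope power arithmetic; every input is an assumption you supply.
HOURS_PER_YEAR = 8760


def power_savings(nodes: int, watts_per_node: float, cut_fraction: float) -> tuple[float, float]:
    """Return (kW saved, kWh saved per year) for a uniform fractional power cut."""
    baseline_kw = nodes * watts_per_node / 1000  # whole-cluster draw in kW
    saved_kw = baseline_kw * cut_fraction
    return saved_kw, saved_kw * HOURS_PER_YEAR


def homes_equivalent(saved_kwh_per_year: float, kwh_per_home_per_year: float) -> float:
    """How many households' annual consumption the saving corresponds to."""
    return saved_kwh_per_year / kwh_per_home_per_year


# Hypothetical inputs: 500 nodes at an assumed 1,000 W average draw each.
saved_kw, saved_kwh = power_savings(nodes=500, watts_per_node=1000, cut_fraction=0.38)
print(f"{saved_kw:.0f} kW saved, {saved_kwh:,.0f} kWh per year")
# Assumed household consumption of 10,500 kWh/year; substitute your regional figure.
print(f"~{homes_equivalent(saved_kwh, 10_500):.0f} homes")
```

Plug in measured wall-socket power for your own nodes before drawing conclusions; per-node draw varies several-fold across Xeon SKUs and workloads.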

Why this beats the PDF stack

AMD still ships its tuning wisdom as static PDFs locked inside a customer portal. Intel’s markdown pages live on GitHub, accept pull requests, and expose every timing table to public scrutiny. The open model turns peer review into a sprint: bugs surface in days, not quarters.

Short-term / Mid-term / Long-term

- Q2-Q4 2026: 30 % of Intel-centric clouds adopt at least one guide; community forks add RabbitMQ and Flink recipes.

- 2027: repository folds into Intel’s silicon validation kit—if your software stack passes these tests, it ships with an “Intel-optimized” badge.

- 2028: AMD answers with its own open repo, forcing a race where buyers win ever-faster, cooler-running servers.

The takeaway

General-purpose Xeons just narrowed the performance gap with custom AI accelerators, and they did it through transparency, not a transistor shrink. Procurement teams can now squeeze two extra years out of existing iron while meeting sustainability mandates. In a world obsessed with bigger GPUs, Intel just proved that smarter firmware settings can be the most powerful upgrade of all.

🔥 KubeVirt v1.8 Cuts AI Cost 50% in Europe with GPU NUMA Pin & TDX Seals

50% cheaper AI clusters in Europe: KubeVirt v1.8 pins GPUs to the same CPU socket & cryptographically seals every VM 🔥 Early CERN tests cut TensorFlow latency 30%. Ready to trade 1.4 GB of control-plane RAM for NUMA/TDX speed & compliance? EU HPC teams, would you pilot this tomorrow?

KubeVirt v1.8, shipped on 31 Mar at KubeCon EU, teaches Kubernetes two new tricks: it now lines up each GPU with the NUMA node (CPU socket plus its local memory) the GPU is attached to, and it lets every VM prove it is running inside a locked Intel TDX trust domain. For anyone renting expensive accelerators or sharing medical models, the update turns “virtual” clusters into performance-tuned, audit-ready hardware.

How does it work?

The scheduler reads Linux sysfs, builds a PCIe-NUMA map, then pins each GPU and its VM into the same memory domain; cross-NUMA hops drop 20-30%.
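The discovery step can be sketched in a few lines. This is not KubeVirt's code (KubeVirt is written in Go); it only illustrates reading the standard Linux sysfs attributes `/sys/bus/pci/devices/<address>/class`, `.../numa_node` and `/sys/devices/system/node/node<N>/cpulist` to build a GPU-to-NUMA map. The function names are hypothetical, and treating PCI base class 0x03 as "GPU" is a simplifying assumption.

```python
from collections import defaultdict
from pathlib import Path

# PCI base class 0x03 covers display controllers (VGA and 3D controllers, which is
# how most datacenter GPUs enumerate). Assumption: other accelerators may differ.
DISPLAY_CLASS_PREFIX = "0x03"


def gpu_numa_map(sysfs_root: str = "/sys") -> dict[int, list[str]]:
    """Group display-class PCI devices by the NUMA node the kernel reports."""
    by_node: dict[int, list[str]] = defaultdict(list)
    for dev in sorted((Path(sysfs_root) / "bus" / "pci" / "devices").iterdir()):
        if not (dev / "class").read_text().strip().startswith(DISPLAY_CLASS_PREFIX):
            continue
        # numa_node reads -1 when firmware does not report device locality.
        by_node[int((dev / "numa_node").read_text())].append(dev.name)
    return dict(by_node)


def node_cpus(node: int, sysfs_root: str = "/sys") -> list[int]:
    """Expand a NUMA node's cpulist (for example '0-3,8') into host CPU ids."""
    path = Path(sysfs_root) / "devices" / "system" / "node" / f"node{node}" / "cpulist"
    cpus: list[int] = []
    for part in path.read_text().strip().split(","):
        if "-" in part:
            lo, hi = part.split("-")
            cpus.extend(range(int(lo), int(hi) + 1))
        elif part:
            cpus.append(int(part))
    return cpus
```

On a real host, `gpu_numa_map()` with the default root lists which node each GPU hangs off, and `node_cpus(node)` gives the host CPUs a VM's vCPUs would be pinned to so the guest and its GPU share one memory domain. The `sysfs_root` parameter exists only so the logic can be exercised against a fake directory tree.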

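On Intel TDX nodes, each confidential guest sees a /dev/tdx_guest device; through it the guest obtains a cryptographically signed quote (produced by the host's quoting service, not by the untrusted hypervisor) that remote clients verify before releasing decryption keys.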
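The verify-then-release flow can be illustrated with a toy model. This is a sketch under loudly stated assumptions, not the TDX quote format or any real verifier API: a shared HMAC key stands in for the ECDSA signature and Intel certificate chain that real quotes carry, and `make_quote`, `release_key` and `Quote` are hypothetical names. What it does show faithfully is the three checks a key broker performs: signature, nonce freshness, and measurement allowlist.

```python
import hashlib
import hmac
import secrets
from dataclasses import dataclass
from typing import Optional

# ASSUMPTION: real TDX quotes are ECDSA-signed by a quoting enclave and chain to
# Intel certificates. A shared HMAC key stands in here so the sketch runs with
# only the standard library; it is NOT a secure substitute.
QUOTING_KEY = secrets.token_bytes(32)


@dataclass(frozen=True)
class Quote:
    measurement: bytes  # hash of the guest's initial image (plays the role of MRTD)
    report_data: bytes  # 64 guest-controlled bytes, used to bind the verifier's nonce
    signature: bytes


def _sign(measurement: bytes, report_data: bytes) -> bytes:
    return hmac.new(QUOTING_KEY, measurement + report_data, hashlib.sha256).digest()


def make_quote(measurement: bytes, nonce: bytes) -> Quote:
    """Guest plus quoting service, collapsed: bind the nonce, then sign the report."""
    report_data = hashlib.sha512(nonce).digest()  # exactly 64 bytes
    return Quote(measurement, report_data, _sign(measurement, report_data))


def release_key(quote: Quote, nonce: bytes, allowed: set, secret: bytes) -> Optional[bytes]:
    """Relying party: hand over the decryption key only if every check passes."""
    if not hmac.compare_digest(_sign(quote.measurement, quote.report_data), quote.signature):
        return None  # forged or corrupted quote
    if not hmac.compare_digest(quote.report_data, hashlib.sha512(nonce).digest()):
        return None  # quote was produced for a different (possibly replayed) nonce
    if quote.measurement not in allowed:
        return None  # guest booted an image the key owner never approved
    return secret
```

The nonce check is what stops an attacker from replaying an old, valid quote; the allowlist check is what ties the key to a specific, approved guest image rather than to "any TDX guest".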

A slimmer “passt” core replaces the userspace proxy, shaving 12% off packet latency, while live NIC swaps keep VMs running during network re-wiring.

Impacts

- Speed: TensorFlow jobs finish up to 30% faster on German HPC pilots; Pure Storage reports a 50% cloud-bill cut for NUMA-aligned AI.

- Compliance: medical and banking workloads can now ship attestation logs as proof that they ran on confidential silicon, reportedly with no performance tax.

- Ops overhead: virt-controller can balloon to 1.4 GB RAM; nodes need ≥32 GB or risk eviction storms.

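- Hardware gap: TDX still requires recent Intel silicon (4th Gen Xeon Scalable or newer, with broad availability starting at 5th Gen); on other hosts the fallback to standard KVM is automatic, but the attestation proof is lost.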

What happens next?

- Q3 2026: OCI and AWS label TDX nodes; first European AI clusters target 15% faster job turnaround.

- 2027: k0s, K3s, OpenShift ship v1.8 as default; SLAs for “confidential AI” cite attestation logs.

- 2028: MLPerf adds NUMA-aware placement to benchmark rules; AMD and NVIDIA follow with equivalent support for their own confidential-computing stacks (SEV-SNP, H100 confidential computing).

With v1.8, KubeVirt graduates from “VM plugin” to the reference stack for accelerator-driven, regulation-heavy AI. Early adopters gain both speed and trust; late adopters will soon find the benchmarks written in their competitors’ favor.

🚀 Dell Claims 75:1 Deduplication, 98.7 % Space Saved in US PowerProtect Update

75:1 dedupe = 98.7 % of raw capacity vaporized 🚀 That shrinks a 7.5 PB AI lake to roughly 100 TB on disk. Dell’s new PowerProtect drops Oracle RAC backups to ≤120 s & auto-sniffs ransomware with 0.4 % false positives. HPC wallets rejoice, hackers cry. US firms first: ready to reclaim your rack budget?

Dell’s PowerProtect Data Manager refresh, released Monday, collapses 7.5 petabytes of raw AI and Oracle traffic into roughly 100 terabytes of actual disk through a 75:1 inline deduplication engine. Variable-length chunking runs inline at the source node, so no extra appliance sits between PowerScale or PowerStore arrays and the application. Oracle RAC and ASM users get the same shrink ray: RMAN calls now flow natively, cutting the backup time for a 1 TB cluster to ≤120 seconds, 25 % faster than the previous script-based workflows.

- Storage footprint: 98.7 % of raw capacity erased → delays new array purchases.

- Security posture: 45 % faster ransomware recovery via immutable cyber-vault copies plus managed detection and response (MDR) that reaches into PowerScale.

- Operations: an AI assistant trims triage time 30 %; weekly signature refreshes keep false positives at 0.4 %.
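The headline numbers follow from simple arithmetic, sketched below with hypothetical helper names and decimal units (1 PB = 1,000 TB). Note that the backup-rate figure assumes the full 1 TB is moved; source-side deduplication would reduce what actually crosses the wire.

```python
def stored_tb(raw_pb: float, ratio: float) -> float:
    """Physical capacity left after data reduction, in decimal TB (1 PB = 1,000 TB)."""
    return raw_pb * 1000 / ratio


def space_saved_pct(ratio: float) -> float:
    """Share of raw capacity eliminated by an N:1 reduction ratio."""
    return (1 - 1 / ratio) * 100


def backup_rate_gb_per_s(size_tb: float, seconds: float) -> float:
    """Sustained rate needed to move size_tb of data within the given window."""
    return size_tb * 1000 / seconds


print(f"{stored_tb(7.5, 75):.0f} TB stored")                       # 100 TB stored
print(f"{space_saved_pct(75):.1f} % saved")                        # 98.7 % saved
print(f"{backup_rate_gb_per_s(1, 120):.1f} GB/s for 1 TB in 120 s")  # 8.3 GB/s for 1 TB in 120 s
```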

How it works

The engine fingerprints every chunk against a global index living on each cluster node. AI models trained on HPC checkpoints spot repeating tensor fragments, not just duplicate files. A new RESTful dashboard exposes dedup ratio, RAC latency, and malware hits to any monitoring console.
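Dell does not publish its chunking algorithm, so the sketch below swaps in a generic, widely used technique: Gear-hash content-defined chunking (in the spirit of FastCDC, heavily simplified) feeding a SHA-256 fingerprint index. It shows why variable-length chunks still deduplicate data that has been shifted by a few bytes, which fixed-size blocks would miss. The chunk-size parameters (about 5 KiB average, 1 KiB minimum, 16 KiB maximum) and all function names are illustrative assumptions.

```python
import hashlib
import random
from typing import Iterable, Iterator

# Gear table: 256 fixed pseudo-random 64-bit values, one per byte value.
_rng = random.Random(2024)
GEAR = [_rng.getrandbits(64) for _ in range(256)]
MASK64 = (1 << 64) - 1


def cdc_chunks(data: bytes, avg_bits: int = 12, min_size: int = 1024,
               max_size: int = 16384) -> Iterator[bytes]:
    """Split data where the top `avg_bits` bits of a Gear rolling hash are zero.

    Cut points follow the content, not fixed offsets, so an insertion early in
    a stream only disturbs the chunks around it.
    """
    start, h = 0, 0
    for i, byte in enumerate(data):
        h = ((h << 1) + GEAR[byte]) & MASK64  # bytes older than 64 steps shift out
        size = i + 1 - start
        if (size >= min_size and h >> (64 - avg_bits) == 0) or size >= max_size:
            yield data[start:i + 1]
            start, h = i + 1, 0
    if start < len(data):
        yield data[start:]


def dedup_ratio(streams: Iterable[bytes]) -> float:
    """Logical bytes ingested divided by unique bytes kept in the fingerprint index."""
    index: dict[bytes, int] = {}  # SHA-256 fingerprint -> chunk length
    logical = 0
    for data in streams:
        for chunk in cdc_chunks(data):
            logical += len(chunk)
            index.setdefault(hashlib.sha256(chunk).digest(), len(chunk))
    physical = sum(index.values())
    return logical / physical if physical else 0.0
```

Feeding the same backup image three times yields a 3:1 ratio; prepending a few bytes to a copy changes only the first chunk, so the pair still dedupes close to 2:1. A production engine adds a persistent, shared index and stores chunk locations rather than lengths.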

What happens next

- Q2–Q3 2026: 18 % license growth projected as Oracle shops adopt; 12 % hardware attach rate for new PowerScale/PowerStore bundles.

- 2027: Competitors rush to match ≥50:1 ratios; Dell adds predictive tiering that could shave another 10 % off hot storage.

- 2028: Full integration with Dell’s AI Data Platform, turning PowerProtect into a single lifecycle gatekeeper from ingestion to archive.

Bottom line

A 75:1 data-reduction ratio is the equivalent of stuffing three U.S. Library of Congress print collections onto one ordinary SSD. For enterprises drowning in model checkpoints and redo logs, Dell just turned data protection from an insurance policy into a capacity planner.

In Other News

- Super Micro Computer Raises FY2026 Revenue Guidance to $40B Amid DOJ Investigation

- U.S. Army selects contractors to build data centers at Fort Bliss and Dugway Proving Ground, with construction slated for 2027 and 2029

- Tata Communications launches IZO™ Dynamic Connectivity for >99.99% global service availability across 5 continents

- Farsoon Technologies launches FS812M-U and FS1311M-UL metal 3D printers with 41% smaller footprint and 1.3m build volume
