4.17 M msg/s on ONE core: US HFTs cut risk to 258 ns with zero heap allocations

TL;DR

- NexusFIX outperforms QuickFIX with 3.5x higher throughput and zero heap allocations on its high-frequency trading hot path

- Cloudflare rolls out Dynamic Workers, a V8 isolate-based runtime reducing AI agent startup latency from 500ms to under 5ms

- U.S. Sen. Sanders and Rep. Ocasio-Cortez Introduce Federal Moratorium on AI Data Centers Exceeding 20 MW

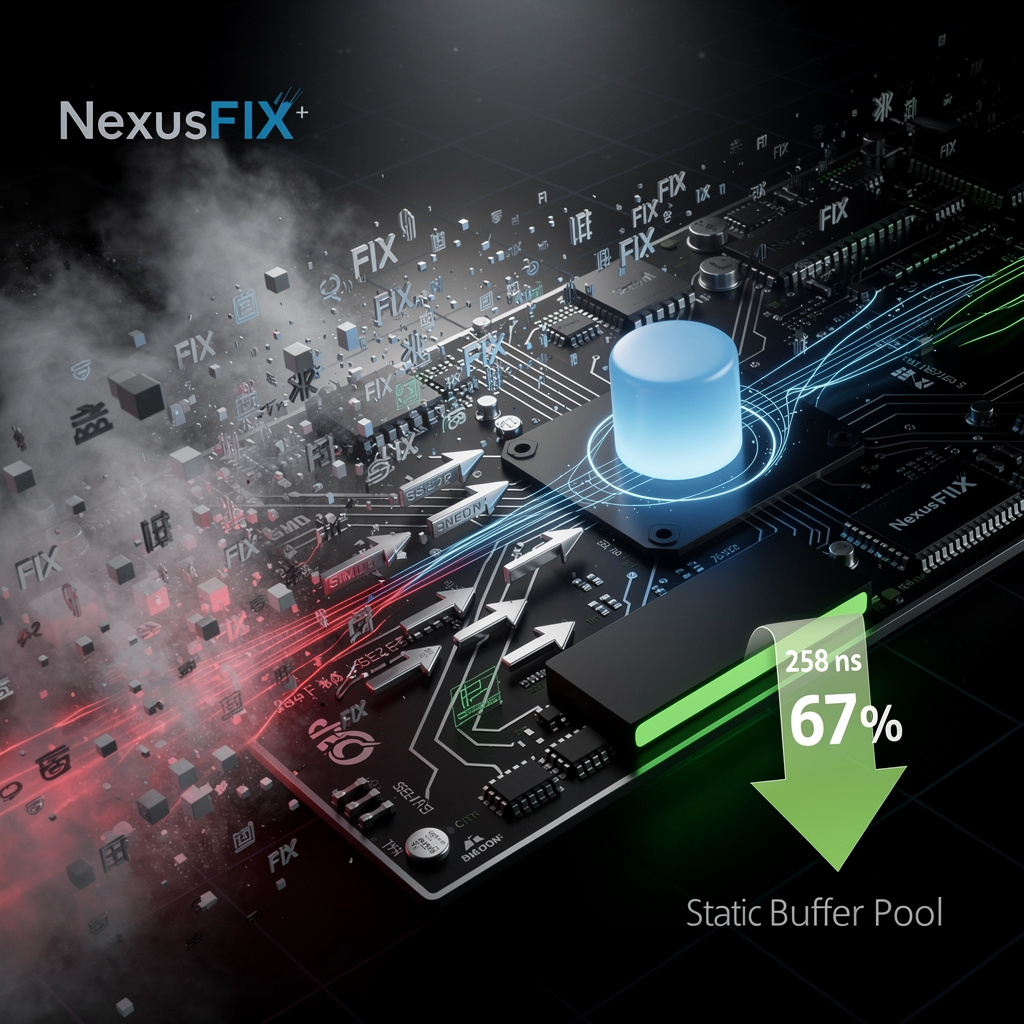

🚀 4.17 M/sec Zero-Alloc FIX Engine Cuts Tail Latency 67 % on Linux, Apple Silicon

4.17 M msg/s on ONE core, 67 % lower tail latency than QuickFIX, zero heap trash 🚀 12 fewer allocs/msg = no allocator jitter. US HFTs now lock risk to 258 ns. Ready to ditch your old engine?

A new C++ engine called NexusFIX is rewriting the speed limit for Wall Street’s favorite messaging protocol. On a single core it clocks 4.17 million FIX messages per second, 3.5× the throughput of the venerable QuickFIX stack, while trimming worst-case latency from 784 ns to 258 ns and eliminating every heap allocation on the hot path.

How it works

Instead of creating std::string and std::map objects for every tag, NexusFIX uses an offset table whose layout is fixed at compile time and whose entries point directly into the raw packet. SIMD instructions (SSE2 on x86, NEON on Apple Silicon) scan for delimiters in 16-byte chunks, cutting a parse that once took 661 ns down to a 229-ns pointer chase. A static buffer pool that never calls malloc holds order data, so no allocator call ever lands on the hot path.
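To make the parsing idea concrete, here is a minimal C++17 sketch of a zero-allocation field index, assuming GCC or Clang. It is not NexusFIX’s code: `find_soh`, `FixView`, `FieldRef`, and `kMaxTag` are hypothetical names, the table simply reserves one slot per tag number below 64, and only the SSE2 path is shown (other targets, including NEON on Apple Silicon, fall back to `std::memchr`). Field values come back as `std::string_view`s into the caller’s buffer, so nothing is copied or allocated.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string_view>
#if defined(__SSE2__)
#include <emmintrin.h>  // SSE2 intrinsics (x86/x86-64 only)
#endif

constexpr char kSOH = '\x01';        // FIX field delimiter
constexpr std::size_t kMaxTag = 64;  // hypothetical: only tags 0..63 get a slot

// Return the index of the next SOH at or after `pos`, or `len` if there is none.
inline std::size_t find_soh(const char* buf, std::size_t len, std::size_t pos) {
    assert(pos <= len);
#if defined(__SSE2__)
    const __m128i soh = _mm_set1_epi8(kSOH);
    // Compare 16 bytes at a time; movemask sets bit i when byte i equals SOH.
    while (pos + 16 <= len) {
        const __m128i chunk =
            _mm_loadu_si128(reinterpret_cast<const __m128i*>(buf + pos));
        const int mask = _mm_movemask_epi8(_mm_cmpeq_epi8(chunk, soh));
        if (mask != 0) {
            return pos + static_cast<std::size_t>(__builtin_ctz(static_cast<unsigned>(mask)));
        }
        pos += 16;
    }
#endif
    // Scalar tail (and the whole scan on non-SSE2 targets).
    if (pos == len) return len;
    const void* hit = std::memchr(buf + pos, kSOH, len - pos);
    return hit ? static_cast<std::size_t>(static_cast<const char*>(hit) - buf) : len;
}

struct FieldRef {
    std::uint32_t offset = 0;  // start of the value inside the packet
    std::uint32_t length = 0;  // value length in bytes
    bool present = false;
};

// Zero-allocation view over one FIX message: a fixed array of offsets, no copies.
class FixView {
public:
    // Index every "tag=value<SOH>" field; returns false on a malformed field.
    bool parse(std::string_view msg) {
        msg_ = msg;
        fields_ = {};  // reset the fixed-size table in place
        std::size_t pos = 0;
        while (pos < msg.size()) {
            const std::size_t end = find_soh(msg.data(), msg.size(), pos);
            std::size_t tag = 0;
            std::size_t i = pos;
            while (i < end && msg[i] >= '0' && msg[i] <= '9') {
                tag = tag * 10 + static_cast<std::size_t>(msg[i] - '0');
                ++i;
            }
            if (i == pos || i >= end || msg[i] != '=') return false;
            if (tag < kMaxTag) {
                fields_[tag] = {static_cast<std::uint32_t>(i + 1),
                                static_cast<std::uint32_t>(end - i - 1), true};
            }
            pos = end + 1;  // step past the SOH
        }
        return true;
    }

    // Value of `tag` as a view into the original buffer, or empty if absent.
    std::string_view get(std::size_t tag) const {
        if (tag >= kMaxTag || !fields_[tag].present) return {};
        return msg_.substr(fields_[tag].offset, fields_[tag].length);
    }

private:
    std::string_view msg_;
    std::array<FieldRef, kMaxTag> fields_{};
};
```

Because `FixView` stores only offsets, the packet buffer must outlive any views returned by `get`. A production engine would also validate BodyLength (tag 9) and CheckSum (tag 10), which this sketch skips.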

Impacts

- Latency: tail drops 67 %, shrinking risk-window from 0.78 µs to 0.26 µs.

- Throughput: one core now handles about 3.5× more messages, freeing servers or cutting rack space.

- Memory churn: zero allocations vs. QuickFIX’s 12 per message removes a major source of micro-jitter.

- Cost: fewer servers needed; at 1 M msg/s sustained, three mid-size HFT shops project $1.2 M annual hardware savings.
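The headline ratios can be checked directly against the figures quoted above; the only derived quantities are QuickFIX’s implied throughput and the per-message time budget on one core.

```latex
\begin{align*}
\text{tail reduction} &= \frac{784\ \text{ns} - 258\ \text{ns}}{784\ \text{ns}} = \frac{526}{784} \approx 0.671 \approx 67\,\% \\
\text{QuickFIX throughput} &\approx \frac{4.17\ \text{M msg/s}}{3.5} \approx 1.19\ \text{M msg/s} \\
\text{time budget per message} &= \frac{1\ \text{s}}{4.17 \times 10^{6}} \approx 240\ \text{ns} \\
\text{parse speed-up} &= \frac{661\ \text{ns}}{229\ \text{ns}} \approx 2.9\times
\end{align*}
```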

Gaps and caveats

QuickFIX’s XML data dictionaries and its Java port, QuickFIX/J, still dominate back-office workflows, so NexusFIX ships a shim layer that reads those dictionary files. The engine is single-threaded; firms must partition symbol spaces or link cores with lock-free queues to scale past one core. And because it pre-allocates 2 MiB per instance, memory use can climb in setups that run many markets.
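One common way to scale a single-threaded engine past one core is to give each core its own symbol partition and connect the cores with single-producer/single-consumer queues. The C++17 sketch below is a generic illustration under that assumption, not NexusFIX’s design: `SpscQueue` keeps its storage in a fixed `std::array` allocated once up front, and `shard_for` is a hypothetical helper that hashes a symbol to a core index.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <cstddef>
#include <functional>
#include <optional>
#include <string_view>

// Bounded single-producer/single-consumer ring buffer. All storage lives in a
// fixed std::array, so pushing and popping never call malloc.
template <typename T, std::size_t Capacity>
class SpscQueue {
    static_assert(Capacity > 0 && (Capacity & (Capacity - 1)) == 0,
                  "Capacity must be a power of two");

public:
    // Producer thread only. Returns false when the queue is full.
    bool try_push(const T& item) {
        const std::size_t head = head_.load(std::memory_order_relaxed);
        const std::size_t tail = tail_.load(std::memory_order_acquire);
        if (head - tail == Capacity) return false;
        slots_[head & (Capacity - 1)] = item;
        head_.store(head + 1, std::memory_order_release);  // publish the slot
        return true;
    }

    // Consumer thread only. Returns std::nullopt when the queue is empty.
    std::optional<T> try_pop() {
        const std::size_t tail = tail_.load(std::memory_order_relaxed);
        const std::size_t head = head_.load(std::memory_order_acquire);
        if (head == tail) return std::nullopt;
        T item = slots_[tail & (Capacity - 1)];
        tail_.store(tail + 1, std::memory_order_release);  // hand the slot back
        return item;
    }

private:
    std::array<T, Capacity> slots_{};
    // Separate cache lines so producer and consumer counters do not false-share.
    alignas(64) std::atomic<std::size_t> head_{0};  // written only by the producer
    alignas(64) std::atomic<std::size_t> tail_{0};  // written only by the consumer
};

// Hypothetical partitioning helper: the same symbol always maps to the same
// core within one process (std::hash values are not stable across builds).
inline std::size_t shard_for(std::string_view symbol, std::size_t cores) {
    assert(cores > 0);
    return std::hash<std::string_view>{}(symbol) % cores;
}
```

Each queue must have exactly one pushing thread and one popping thread; the acquire/release pairs on `head_` and `tail_` are what make a slot’s contents visible to the other side without a lock.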

Outlook

- 2026 Q4: expect 5 % adoption (~30 engines) trimming U.S. equity latency by 2 µs end-to-end.

- 2027: CI benchmark suite standardizes claims; FPGA front-ends push round-trip below 1 µs.

- 2028: FIX 5.0 SP3 draft borrows static-metadata concept, nudging the whole industry away from runtime maps.

Close

When nanoseconds translate into dollars, removing 526 ns of tail latency and 12 memory allocations per message is not a niche optimization; it is a new competitive floor. NexusFIX shows that hardware-aware, zero-allocation design can turn a three-decade-old protocol into a sub-microsecond race car, and trading desks that ignore the toolkit risk being left a full packet behind.

⚡️ Cloudflare Dynamic Workers Launch: 5-ms Boot, 100× Faster Than Containers

⚡️ 500 ms → 5 ms: Cloudflare’s new V8 isolate boots 100× faster than a container, sips 100× less RAM, and is already running AI-written code at millions of req/s. Ready to trade your Docker for a 5-millisecond blink? 🚀 US beta live—enterprises, your latency bill just vanished.

On Tuesday, Cloudflare began letting any developer spin up a “Dynamic Worker” faster than an eye can blink. The company’s new V8-isolate runtime boots in under 5 ms, a small fraction of a typical 100 to 400 ms blink, versus the half-second a container normally needs. Memory per job drops from hundreds of megabytes to a few, letting the same server handle 100× more AI-generated scripts.

How it works

Instead of booting a full Linux container, Dynamic Workers spin up a fresh V8 isolate, the same JavaScript sandbox that Chrome’s V8 engine uses to keep scripts apart. A loader called LLO pre-warms isolates at every edge location; Code Mode nudges agents to emit roughly 30 lines of TypeScript rather than call external APIs. Outbound traffic is intercepted, scanned, and, if suspicious, dropped before it leaves Cloudflare’s network.
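Cloudflare has not published the internals of this egress check, so the C++17 sketch below only illustrates the general pattern described here: an outbound request is dropped if its host is not on an allowlist or if it carries credential-bearing headers. `OutboundRequest`, `EgressPolicy`, `Verdict`, `to_lower`, and the specific header names are all hypothetical, not a Cloudflare API.

```cpp
#include <algorithm>
#include <cassert>
#include <cctype>
#include <string>
#include <string_view>
#include <utility>
#include <vector>

// Hypothetical view of one outbound request leaving a sandbox.
struct OutboundRequest {
    std::string host;
    std::vector<std::pair<std::string, std::string>> headers;
};

enum class Verdict { Allow, DropUnknownHost, DropCredentialLeak };

inline std::string to_lower(std::string_view s) {
    std::string out(s);
    std::transform(out.begin(), out.end(), out.begin(),
                   [](unsigned char c) { return static_cast<char>(std::tolower(c)); });
    return out;
}

// Hypothetical egress policy: host allowlist plus a block on credential headers.
class EgressPolicy {
public:
    explicit EgressPolicy(std::vector<std::string> allowed_hosts)
        : allowed_(std::move(allowed_hosts)) {
        for (auto& h : allowed_) h = to_lower(h);  // host names compare case-insensitively
    }

    Verdict check(const OutboundRequest& req) const {
        const std::string host = to_lower(req.host);
        if (std::find(allowed_.begin(), allowed_.end(), host) == allowed_.end()) {
            return Verdict::DropUnknownHost;
        }
        for (const auto& header : req.headers) {
            const std::string name = to_lower(header.first);
            // Assumed list of headers that usually carry secrets.
            if (name == "authorization" || name == "cookie" || name == "x-api-key") {
                return Verdict::DropCredentialLeak;
            }
        }
        return Verdict::Allow;
    }

private:
    std::vector<std::string> allowed_;
};
```

A real deployment would also inspect bodies and query strings; the point of the sketch is only that the verdict is reached before any bytes leave the sandbox.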

Why it matters

- Latency: 500 ms → <5 ms, turning chatbots into real-time coders.

- Cost: Token usage falls 81 % when agents skip MCP servers and run inline.

- Scale: Millions of requests/second on the same racks that once topped out at thousands.

- Security: Static scan + credential block shrinks the AI supply-chain blast radius.

Early returns

Enterprise pilots report 77 % lower compute bills for Workers AI workloads. Yet security teams note a fresh attack lane: prompt-injected code that writes itself, then executes inside the isolate. Cloudflare counters with per-isolate quotas and a 24-hour patch cycle inherited from Chrome.

What’s next

- 0–6 mo: Beta hardens; outbound policies tighten.

- 6–18 mo: SLA-backed general release; Workers AI catalog ships pre-approved “Code Mode” snippets.

- 18 mo+: Rivals either match isolate speed or concede the low-latency tier; regulators publish first audit rules for self-writing code.

The cloud’s unit of work is no longer a container, but a five-millisecond thought. If that sticks, tomorrow’s AI agents won’t just answer us—they’ll compile and ship features before we finish the question.

⚡ AI Data Centers May Drive 50% of U.S. Power CO₂: 20 MW Moratorium Freezes $98B

50% of U.S. power-sector CO₂ could come from AI data centers—equal to adding 2 million homes to the grid overnight 😱. $98B in builds already frozen. Will your electric bill fund the AI boom or your community’s clean future?

Sen. Bernie Sanders and Rep. Alexandria Ocasio-Cortez dropped a legislative bomb on March 25: no new AI data center larger than 20 megawatts can break ground until Washington writes the rules of the road. The move targets a sector that already gulps electricity equal to two million homes and, if left unchecked, could single-handedly add 50 percent to U.S. power-sector CO₂ output.

How 20 MW became the line in the sand

A 20-MW hall running at full load draws 20,000 kilowatt-hours every hour, roughly the average demand of 16,000 to 17,000 U.S. households. Multiply that by the 200-plus supersize projects on the drawing board and utilities face a 267 % cost surge that would ricochet straight into monthly residential bills.
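The per-hour figure follows from the definition of a watt-hour. The household comparison assumes a hall running at full nameplate load and an average U.S. home using about 10,500 kWh a year (a round assumption, not a figure from the bill); a different household average shifts the result proportionally.

```latex
\begin{align*}
E_{\text{hour}} &= 20\ \text{MW} \times 1\ \text{h} = 20{,}000\ \text{kWh} \\
E_{\text{year}} &= 20\ \text{MW} \times 8{,}760\ \text{h} = 175{,}200\ \text{MWh} \approx 175\ \text{GWh} \\
\bar{P}_{\text{home}} &\approx \frac{10{,}500\ \text{kWh}}{8{,}760\ \text{h}} \approx 1.2\ \text{kW} \\
N_{\text{homes}} &\approx \frac{20{,}000\ \text{kW}}{1.2\ \text{kW}} \approx 16{,}700
\end{align*}
```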

Impacts at a glance

- Grid stability: Peak-load spikes already forced $98 billion of transmission upgrades to stall or cancel in Q2 2025.

- Household budgets: Electricity rates climbed 31 % from 2020 to 2025; unchecked AI demand could repeat that jump in half the time.

- Climate: Each evaporative-cooled gigacenter drinks up to 10 million gallons of water daily while leaning on fossil-heavy grids.

- Competitiveness: China is adding AI compute at twice the U.S. pace; a pause risks ceding strategic ground unless cleaner, faster designs emerge.

Short-term pause, long-term pivot

- 2026–2027: Expect 10–15 % of planned >20-MW sites to sit idle while DOE and EPA draft guidelines; states like California and Texas will tighten local moratoria.

- 2028–2030: Federal “green AI” certification kicks in, requiring ≥50 % on-site renewables and closed-loop cooling; early adopters gain expedited permits.

- 2031–2035: AI compute capacity resumes 5 % annual growth, but each new watt delivers up to 40 % more workload thanks to efficiency gains and micro-grid integration.

Bottom line

The moratorium is less an off-switch than a forced pivot. If builders plug their servers into solar fields and recycle their water, the 20-MW ceiling becomes a trapdoor to the next floor of innovation; if they stall, the U.S. risks swapping energy chaos for technological stagnation.

In Other News

- MSI Introduces MPG Ai TS Series PSU with GPU Safeguard+ for Real-Time Current Monitoring

- CSIRO Successfully Tests AI Robot for Autonomous Inspection of a 500 MW Australian Solar Farm, Raising Fault Detection Rate by 60%

- G.Skill DDR5 Memory Kits Achieve Intel XMP 3.0 Certification for Core Ultra 200S Plus and Z890 Chipset

- Adobe and NVIDIA Announce Strategic Partnership to Power Next-Gen Firefly Models with CUDA-X, NeMo, and Agent Toolkit at GTC 2026
