33% AI Ceiling in UK Hospitals & Courts: 67% Error Risk Sparks Regulatory Alarm

TL;DR

- Anthropic’s Claude Sonnet 4.2 and Google’s Gemini 3.4 lead the AA-Omniscience Index with a score of 33/100, ahead of GPT-5.3 Codex at 14

- UK FCA awards Palantir a £30k-a-week trial contract to analyze financial regulation data using Foundry AI

- Lithosphere launches Lithic Toolchain for decentralized AI applications, with a devnet and playground

😱 AI Fact Race: Google & Anthropic Hit 33/100 Ceiling, OpenAI Trails at 14 in San Francisco Benchmark

33/100 is now the ceiling, and that leaves even the best models 67 points short of a perfect score in hospitals & courtrooms 😱. Gemini 3.4 & Claude Sonnet 4.2 hit it; GPT-5.3 stalls at 14. Hallucinations drop 38%, but every 4–6 weeks a new model resets the scoreboard. Doctors & lawyers can’t bill for “oops.” Where should regulators draw the red line?

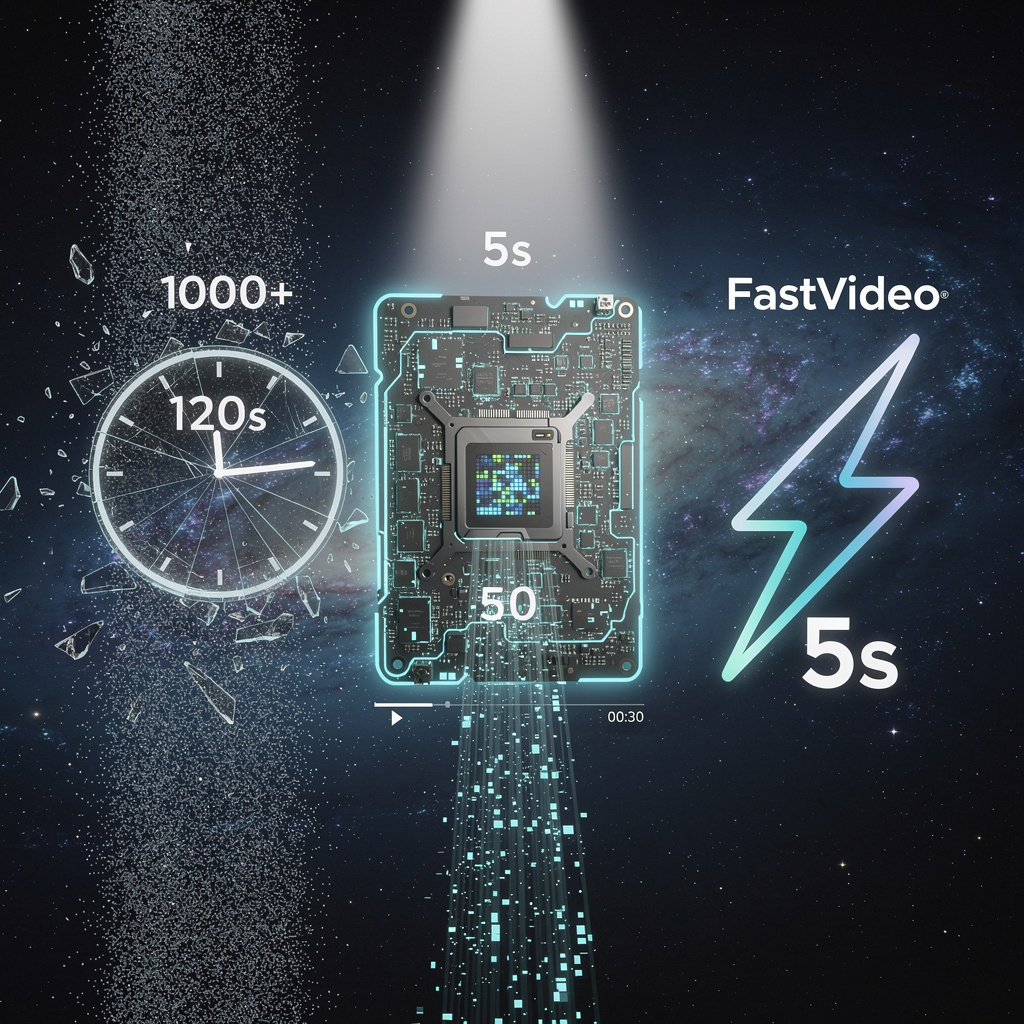

San Francisco’s latest AI report card lands with a thud: even the best models score only 33 out of 100 across 6,000 expert probes. Anthropic’s Claude Sonnet 4.2 and Google’s Gemini 3.4 share the top slot at 33/100 on the AA-Omniscience Index, while OpenAI’s GPT-5.3 Codex trails at 14. The yardstick, built by Artificial Analysis, keeps 42 professional domains under a microscope and shows hallucinations remain the industry’s Achilles heel.

How the test works

Every release cycle (now every 4–6 weeks), 6,000 adversarial questions stress-test law, finance, medicine, physics and code. Scores range from –100 to 100, so 33 is a point on that scale rather than a plain percentage of correct answers. The practical ceiling sits at 33; anything higher demands near-zero fabrication. Models compress a million tokens of context and run 205 internal “tenet” checks, yet still slip up most in regulated fields.
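To see how a –100 to 100 scale can reward caution over guessing, here is a minimal sketch. It assumes the index gives +1 for each correct answer, –1 for each fabricated one and 0 for each abstention, then rescales to the quoted range. That scoring rule, the `Tally` type and the tallies themselves are illustrative assumptions, not figures published by Artificial Analysis.

```python
from dataclasses import dataclass


@dataclass
class Tally:
    """Hypothetical per-model counts over one benchmark run."""
    correct: int
    incorrect: int
    abstained: int


def omniscience_style_index(t: Tally) -> float:
    """Assumed rule: +1 correct, -1 fabricated, 0 abstained, scaled to -100..100."""
    total = t.correct + t.incorrect + t.abstained
    if total == 0:
        raise ValueError("no questions scored")
    return 100.0 * (t.correct - t.incorrect) / total


# Illustrative tallies over 6,000 questions (made-up numbers, not benchmark data).
cautious = Tally(correct=2400, incorrect=420, abstained=3180)
guesser = Tally(correct=3000, incorrect=3000, abstained=0)

print(omniscience_style_index(cautious))  # 33.0
print(omniscience_style_index(guesser))   # 0.0
```

Under this assumed rule, the model that answers more questions correctly but fabricates freely ends up with the lower score, which is why a higher index demands near-zero fabrication.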

Impacts in one glance

- Healthcare: 10–15 points below overall average → mis-diagnoses still probable.

- Legal: persistent hallucination → contract clauses push liability back to vendors.

- Finance: sub-30 scores → trading algorithms fail regulator vetting.

- Cost: Gemini 3.1 Pro shows the benchmark can be run for $892, half the rival price.

- Safety: violation rates dip to 1.9 % (Sonnet 4.6) but climb past 12 % for older GPTs.

What happens next

- Q2 2026: Gemini 3.5 and Claude Sonnet 4.3 debut 1.5 M-token windows; factual bar expected at 35 ± 2.

- Q4 2026: Regulators in U.S. & EU may lock high-risk uses behind a 30-point floor.

- Q1 2027: Token cost projected to fall 10–15 %, letting mid-size hospitals deploy bedside assistants.

The takeaway

A score of 33 out of 100 now passes for valedictorian. Until the ceiling lifts toward 45, every lawyer, doctor or trader still needs a human second opinion.

📊 £30k-a-Week Palantir AI Trial Puts 42,000 UK Firms Under Algorithmic Spotlight

£30k a WEEK: the FCA just handed Palantir the keys to 10 PB of data on 42,000 UK firms—enough files to binge-read for 19 straight years 📊. A 3-month trial could spot the next Wirecard, but every click sits on a U.S. cloud. Will faster fraud busting outweigh selling out UK finance privacy?

On Monday the Financial Conduct Authority flipped the switch on a three-month, £390,000 trial that lets Palantir’s Foundry AI roam across a 10-petabyte “data lake” covering all 42,000 UK-regulated firms. The goal: spot rule-breakers faster than teams of humans can. The risk: concentrating Britain’s most sensitive financial records inside a single U.S.-built black box.

How does Foundry work?

Foundry ingests filings, e-mails, transaction logs and compliance reports, then builds a living graph of every firm, director and product. Supervised models score the likelihood of misselling or money-laundering; unsupervised models surface hidden networks. An audit trail records who looked at what, and an ontology layer keeps pension-fund data from bleeding into hedge-fund data.
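The idea of surfacing hidden networks from a firm-and-director graph can be illustrated with a generic connected-components pass. This is only a sketch of the general technique, not Palantir's implementation: the function name, the `(firm, director)` input shape and the sample records are all hypothetical.

```python
from collections import defaultdict


def linked_groups(appointments):
    """Group firms that are connected, directly or indirectly, by shared directors.

    `appointments` is a list of (firm, director) pairs (hypothetical input shape).
    """
    firms_by_director = defaultdict(set)
    neighbours = defaultdict(set)
    for firm, director in appointments:
        firms_by_director[director].add(firm)
        neighbours[firm]  # register the firm even if it shares no director

    # Two firms are neighbours when at least one director sits on both.
    for firms in firms_by_director.values():
        for firm in firms:
            neighbours[firm] |= firms - {firm}

    seen, groups = set(), []
    for start in sorted(neighbours):
        if start in seen:
            continue
        stack, group = [start], set()
        while stack:  # depth-first walk over one connected component
            firm = stack.pop()
            if firm in seen:
                continue
            seen.add(firm)
            group.add(firm)
            stack.extend(neighbours[firm] - seen)
        groups.append(sorted(group))
    return groups


# Made-up sample records for illustration only.
sample = [
    ("Alpha Ltd", "D1"), ("Beta Ltd", "D1"),
    ("Beta Ltd", "D2"), ("Gamma Ltd", "D2"),
    ("Delta Ltd", "D3"),
]
print(linked_groups(sample))
# [['Alpha Ltd', 'Beta Ltd', 'Gamma Ltd'], ['Delta Ltd']]
```

Alpha and Gamma share no director, yet they land in the same group through Beta, which is the kind of indirect link a reviewer reading filings one firm at a time could miss.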

Impact ledger – who gains, who loses

- Detection speed: Early-alert rate projected to rise ≥10%, shrinking the average 14-month gap between offence and sanction.

- FCA workload: Internal models indicate 15% fewer investigator hours per case, freeing 120 staff for higher-value probes.

- Privacy: A breach involving re-identified records could expose >1 million client files → heightened phishing and litigation risk.

- Market fairness: Small brokers fear opaque risk scores will raise compliance costs 5-10% if they are flagged erroneously.

Institutional pulse

Parliament’s Treasury Select Committee has already requested weekly data-access logs. The ICO hints at a statutory audit before summer. City law firms are marketing “Palantir defence” packages, while Snowflake and Microsoft lobby the Bank of England with rival bids. Palantir counters by offering an on-shore UK cloud option to pre-empt data-sovereignty rules.

Timeline – three milestones to watch

- Jun 2026: FCA publishes pilot dashboard covering top 200 high-risk firms; anticipates 12-month extension at £120k per month.

- Q1 2027: PRA and Bank of England issue twin RFPs; Palantir positioned to add £2-3m revenue if clauses mirror FCA pilot.

- 2028-29: Parliament debates localisation amendment; if passed, UK-only hosting raises Palantir’s cost base ~15%, squeezing margins but locking out offshore rivals.
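The contract figures quoted for the trial and the anticipated extension can be cross-checked with a few lines of arithmetic. The only assumption is that the three-month trial is counted as 13 weeks; the constant names are illustrative.

```python
WEEKLY_RATE_GBP = 30_000        # trial rate quoted in the article
TRIAL_WEEKS = 13                # assumption: three months counted as 13 weeks
EXTENSION_MONTHLY_GBP = 120_000 # anticipated extension rate
EXTENSION_MONTHS = 12

trial_total = WEEKLY_RATE_GBP * TRIAL_WEEKS
trial_monthly_equivalent = WEEKLY_RATE_GBP * 52 / 12
extension_total = EXTENSION_MONTHLY_GBP * EXTENSION_MONTHS

print(trial_total)               # 390000
print(trial_monthly_equivalent)  # 130000.0
print(extension_total)           # 1440000
```

On these numbers the £390,000 trial total matches the weekly rate, and the anticipated extension rate of £120k per month sits slightly below the trial's monthly equivalent of about £130k.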

Bottom line

If the FCA can enforce iron-clad governance, Foundry could compress enforcement lag from years to weeks, making UK markets cleaner. Without that governance, a single breach may turn the City’s supervisory crown jewel into a cautionary tale of privatised surveillance.

🚀 London’s Lithic Toolchain Launches zk-AI Sandbox, Triples dApp Prototypes in 7 Days

3× more dApp prototypes in ONE week! 🚀 Lithic’s zk-proof AI now caps gas at 30k units—cheaper than a London coffee ☕️—while keeping model secrets locked. DeFi & IoT devs get deterministic, auditable inference without runaway costs. Ready to build AI that can’t cheat?

London-based Lithosphere released the Lithic Toolchain on 23 Mar 2026, handing developers a one-stop kit for decentralized apps whose agents think, pay, and prove their work on-chain. A sandbox devnet and drag-and-drop playground shipped the same day, cutting the usual three-week test cycle to minutes.

How does it work?

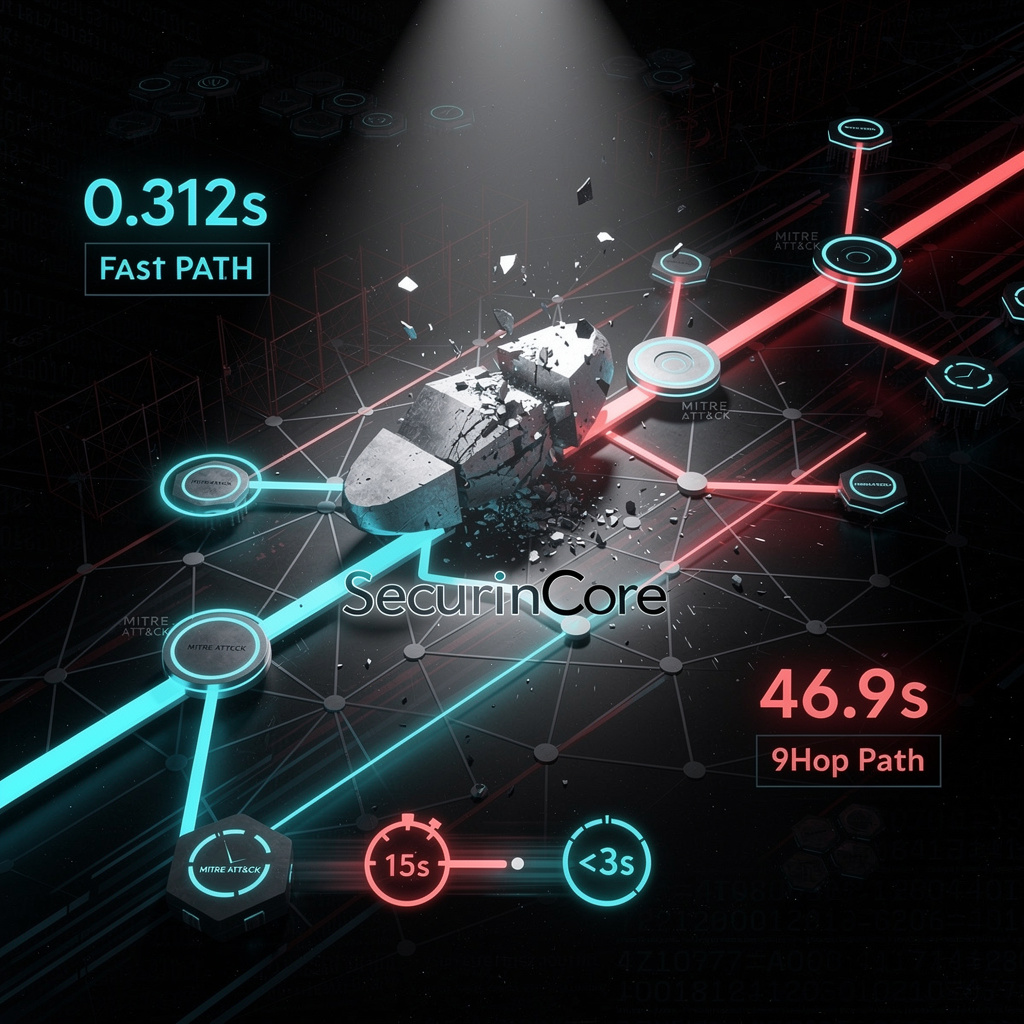

Every AI call runs inside a deterministic lifecycle; the result is hashed and anchored to the Makalu Testnet with an ECDSA provenance receipt. A zk-SNARK certificate (≤200 kB, ≤30 k gas) travels with the output, convincing any verifier that the model was executed faithfully without revealing weights. A cost-governance API lets coders set a hard gas ceiling; exceed it and the runtime aborts, sparing users the surprise $2,000 inference bill that sank earlier experiments.
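The hard gas ceiling and the hashed result can be sketched as follows. Every name here (`GasMeter`, `GasCeilingExceeded`, `run_with_ceiling`) is hypothetical and is not the Lithic API. SHA-256 stands in for the unspecified hash, and the ECDSA receipt and zk-SNARK certificate are left out entirely.

```python
import hashlib


class GasCeilingExceeded(RuntimeError):
    """Raised when a step would push usage past the caller's hard ceiling."""


class GasMeter:
    def __init__(self, ceiling: int) -> None:
        self.ceiling = ceiling
        self.used = 0

    def charge(self, units: int) -> None:
        # Abort before spending, so usage never exceeds the ceiling.
        if self.used + units > self.ceiling:
            raise GasCeilingExceeded(
                f"step needs {units}, only {self.ceiling - self.used} left"
            )
        self.used += units


def run_with_ceiling(steps, ceiling: int):
    """Run (name, gas_cost) steps in order; return (result digest, gas used)."""
    meter = GasMeter(ceiling)
    completed = []
    for name, cost in steps:
        meter.charge(cost)
        completed.append(name)
    # Same steps in the same order always produce the same digest.
    digest = hashlib.sha256("|".join(completed).encode("utf-8")).hexdigest()
    return digest, meter.used


# Illustrative step costs, chosen only to fit under a 30k ceiling.
digest, used = run_with_ceiling([("load", 10_000), ("infer", 15_000)], ceiling=30_000)
print(used)  # 25000
```

Adding a third step that costs 8,000 units would raise `GasCeilingExceeded` instead of completing, mirroring the abort-on-overrun behaviour described above.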

Early ripples

- Developer traction: prototype submissions tripled in the first week, hitting 1,200 new agents.

- Security: two external audits found zero critical flaws in LSCL modules.

- Market signal: Google and NVIDIA unveiled rival integrated stacks within days, confirming a sector-wide sprint toward verifiable AI.

Short-term outlook

- Q2 2026: ≥50 certified AI providers plug in via LEP100-2; expect cross-chain deployment buttons for Ethereum and Solana.

- Q3 2026: DeFi risk bots and autonomous sensor dApps enter beta, targeting 10,000 daily zk-proofs.

Long-term horizon

- 2027-2028: if LEP100-* specs reach RFC status, “AI-verified” lending pools and insurance contracts could handle $5 billion in on-chain principal, with each proof-backed decision reducing compliance overhead by an estimated 35%.

- 2029: specialized WASM-AI runtimes may push inference latency below 5 ms, opening real-time algorithmic trading to deterministic, auditable agents.

Sectoral takeaway

By fusing cryptographic receipts with developer-friendly tooling, Lithosphere isn’t merely adding AI to blockchain—it is turning smart contracts into accountable data scientists. Industries that treat auditability as currency, from trade finance to medical supply chains, may soon judge a model not by its accuracy alone but by the ease with which it can prove itself.

In Other News

- AI scribes now used by 40% of Australian GPs, raising regulatory concerns over bias and patient consent

- 79% of Australian children aged 10–17 use AI companion bots, with 50% reporting negative psychological impact

- Sashiko, an AI-powered Linux kernel bug-detection tool using Gemini 3.1 Pro, identifies 53% of bugs missed by human reviewers

- Kupando raises €10M to advance AI-driven agritech automation in Sub-Saharan Africa
