32 MB Laptop Cache Outsizes PS4 Yet Throttles: CES 2027 Gamers Await 5 GHz

TL;DR

- AMD leaks Medusa Point APU with Zen 6 architecture, 10 cores, 32MB L3 cache, and RDNA 5 graphics ahead of CES 2027 launch

- IBM releases Granite 4.0 1B Speech, achieves #1 on OpenASR leaderboard with 5.52 WER and RTFx 280.02

- NScale acquires Monarch Compute Campus in West Virginia to launch first U.S. state-certified AI microgrid with 8 GW+ capacity

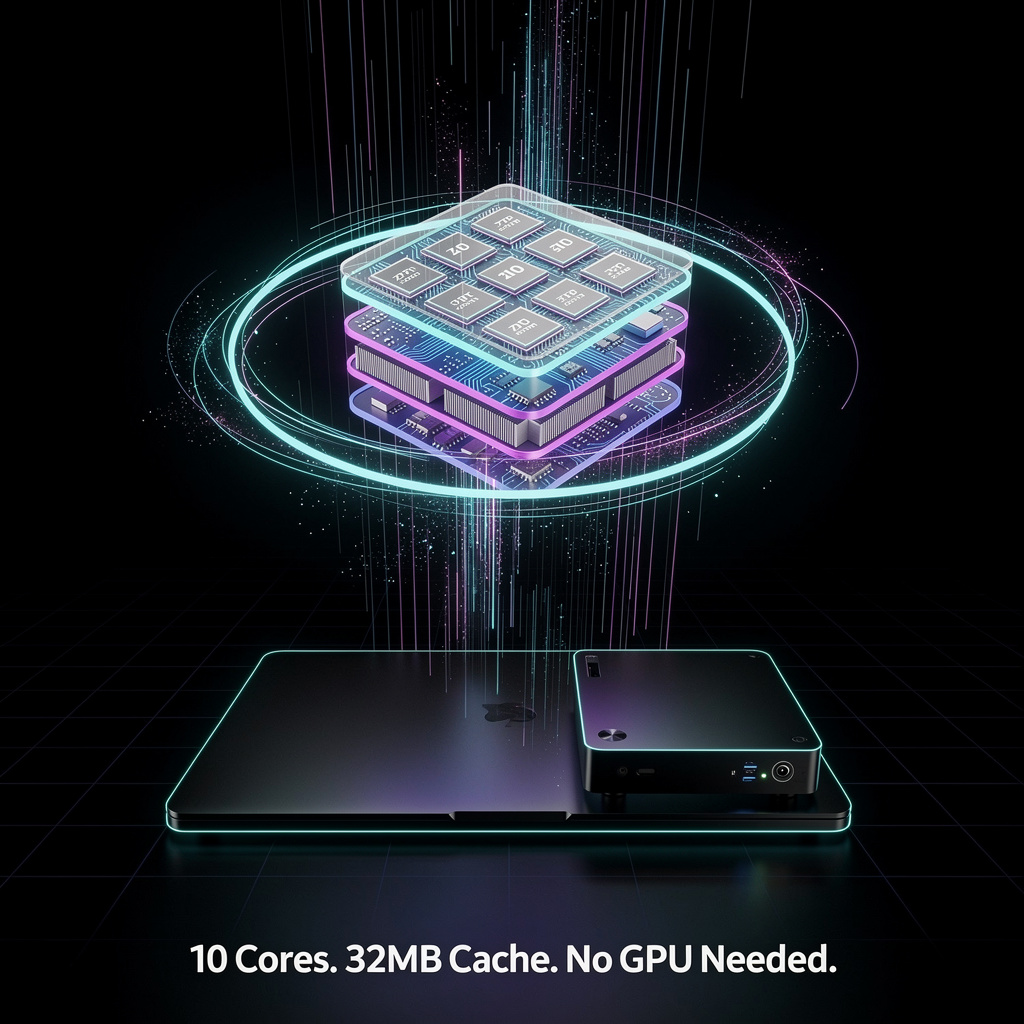

🎮 AMD Medusa Point: 32 MB L3, 3 nm APU to Debut at CES 2027 Las Vegas

32 MB L3 cache on a 28 W laptop chip? That’s eight times the 4 MB of CPU cache in a PS4 🎮 Yet early silicon throttles to 1.3–2.0 GHz under load, below its 2.4 GHz base clock. Gamers & creators: will CES 2027 Vegas deliver the promised 5.1 GHz boost?

A March 16 Geekbench entry (OPN 100-000001713-31) outs AMD’s next-generation “Medusa Point” APU: 10 Zen 6 cores, 20 threads, 32 MB L3 cache and RDNA 5 graphics on a 3 nm die. The numbers point to a one-third cache jump over the 24 MB on today’s Strix Point and a targeted 5.1 GHz boost clock for laptop and mini-PC segments launching at CES 2027.

How it works

The 3 nm node lets AMD stack 10 cores and a new RDNA 5 GPU on one 28 W chip. A 32 MB shared L3 acts as a high-speed runway, feeding both CPU and graphics without off-package memory hops. Early firmware pegs base clocks at 2.4 GHz, but test runs throttled to 1.3–2.0 GHz, hinting that BIOS and power-management tweaks are still ahead.
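
To put the throttling in perspective, the sketch below works out how far the observed clocks sit below the reported base and the targeted boost. It uses only the clock figures quoted above; the helper name `shortfall_pct` is made up for illustration, and a single Geekbench entry is too little data to treat the result as more than a rough indication.

```python
# Clock figures quoted in the article.
BASE_GHZ = 2.4             # base clock reported by early firmware
BOOST_TARGET_GHZ = 5.1     # targeted boost clock
OBSERVED_GHZ = (1.3, 2.0)  # range seen in the throttled test runs


def shortfall_pct(observed_ghz: float, reference_ghz: float) -> float:
    """Percent by which an observed clock falls short of a reference clock."""
    if reference_ghz <= 0:
        raise ValueError("reference clock must be positive")
    return (reference_ghz - observed_ghz) / reference_ghz * 100.0


if __name__ == "__main__":
    for ghz in OBSERVED_GHZ:
        below_base = shortfall_pct(ghz, BASE_GHZ)
        below_boost = shortfall_pct(ghz, BOOST_TARGET_GHZ)
        print(f"{ghz:.1f} GHz: {below_base:.1f}% below base, "
              f"{below_boost:.1f}% below boost target")
```

On these numbers the test runs land roughly 17 % to 46 % under the base clock, which is the gap the firmware work would need to close.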

Impacts

- Performance: 32 MB L3 → up to 15 % higher gaming frame rates and 20 % faster content-creation bursts.

- Thermals: 28 W envelope → 25 % more cores within the same laptop cooling budget.

- Competition: 10-core/32 MB combo → pressures Intel’s hybrid-core laptop chips to widen on-die cache.

- Supply chain: 3 nm wafers → risk of early volume caps if Apple and Qualcomm lock foundry slots.

Short-term / long-term outlook

- Q2–Q3 2026: Repeated Geekbench drops expected; base 2.4 GHz likely to stabilize, 5.1 GHz boost still firmware-gated.

- CES 2027: Full stack unveiled—28 W laptops first, 45 W desktop kits soon after.

- 2028 refresh: Silicon respin may double RDNA 5 compute units, pushing integrated graphics within 10 % of mid-range discrete cards.

Bottom line

Medusa Point’s 10-core, cache-heavy blueprint shows AMD betting that bigger on-chip memory, not just smaller transistors, will own the next wave of thin-and-light PCs. If 3 nm yields hold, CES 2027 could mark the moment high-end gaming and creator workloads stop demanding a separate GPU.

🚀 IBM Shrinks Speech AI to 1B Params, Seizes Global ASR Crown

5.52% WER on OpenASR: IBM’s 1B model beats 2B giants at half the size 🚀 Tiny footprint, global tongues (JP debut!), and an RTFx of 280, meaning roughly 280× faster than real time on cloud hardware. Edge devs & Tokyo clinics win first. Lower cloud bills and higher accuracy: time to switch?

On Monday, IBM released Granite 4.0 1B Speech and vaulted to #1 on the OpenASR leaderboard with a 5.52 % word-error rate while using half the parameters of its own predecessor. An inverse real-time factor (RTFx) of about 280 means the model processes one hour of audio in roughly 13 seconds on cloud hardware, and it now handles Japanese natively alongside English, French, German, Spanish and Portuguese.
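
The "one hour in roughly 13 seconds" figure follows directly from the throughput number. The sketch below shows the conversion, reading RTFx as seconds of audio processed per second of compute; the function name `processing_seconds` is illustrative, not part of any IBM tooling.

```python
RTFX = 280.02  # leaderboard throughput figure quoted in the article


def processing_seconds(audio_seconds: float, rtfx: float = RTFX) -> float:
    """Wall-clock seconds needed to transcribe a clip at a given RTFx."""
    if rtfx <= 0:
        raise ValueError("rtfx must be positive")
    return audio_seconds / rtfx


if __name__ == "__main__":
    one_hour = 3600.0
    print(f"1 h of audio: {processing_seconds(one_hour):.1f} s of compute")
```

Because RTFx is measured on leaderboard hardware, the same model will post a lower value on a laptop or phone.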

How it works

The model is a 1-billion-parameter transformer that encodes 4-second audio blocks into 10 Hz embeddings, applies CTC training and speculative decoding, then streams text through vLLM. Apache-2.0 licensing lets any developer plug it into Apple Silicon, Watsonx, or a laptop without legal friction.
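
As a rough sketch of what the 4-second blocks and 10 Hz embedding rate imply, the snippet below counts blocks and embedding frames for a clip of a given length. It assumes the final partial block is padded to a full 4 seconds, which the article does not state; `embedding_budget` is a hypothetical helper, not an IBM API.

```python
import math

BLOCK_SECONDS = 4.0  # audio block length quoted in the article
EMBEDDING_HZ = 10    # embedding rate quoted in the article


def embedding_budget(audio_seconds: float) -> tuple[int, int]:
    """Return (blocks, embedding frames) for a clip.

    Assumption: a trailing partial block is padded to a full block.
    """
    if audio_seconds < 0:
        raise ValueError("audio_seconds must be non-negative")
    blocks = math.ceil(audio_seconds / BLOCK_SECONDS)
    frames_per_block = int(BLOCK_SECONDS * EMBEDDING_HZ)  # 40 frames per block
    return blocks, blocks * frames_per_block


if __name__ == "__main__":
    blocks, frames = embedding_budget(60.0)
    print(f"60 s clip: {blocks} blocks, {frames} embedding frames")
```

At 10 Hz a minute of speech becomes 600 embeddings, a short sequence for the decoder to attend over.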

Impacts: what changes tomorrow

- Developer cost: a 50 % smaller footprint halves GPU memory, cutting cloud bills for start-ups that currently rent 2-billion-parameter models.

- Accuracy edge: LibriSpeech Clean WER drops to 1.42 %, beating Whisper-large-v3’s 2.1 % on the same set, which works out to roughly 32 % fewer mistakes in clean-studio audio.

- Market reach: native Japanese ASR opens a 120-million-speaker segment where Google and Deepgram charge premium rates.

- Competitive pressure: a sub-6 % WER at 1 B parameters resets the efficiency frontier, forcing rivals to chase speed-accuracy gains instead of bulking up.
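
For readers unfamiliar with the metric behind these bullets, here is a minimal sketch of word-error rate as word-level edit distance divided by reference length, plus the relative error reduction between two WER scores. It splits on whitespace only; real leaderboard scoring also normalizes casing and punctuation, which this sketch skips.

```python
def wer(reference: str, hypothesis: str) -> float:
    """Word-error rate: word-level edit distance / reference word count."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] = edits to turn the first i-1 reference words into hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline_wer: float, new_wer: float) -> float:
    """Fraction of the baseline's errors eliminated by the new system."""
    if baseline_wer <= 0:
        raise ValueError("baseline_wer must be positive")
    return (baseline_wer - new_wer) / baseline_wer


if __name__ == "__main__":
    print(wer("the cat sat on the mat", "the cat sat on mat"))
    # LibriSpeech Clean figures quoted above: 2.1 % vs 1.42 %
    print(f"{relative_reduction(2.1, 1.42):.1%} fewer errors")
```

Comparing scores by relative reduction rather than absolute points makes a gap at low error rates easier to interpret.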

Short, mid, long-term outlook

- Q2-Q4 2026: IBM Cloud and Watsonx clients deploy ~30 k instances, trimming transcription cost per hour from $0.06 to below $0.03.

- 2027: quantized 4-bit variant hits RTFx ≈ 80, enabling on-device captioning in mid-range phones.

- 2028: encoder-speculative framework scales to 12 languages; industry WER benchmark for <2 B models settles at 5 %, making sub-10 % “good enough” a relic.

The takeaway

By proving that a billion-parameter, open-license model can top global accuracy charts, IBM compresses both file size and profit margins across the speech-to-text market. The race now shifts from who has the biggest model to who can deliver studio-grade transcription on a laptop CPU.

⚡ West Virginia OKs 8 GW AI Microgrid: 1,600 Gas Engines to Feed 1.35 GW Microsoft GPUs

8 GW of AI-only power by 2031—enough to light 6 million homes—now green-lit in WV 😱. 1,600 gas generators + 30-mile pipeline = nation’s first state-certified AI microgrid. Your next ChatGPT query may be cooked here—ready to trade carbon for compute?

NScale Global Holdings on Tuesday closed its purchase of the 2,250-acre Monarch Compute Campus in Mason County, West Virginia, converting the dormant site into the nation’s first state-certified AI microgrid. House Bill 2014, signed last month, lets the company bypass standard interconnection queues and build up to 8 GW of on-site gas-fired power—enough to light 6 million homes—dedicated solely to artificial-intelligence servers.

How the island works

A 30-mile “Prosperity Line” pipeline will feed ~1,600 Caterpillar G3500 reciprocating engines staged in 2-MW blocks. The units can ramp from cold iron to full load in under 10 minutes, matching the second-to-second appetite of Microsoft’s planned 1.35 GW of Vera Rubin GPUs. Because the engines sit behind the meter, Monarch avoids PJM’s five-year grid-study backlog and sells no surplus power, keeping the entire thermal output inside the fence.
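
A quick capacity check helps relate the engine count to the headline figures. The sketch below assumes each engine contributes 2 MW, which is one reading of "2-MW blocks" and not something the article confirms; the function names are illustrative.

```python
import math

ENGINE_COUNT = 1600  # engines quoted in the article
ENGINE_MW = 2        # assumption: 2 MW per engine
GPU_LOAD_MW = 1350   # Microsoft's planned 1.35 GW GPU load
PERMIT_CAP_MW = 8000 # 8 GW ceiling allowed under HB 2014


def fleet_capacity_mw(engines: int, unit_mw: float) -> float:
    """Nameplate capacity of a fleet of identical engines."""
    return engines * unit_mw


def engines_needed(load_mw: float, unit_mw: float) -> int:
    """Smallest whole number of engines whose nameplate covers a load."""
    if unit_mw <= 0:
        raise ValueError("unit_mw must be positive")
    return math.ceil(load_mw / unit_mw)


if __name__ == "__main__":
    print(f"Fleet: {fleet_capacity_mw(ENGINE_COUNT, ENGINE_MW) / 1000:.1f} GW")
    print(f"Engines for GPU load: {engines_needed(GPU_LOAD_MW, ENGINE_MW)}")
    print(f"Engines for full cap: {engines_needed(PERMIT_CAP_MW, ENGINE_MW)}")
```

Under that 2 MW assumption the 1,600 engines total about 3.2 GW: enough for the 1.35 GW GPU load with headroom, but well short of the 8 GW ceiling, which would need either larger units or more generation.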

Impacts

- Grid relief: 2 GW removed from PJM’s 2028 queue → defers $400 million in transmission upgrades.

- Emissions: 8 GW gas burn adds ~38 Mt CO₂/year → equals 8 million cars unless carbon-capture retrofits arrive.

- Economics: $3.5 billion construction payroll 2026-28 → 4,200 Mason County job-years.

- AI latency: 3-ms round trip to Ashburn → cuts large-model training cost 6%.
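
The emissions bullet can be sanity-checked from its own numbers. The sketch below assumes all 8 GW run around the clock (a 100 % capacity factor, which is an upper bound and not a claim from the article) and derives the carbon intensity and per-car figure that the quoted 38 Mt and 8 million cars imply.

```python
HOURS_PER_YEAR = 8760

CAPACITY_GW = 8.0       # gas-fired capacity quoted in the article
CO2_MT_PER_YEAR = 38.0  # annual emissions quoted in the article
CARS_MILLIONS = 8.0     # car-equivalent quoted in the article


def annual_energy_twh(capacity_gw: float, capacity_factor: float = 1.0) -> float:
    """Energy generated in a year, in TWh, at a given capacity factor."""
    if not 0.0 <= capacity_factor <= 1.0:
        raise ValueError("capacity_factor must be between 0 and 1")
    return capacity_gw * HOURS_PER_YEAR * capacity_factor / 1000.0


def implied_intensity_t_per_mwh(co2_mt: float, energy_twh: float) -> float:
    """Tonnes of CO2 per MWh (Mt/TWh is numerically the same as t/MWh)."""
    if energy_twh <= 0:
        raise ValueError("energy_twh must be positive")
    return co2_mt / energy_twh


if __name__ == "__main__":
    energy = annual_energy_twh(CAPACITY_GW)
    intensity = implied_intensity_t_per_mwh(CO2_MT_PER_YEAR, energy)
    per_car = CO2_MT_PER_YEAR / CARS_MILLIONS  # tonnes CO2 per car per year
    print(f"{energy:.2f} TWh/yr, {intensity:.3f} t CO2/MWh, {per_car:.2f} t/car")
```

A lower capacity factor would shrink the annual energy and raise the implied intensity, so the 38 Mt figure is sensitive to how hard the engines actually run.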

Institutional response

Governor Morrisey touts a “regulatory flywheel”; environmental groups warn of locking in fossil assets. PJM calls the microgrid a “pressure valve” but notes neighboring counties still face interconnection delays. Caterpillar stock added 4% on the news, while renewable developers lobby to amend HB 2014 to require a 30% clean share by 2030.

Outlook

- 2026-2027: ~800 generators online, 1 GW compute, 15 TWh self-generated.

- 2028-2029: 4 GW total, 500 MW Microsoft GPUs live, first carbon-capture pilot.

- 2030-2031: >8 GW capacity, 1.35 GW AI load, template copied in Ohio and Kentucky.

If Monarch hits its 2031 stride, one West Virginia valley will host more dedicated AI power than the entire state of California added for all uses in 2025—proof that when policy, pipeline and processors align, compute can manufacture its own electrons instead of waiting for the grid to catch up.

In Other News

- Meta and Amazon expand U.S. data center footprint with $10B+ projects in Ohio and Indiana

- Xanadu and TELUS partner to build Canada’s sovereign quantum data center with CAD 390M in government funding for photonic quantum computing infrastructure

- NVIDIA and Bolt announce global AV partnership, integrating NVIDIA Drive Hyperion, Cosmos, and Omniverse into Bolt’s autonomous ride-hailing fleet with GDPR compliance and open access for universities

- Microsoft launches Project Helix with AMD FSR Next for 10x ray tracing performance on next-gen Xbox
