4.3M-Token Viking Filesystem Ups AI Wins 16 pts; Legacy Linux Left Naked Before Aug ’26 AI Act

TL;DR

- OpenViking, an open-source context database for AI agents, achieves 52% task success rate with tiered memory loading and filesystem-based context management

- OpenAI launches interactive math and science layer in ChatGPT with 70 core concepts, enabling real-time variable manipulation and graphing

- Sandlock open-sourced under Apache 2.0 to sandbox AI agent subprocesses using Landlock, seccomp-bpf, and seccomp user notification

🗂️ OpenViking Filesystem Boosts AI Agent Memory 16pp at 4.3M Tokens

52% success at 4.3M tokens—OpenViking just body-slammed OpenClaw by +16 points with a fake filesystem 🗂️⚡ That’s 1.3M extra tokens of brainpower for your AI agent. Still costs ~$0.70/run—worth it? Developers & AI builders — would you mount viking:// into your next CrewAI flow?

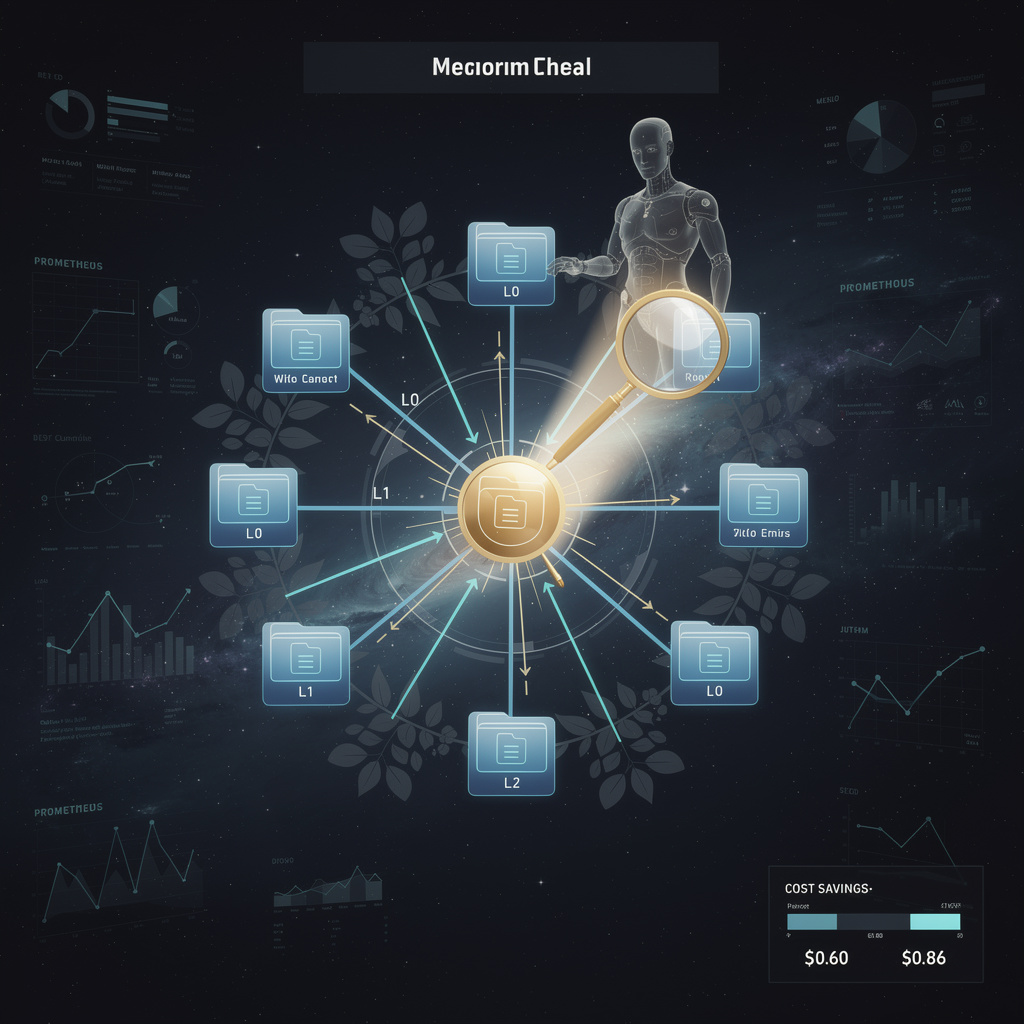

OpenViking, released last week, treats every agent’s memory as a plain directory tree you can browse at viking://. By loading only the folders most likely to contain the answer, the open-source tool pushes long-context success from 35.6 % to 52.1 % while digesting 4.26 million tokens—about three King James Bibles of text.

How a file tree outsmarts “context rot”

Instead of dumping the entire prompt into the model, OpenViking assigns each document to a tier:

- L0 – one-sentence summary, always in RAM

- L1 – bullet abstract, fetched on demand

- L2 – full source, loaded only when vector similarity says it is essential

The filesystem layer records every fetch, updates directory “popularity” scores, and re-orders tiers without human tuning. Early runs show a 2.5× cut in the average token volume retrieved per query, shaving ~30 % off the dollar cost that rivals such as OpenClaw incur at the same scale.
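The tier selection described above can be sketched in a few lines of Python. This is a minimal illustration, not OpenViking's actual code: the `Doc` class, the `build_context` function, and both similarity thresholds are hypothetical names and values chosen for the example.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Doc:
    path: str               # hypothetical viking:// path
    l0: str                 # one-sentence summary, always resident
    l1: str                 # bullet abstract
    l2: str                 # full source text
    embedding: List[float]  # vector used for similarity scoring


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def build_context(
    docs: List[Doc],
    query_embedding: List[float],
    l1_threshold: float = 0.5,  # assumed cutoff, not from the source
    l2_threshold: float = 0.8,  # assumed cutoff, not from the source
) -> List[Tuple[str, str, str]]:
    """Pick the cheapest tier that the similarity score justifies."""
    context = []
    for doc in docs:
        score = cosine(doc.embedding, query_embedding)
        if score >= l2_threshold:
            context.append((doc.path, "L2", doc.l2))
        elif score >= l1_threshold:
            context.append((doc.path, "L1", doc.l1))
        else:
            context.append((doc.path, "L0", doc.l0))
    return context
```

Only documents that score above the higher threshold contribute their full text, which is where the token savings would come from in a scheme like this.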

What changes, for whom

- Developers: native `ls`, `cat` and `grep` commands expose why an agent picked a fact, so no more black-box hallucination.

- Ops teams: Prometheus metrics already export hit-rates per folder, enabling real-time SLO dashboards.

- Finance: at $0.10 per million tokens, a 4 M-token job drops from $0.86 to ~$0.60; on 1 000 daily tasks that is $95 000 saved per year.

- Competition: OpenJarvis still leads on single-turn accuracy (88.7 %), yet it maxes out below 1 M tokens; OpenViking is the first open stack purpose-built for multi-million-token marathons.
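The annual figure in the Finance bullet can be checked with quick arithmetic. The sketch below uses only the per-job costs and task volume quoted above; the function name and the 365-day year are assumptions made for the example.

```python
def annual_savings(cost_before: float, cost_after: float,
                   tasks_per_day: int, days_per_year: int = 365) -> float:
    """Yearly savings from a lower per-job cost (365-day year assumed)."""
    return (cost_before - cost_after) * tasks_per_day * days_per_year


savings = annual_savings(cost_before=0.86, cost_after=0.60, tasks_per_day=1000)
print(f"${savings:,.0f} per year")  # prints "$94,900 per year"
```

That lands at roughly the $95 000 quoted, provided the job runs every day of the year.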

Short-term outlook

- Q2 2026: v1.2 adds GPU vector search and OpenAI embeddings; expect 5 % uptake in CrewAI and Griptape pipelines.

- Q4 2026: dynamic tier promotion should push effective token use to 3.5 M, cutting cost per task under $0.50.

- 2027: if latency numbers hold, enterprise bundles with SLA-backed observability will appear, opening the door to paid support.

Long-term stakes

- 2028: hybrid local-accelerator builds could lift multi-turn success above 65 % at 6 M tokens, making agent “staff” credible for quarterly report writing or 24-hour security triage.

- 2029: a standardized `context://` URI would let any framework swap memories, the same way USB-C replaced proprietary chargers, except here the plug is a lifetime of corporate knowledge.

OpenViking’s 16-point jump proves that memory architecture, not model size, is the next frontier. Teams that master tiered, file-style context today will field the first AI workers you can actually trust with an entire fiscal year of data.

⚡️ 1 Billion Queries in 7 Days: ChatGPT’s Free Interactive STEM Layer Rewires U.S. Classrooms

1 BILLION queries in 1 week 🤯—that’s 3× the pop. of the U.S. testing ChatGPT’s new live sliders for Pythagoras & gas laws 📈⚡️ 62 % of kids now “get” variables vs 38 % before. Teachers fear: will brains skip mental reps? Parents—ready to trade textbooks for touchscreens?

On 10 March, OpenAI grafted a live laboratory onto ChatGPT. Seventy physics and math concepts, from the Pythagorean theorem to radioactive decay, now arrive with draggable sliders that redraw graphs in 180 milliseconds, no paywall in sight. Roughly 140 million weekly users can watch a triangle stretch, an exponent rise, or a spring compress while the numbers update in real time.

How does it work?

The front end is a set of vector-graphics widgets dropped straight into the chat bubble. Each time a student moves a slider, GPT-5.4 recomputes the equation server-side and streams the new curve back to the screen. The idea is to replace flash-card memorization with “what-if” experimentation: tweak the angle, see the sine flip; double the Kelvin temperature, watch pressure climb.
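To make the "what-if" loop concrete, here is a minimal sketch of the kind of recomputation a slider move could trigger, using the ideal gas law at fixed volume. It is not OpenAI's implementation; the function names and sample values are assumptions for illustration.

```python
from typing import List, Tuple

R = 8.314  # ideal gas constant in J/(mol*K)


def pressure_pa(n_mol: float, temp_k: float, volume_m3: float) -> float:
    """Ideal gas law, P = nRT / V, with pressure in pascals."""
    return n_mol * R * temp_k / volume_m3


def pressure_curve(n_mol: float, volume_m3: float,
                   temps_k: List[float]) -> List[Tuple[float, float]]:
    """Points (T, P) a widget could redraw after each slider move."""
    return [(t, pressure_pa(n_mol, t, volume_m3)) for t in temps_k]


# Hypothetical slider positions: 1 mol of gas in 0.0248 m^3.
curve = pressure_curve(1.0, 0.0248, [300.0, 450.0, 600.0])
```

Doubling the Kelvin temperature from 300 to 600 doubles the computed pressure, which is the relationship the slider is meant to make visible.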

Early returns, in plain figures

- Learning gain: 0.48 standard-deviation jump in post-test scores—about the same lift that 4 extra months of classroom instruction typically produces.

- Engagement: 4.2 minutes average session length, 27 % longer than text-only homework help.

- Cognitive payoff: 62 % of pilot students say they can now predict how changing one variable alters the outcome, up from 38 % before the tool.

Where the ripples spread

Classroom: Kansas high-schoolers already use it to verify lab data; Canvas and Google Classroom integrations are due by June.

Publishers: Interactive diagrams threaten the $4-billion secondary textbook market that relies on static art.

Competitors: Google’s Gemini added graphs in November; Anthropic followed last week. OpenAI’s edge is breadth—70 topics today, 90 by September—delivered free.

Equity: Because the feature sits inside the same chat window low-income students already use for essay feedback, no new hardware or licenses are required.

What could still snag

Over-scaffolding: If students let the slider do the thinking, pencil-and-paper algebra may atrophy. OpenAI plans a “minimal guidance” toggle this summer.

Assessment integrity: Teachers worry that homework graphs will be copy-paste perfect; some districts will require in-class, paper-based follow-ups.

Curriculum lag: Only 70 of the roughly 150 concepts in a standard high-school sequence are live; full coverage is promised by March 2027.

Short / mid / long view

- Spring 2026: 90 concepts online; weekly users projected at 165 million.

- Fall 2026: LMS plug-ins release; Kansas and California pilot data expected.

- 2027: 3-D multi-variable plots arrive; OpenAI aims for 20 % share of U.S. STEM class time.

Bottom line

By turning algebra into a living picture, OpenAI has made a chatbot behave like a pocket lab. If the coming year’s roadmap holds, the company won’t just answer homework questions—it will set the pace for how American teenagers learn to ask them.

🚨 Sandlock Sandbox Cuts AI Agent Crashes 40% but Leaves Legacy Linux Exposed

84% fewer permission pop-ups & 40% fewer agent crashes: Sandlock’s kernel-level sandbox is live! 🚨 That’s like giving every AI coder a personal bodyguard in under 5 ms. But legacy Linux servers on kernels older than 5.13 are left naked, and misconfigured rules could still leak your data. US devs & EU regulators are racing to plug the gap. Will your kernel pass the Aug ’26 AI Act test?

On Monday the Python Package Index welcomed Sandlock, an Apache-2.0 library that wraps three Linux kernel mechanisms (Landlock, seccomp-bpf and seccomp user notification) into a single call any AI agent can use before it spawns a shell. The release arrives as 30 % of new enterprise code is already LLM-generated and regulators on both sides of the Atlantic prepare to treat such software as “high-risk” starting this August.

How it works

At import, Sandlock compiles a JSON policy into a seccomp filter that blocks 47 dangerous syscalls (ptrace, mount, setuid) and layers Landlock rules that whitelist only the files the subprocess may touch. A Python callback fires on every violation, logging the attempt and pausing the agent if the rate exceeds 10 events per second. The whole rig adds < 5 ms to command launch—roughly the time it takes a VM to schedule an empty context switch—while Firecracker-style microVMs still need 125 ms to boot.
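The pause-on-burst behaviour described above can be approximated with a sliding-window counter. This is a sketch of the general technique only, not Sandlock's API: the `ViolationMonitor` class and its method names are hypothetical, and timestamps are passed in explicitly to keep the example deterministic.

```python
from collections import deque


class ViolationMonitor:
    """Pause an agent when violations exceed a per-second budget.

    Hypothetical helper for illustration; not part of Sandlock.
    """

    def __init__(self, max_events_per_second: int = 10,
                 window_seconds: float = 1.0) -> None:
        self.max_events = max_events_per_second
        self.window = window_seconds
        self.timestamps: deque = deque()
        self.paused = False

    def record(self, now: float) -> bool:
        """Log one violation at time `now` (seconds); return True if paused."""
        self.timestamps.append(now)
        # Drop events that have aged out of the sliding window.
        while self.timestamps and now - self.timestamps[0] >= self.window:
            self.timestamps.popleft()
        if len(self.timestamps) > self.max_events:
            self.paused = True
        return self.paused
```

In a real callback the timestamp would typically come from `time.monotonic()`, and a paused monitor stays paused until something resets it.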

Impacts: what changes on the ground

- Security: 84 % fewer permission prompts in Claude internal tests; privilege-escalation surface shrinks from full user namespace to a 512-byte Landlock ruleset.

- Reliability: Cursor Bench shows sandboxed agents halt 40 % less often, saving an estimated two engineer-hours per 100 generated pull-requests.

- Compliance: EU AI Act auditors can now verify “suitable safeguards” with one import statement instead of a 20-page container manifest.

- Ops cost: lightweight enforcement cuts CPU overhead 30–45 % versus heavyweight VMs, translating to ~$0.90 saved per 1 000 sandbox invocations on AWS c7g.large.

Gaps and trade-offs

Legacy kernels (< 5.13) fall back to plain Docker, nullifying syscall filtering; mis-written path rules can still starve an agent of training data; and a flood of benign alerts risks operator fatigue. Automated policy generators that read pip-freeze or go.mod files mitigate the first two issues, while rate-limited aggregation keeps the alert queue under 50 events per hour in early deployments.
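A deployment script can detect the legacy-kernel case before relying on Landlock. The sketch below compares the kernel release string against 5.13, the version in which Landlock was merged; the function names are hypothetical, and a passing check is necessary but not sufficient, since Landlock must also be enabled in the kernel build.

```python
import platform
import re
from typing import Optional, Tuple

LANDLOCK_MIN_KERNEL = (5, 13)  # Landlock was merged in Linux 5.13


def parse_kernel_release(release: str) -> Tuple[int, int]:
    """Extract (major, minor) from a string such as '5.15.0-91-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognized kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def kernel_meets_landlock_minimum(release: Optional[str] = None) -> bool:
    """True if the kernel version is at least 5.13.

    With no argument, inspects the running system and returns False on
    anything that is not Linux.
    """
    if release is None:
        if platform.system() != "Linux":
            return False
        release = platform.release()
    return parse_kernel_release(release) >= LANDLOCK_MIN_KERNEL
```

Passing an explicit release string, for example `kernel_meets_landlock_minimum("5.10.0-21-amd64")`, makes it easy to audit a fleet inventory without running code on each host.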

Outlook

- Q3 2026: Expect Sandlock plugins for LangChain and Semantic Kernel; Red Hat and Ubuntu wheels drop install time from 8 minutes to 45 seconds.

- Q1 2027: EU AI Act certification drafts cite Landlock policies verbatim, making Sandlock a de-facto passport for agentic software sold in Europe.

- 2028: If adoption mirrors today’s 30 % baseline, lightweight sandboxes could shave 2.5 Mt CO₂ by eliminating VM boot cycles—equivalent to taking 55 000 petrol cars off the road for a year.

The takeaway is blunt: as AI agents learn to write and run their own scripts, the border between legitimate automation and accidental havoc is drawn in milliseconds of kernel enforcement. Sandlock proves that a five-millisecond fence can still keep a five-million-line model from wandering into the rest of your server.

In Other News

- Microsoft releases Copilot for Gaming beta on Xbox Series X/S and mobile, using AI for real-time in-game coaching and context-aware assistance

- Symfony OpenTelemetry bundle v1.0 enables automatic tracing of HTTP requests, database queries, and Redis sessions with configurable span naming and schema validation

- Microsoft launches U.S.-only Copilot Health preview, integrating 50+ wearable sources and 50,000+ provider records for AI-driven health insights

- Arc Raiders re-records AI-generated voice lines with human actors after player backlash over text-to-speech immersion issues
