India’s Data Centers Demand 1,280 MW — Equal to 6.5M Homes — Amid Water Crisis and $220B AI Rush

TL;DR

- India's data center capacity nears 1,280 MW with 44 petaflops of supercomputing power across 38 facilities

- Fujitsu and 1FINITY unveil MONAKA CPU with 144 Armv9-A cores and 2nm TSMC fabrication for HPC and AI workloads

- Rittal expands Blue e+ Chiller range with 70% energy reduction and ±0.5K precision for HPC and data center cooling

🌍 India’s Data Center Surge: $220B AI Boom Powers 1,280 MW Demand — But Water and Grids Are Straining

1,280 MW of power just to run India’s data centers, equivalent to 6.5 million homes. 🌍 That is double the figure of four years ago. And now $220B in AI investments are pouring in, with Google, Microsoft, Amazon and Reliance all racing to build gigawatt-scale campuses. But where will the water come from? AWS used 7.5B liters in 2023, while the supply benchmark for an Indian city dweller is 135 L per person per day. Who bears the cost: consumers, farmers, or the grid? 🤔

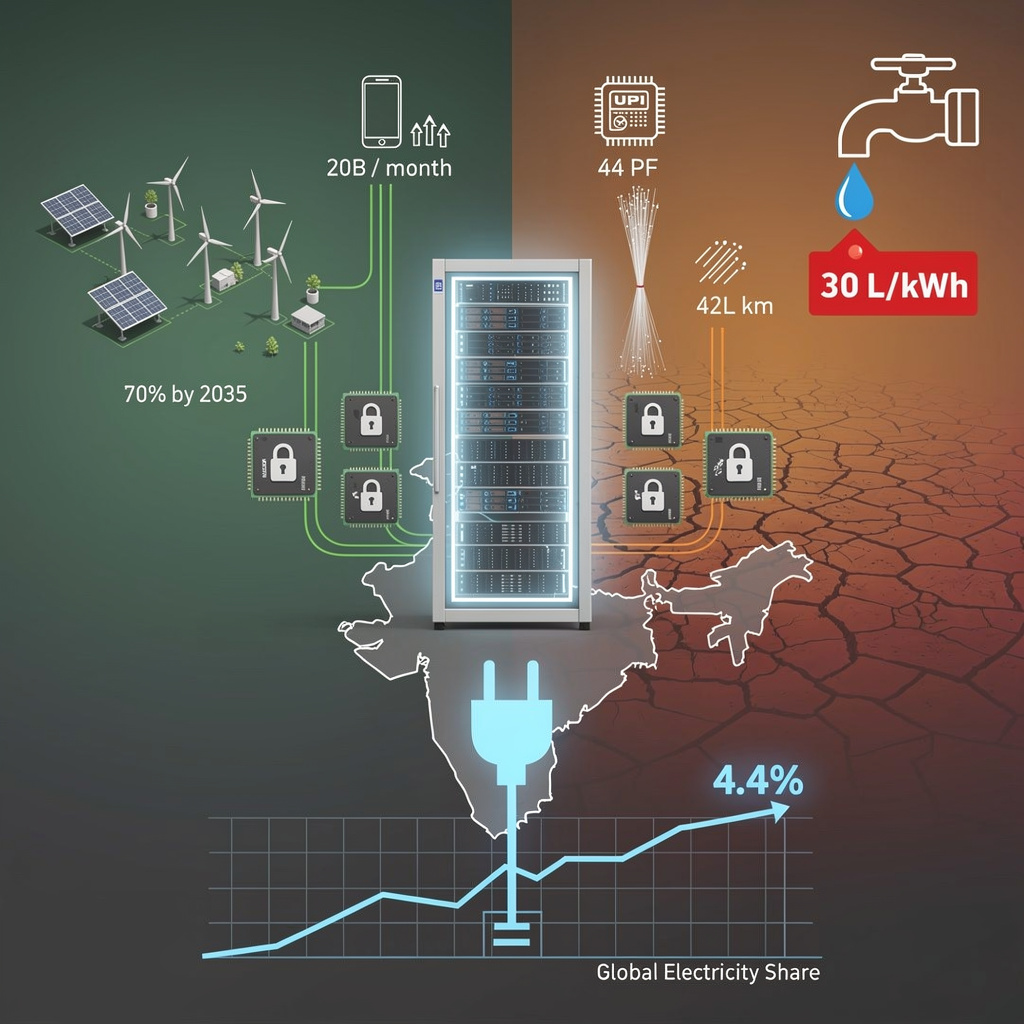

India’s data-center fleet has quietly doubled to 1,280 MW in four years, equal to the power demand of 6.5 million homes. Thirty-eight institutional supercomputers add 44 petaflops, enough raw cycles to process every UPI transaction, genome sequence and weather model the country produces. Yet data centers, the same kind of racks that enable 20 billion monthly UPI payments, are on track to consume 4.4% of global electricity by 2035, up from 1.5% today. The question is no longer whether India can scale compute, but whether the grid and the tap can keep up.
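The household comparison can be sanity-checked with a few lines of arithmetic. The sketch below uses only the figures quoted above (1,280 MW, 6.5 million homes, a doubling over four years). The function names are illustrative, and it assumes the load is drawn continuously all year, which real facilities and homes will not match exactly.

```python
HOURS_PER_YEAR = 8760  # assumption: flat, year-round draw


def watts_per_home(total_mw: float, homes: float) -> float:
    """Average continuous draw per home implied by the comparison."""
    return total_mw * 1_000_000 / homes


def annual_kwh_per_home(total_mw: float, homes: float) -> float:
    """Yearly energy per home if that draw were sustained all year."""
    return watts_per_home(total_mw, homes) * HOURS_PER_YEAR / 1000


def annual_growth_from_doubling(years: float) -> float:
    """Compound annual growth rate implied by doubling over `years`."""
    return 2 ** (1 / years) - 1


if __name__ == "__main__":
    print(f"{watts_per_home(1280, 6.5e6):.1f} W per home")
    print(f"{annual_kwh_per_home(1280, 6.5e6):.0f} kWh per home per year")
    print(f"{annual_growth_from_doubling(4):.1%} growth per year")
```

On these inputs the comparison works out to roughly 197 W of continuous draw, or about 1,725 kWh a year, per home, and the four-year doubling implies growth of about 18.9% a year.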

How the boom is wired

Policy threw the switch. A tax holiday on foreign cloud revenue routed through Indian sites lured Google-Adani, Microsoft and Amazon to commit $67.5 billion in two years. BharatNet’s 42.36 lakh km of fibre, up from 19.35 lakh km in 2019, connects those data halls to 99.9% of districts. The payoff: India already generates 46% of Asia-Pacific AI traffic while hosting only 3% of the world’s server halls.

What scales, what strains

- Energy: 1 GW more capacity by 2027 will raise Delhi-NCR peak load 8 %—equal to adding a new Noida-sized city each quarter.

- Water: AWS used 7.5 billion litres in 2023; upcoming hyperscale sites will draw on the same municipal supplies that are benchmarked at 135 L per urban resident per day.

- Chips: 100 MW of Tata-built capacity earmarked for OpenAI arrives in 2026, yet every advanced GPU inside it still ships through export-control chokepoints.
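To put the water figure in household terms, the sketch below converts an annual volume into the number of people it would supply at a per-capita daily allowance. Both inputs (7.5 billion litres, 135 L per person per day) come from the text. The function name is hypothetical and the calculation ignores recycling, seasonality and where the water is actually drawn.

```python
AWS_LITRES_2023 = 7.5e9           # annual figure quoted in the article
LITRES_PER_PERSON_PER_DAY = 135   # per-capita figure quoted in the article


def people_equivalent(annual_litres: float,
                      litres_per_person_per_day: float = LITRES_PER_PERSON_PER_DAY,
                      days_per_year: int = 365) -> float:
    """People who could be supplied for a year from `annual_litres`."""
    return annual_litres / days_per_year / litres_per_person_per_day


if __name__ == "__main__":
    people = people_equivalent(AWS_LITRES_2023)
    print(f"Roughly {people:,.0f} people at 135 L per day")
```

On those two numbers alone, 7.5 billion litres a year is the daily allowance of about 152,000 people, the population of a mid-sized town.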

Short-term surge, mid-term squeeze

- 2026–2027: Capacity hits 1,470 MW; a 45% renewable share displaces 15 GWh of coal-fired generation a year.

- 2028–2030: 3 GW online, two exascale machines; AI services add 1 % to GDP.

- 2031–2035: 5–6 GW cluster, 70 % green power; water-recycling must drop usage below 30 L per kWh or face municipal curbs.

Keep the lights on, keep the taps closed

Mandatory environmental audits for AI loads above 50 MW, recycled-water tariffs and solar-plus-storage PPAs are already on the policy whiteboard. Without them, India’s next data megawatt could cost a megaton of CO₂ and a megalitre of scarce water. The cloud race is won in the power and plumbing trenches—hardware alone no longer suffices.

🤯 144-Core MONAKA CPU Takes Aim at 415.53 PFLOPS-Class Systems as TSMC’s 2nm Breakthrough Reshapes Global HPC Race

415.53 PFLOPS of FP64 power, more than the entire Top500 supercomputer list delivered in 2005 🤯. That is a full-system figure, the score Fugaku posted on the June 2020 Top500 list, not the output of one chip. MONAKA’s 144-core Armv9-A chiplet stack, built on TSMC’s 2nm node, is pitched as a building block for machines in that class and beyond. Dual-socket systems hit 288 cores. But who gets access: Japanese HPC labs, or global cloud giants with deeper pockets?

Fujitsu and start-up 1FINITY on Monday showed off a fist-sized processor package that crams 144 Armv9-A cores onto silicon built on a 2-nanometre process, the first public proof that TSMC’s newest node can leave the lab and head straight for exascale supercomputers.

Built as four 36-core chiplets stacked face-to-face with hybrid copper bonding, the processor marries a 2 nm logic layer to 5 nm cache and I/O tiles, a hybrid recipe that keeps yields high while harvesting the 15 % speed-up TSMC advertises for 2 nm over 3 nm.

Broadcom’s XDSiP package shuttles data between dies at PCIe 6.0 speeds and gives each socket twelve DDR5 channels plus CXL 4.0 memory expansion, a combination that turns a single dual-socket motherboard into a 288-core node without extra silicon.

How the numbers translate to real-world clout

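- Raw compute: the 415 PFLOPS of FP64 and 1.4 EFLOPS of mixed precision quoted alongside MONAKA are system-scale figures (they match Fugaku’s 2020 HPL and HPL-AI results), enough to train a 175-billion-parameter language model in days, not weeks.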

- Core density: 144 cores per socket → a 42U rack can house >5,000 Arm SVE2-enhanced cores, shrinking a 2019-sized computer room into two rows of cabinets.

- Power: 2 nm cuts core-level watts by roughly one-sixth; at data-centre scale that equals 1.2 MW saved per 10,000-processor cluster, or the annual consumption of 300 Tokyo apartments.
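The density and power bullets reduce to simple division, sketched below. The 144 cores per socket, the 5,000-core rack figure and the 1.2 MW per 10,000 processors come from the text. The count of 18 dual-socket servers per rack is a hypothetical layout chosen for illustration, not a vendor specification.

```python
import math

CORES_PER_SOCKET = 144  # from the article


def sockets_for(target_cores: int, cores_per_socket: int = CORES_PER_SOCKET) -> int:
    """Smallest number of sockets that reaches `target_cores`."""
    return math.ceil(target_cores / cores_per_socket)


def rack_cores(servers: int, sockets_per_server: int = 2,
               cores_per_socket: int = CORES_PER_SOCKET) -> int:
    """Total cores in a rack of identical multi-socket servers."""
    return servers * sockets_per_server * cores_per_socket


def watts_saved_per_processor(cluster_saving_mw: float, processors: int) -> float:
    """Per-processor saving implied by a cluster-level saving in MW."""
    return cluster_saving_mw * 1_000_000 / processors


if __name__ == "__main__":
    print(sockets_for(5000), "sockets to pass 5,000 cores")
    print(rack_cores(18), "cores in 18 dual-socket servers (hypothetical rack)")
    print(watts_saved_per_processor(1.2, 10_000), "W saved per processor")
```

Passing 5,000 cores takes 35 sockets, so a rack of 18 dual-socket servers would already hold 5,184 cores, and the cluster-level saving corresponds to about 120 W per processor.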

Competitive scorecard

- Versus Intel Xeon “Diamond Rapids”: 2.3× the cores per socket, 1.8× the memory bandwidth, but software stack still maturing.

- Versus Nvidia Grace: equal Arm SVE2 support, yet MONAKA adds CXL 4.0 today while Grace stops at 3.0; Nvidia keeps the CUDA moat.

- Versus AMD Bergamo: 144 vs. 128 cores, both on chiplet roadmaps; AMD’s 5 nm part gives up the node lead but is already shipping, while MONAKA is not due until next year.

Timeline to touch down

- Summer 2026: volume-packaged parts roll off Broadcom’s U.S. line.

- Q1 2027: first commercial clusters online in Japan, ~5 % share of new HPC nodes.

- 2028: if yields hold, 12 % of AI-accelerated servers could be Arm-based, nudging Intel and AMD to fast-track their own 2 nm multi-die designs.

MONAKA is more than a wafer photo-op; it is a working template for 2 nm chiplet computing. If Fujitsu can keep the foundry taps open, the next arms race in semiconductors will be fought not on transistor size alone, but on who can stack, cool and program the most cores per watt.

💡 70% Energy Drop in AI Cooling: Rittal’s Blue e+ Chiller Lands in Australia & NZ—But Can It Handle 120kW Racks?

70% less energy? 🌡️💡 That is billed as the equivalent of a year’s electricity for 1,200 homes, while cooling AI racks at ±0.5 K precision. In Australia & NZ, where summer heat breaks cooling systems, this compact chiller keeps running at 50°C and cuts OPEX by half. But can it scale beyond 7 kW? Operators of data centers with 120 kW+ racks: can you afford to wait?

Rittal’s March 10 launch of three Blue e+ Hybrid IT chillers (1.5-7 kW) promises to cut data-center cooling electricity by 70% while holding water temperature within half a degree, tight enough to stop the latest AI GPUs from throttling. The units also shrink refrigerant charge by 55% and chassis volume by 90%, a pitch aimed at an Australian and New Zealand market where racks now hit 120-240 kW.

How the numbers add up

Inverter compressors switch to free-cooling whenever ambient drops below 18°C, eliminating compressor work for up to 3,500 hours a year in temperate zones. A 5 MW facility swapping legacy computer-room air conditioners for a row of 7 kW Blue e+ modules saves roughly AU$500,000 in annual power at today’s AU$150/MWh industrial tariff—enough to fund 200 extra high-density servers.
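The savings claim can be unpacked with the tariff and hour counts given above. This is a rough sketch with illustrative function names; it assumes a flat tariff all year and treats the 3,500 free-cooling hours as an upper bound, as the text does.

```python
HOURS_PER_YEAR = 8760


def mwh_saved(annual_saving_aud: float, tariff_aud_per_mwh: float) -> float:
    """Energy saving implied by a dollar saving at a flat tariff."""
    return annual_saving_aud / tariff_aud_per_mwh


def free_cooling_share(free_hours: float, hours_per_year: int = HOURS_PER_YEAR) -> float:
    """Fraction of the year the compressor can stay off."""
    return free_hours / hours_per_year


def budget_per_server(annual_saving_aud: float, servers: int) -> float:
    """Dollars available per server if the saving is spread evenly."""
    return annual_saving_aud / servers


if __name__ == "__main__":
    print(f"{mwh_saved(500_000, 150):,.0f} MWh saved per year")
    print(f"{free_cooling_share(3500):.1%} of the year on free cooling")
    print(f"AU${budget_per_server(500_000, 200):,.0f} per server")
```

At AU$150/MWh, AU$500,000 corresponds to about 3,333 MWh a year; 3,500 free-cooling hours is roughly 40% of the year; and spreading the saving over 200 servers leaves AU$2,500 for each.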

Impacts at a glance

- Energy: 70% cut equals 2.6 GWh saved per 1 MW of IT load each year → 1,300 t CO₂ avoided, or taking 280 cars off the road.

- Space: 90% smaller footprint frees one full rack per 20 kW of cooling, letting operators add GPUs instead of chillers.

- Precision: ±0.5 K stability keeps GPU core temps below 80°C, sustaining 99% utilisation during training runs.

- Refrigerant: 55% lower charge future-proofs against pending F-gas phase-downs, trimming potential carbon penalties.
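The energy bullet implies several figures it does not state outright, and the sketch below derives them. Inputs (70% cut, 2.6 GWh per MW of IT load, 1,300 t CO₂, 280 cars) are from the text. The assumption that the 1 MW IT load runs flat out for all 8,760 hours is mine, as are the function names.

```python
GWH_PER_MW_YEAR = 8.76  # 1 MW running for 8,760 hours (assumed full load)


def baseline_cooling_gwh(saved_gwh: float, cut_fraction: float) -> float:
    """Cooling energy before the upgrade, given the saving and the cut."""
    return saved_gwh / cut_fraction


def cooling_overhead(cooling_gwh: float, it_load_mw: float = 1.0) -> float:
    """Cooling energy as a fraction of IT energy over one year."""
    return cooling_gwh / (it_load_mw * GWH_PER_MW_YEAR)


def emission_factor_t_per_mwh(tonnes_co2: float, saved_gwh: float) -> float:
    """Grid emission factor implied by the avoided CO2."""
    return tonnes_co2 / (saved_gwh * 1000)


def tonnes_per_car(tonnes_co2: float, cars: int) -> float:
    """Annual CO2 per car implied by the comparison."""
    return tonnes_co2 / cars


if __name__ == "__main__":
    before = baseline_cooling_gwh(2.6, 0.70)
    after = before - 2.6
    print(f"Cooling before: {before:.2f} GWh ({cooling_overhead(before):.0%} of IT energy)")
    print(f"Cooling after:  {after:.2f} GWh ({cooling_overhead(after):.0%} of IT energy)")
    print(f"Emission factor: {emission_factor_t_per_mwh(1300, 2.6):.2f} t CO2/MWh")
    print(f"Per car: {tonnes_per_car(1300, 280):.2f} t CO2 per year")
```

Under those assumptions the legacy cooling load is about 3.7 GWh per MW of IT, or 42% of IT energy, and falls to about 13% after the cut. The CO₂ figure implies a grid factor of 0.5 t per MWh and roughly 4.6 t per car per year.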

Short, mid, long view

- 2026-2027: 10-15% of new ANZ cooling spend; Melbourne and Auckland pilots validate savings under 35°C summers.

- 2028-2032: Modular clusters scale to 21 kW blocks; certification for 45°C water loops taps high-temperature trend.

- 2033-2035: 25-30% share of ANZ liquid-cooling market, setting the ±0.5 K benchmark for global ASHRAE standards.

Bottom line

With AI racks already breaching 50 kW and electricity prices climbing, Rittal’s fridge-size chiller turns the cooling problem into a competitive edge—less power, more compute, zero degrees of waste.

In Other News

- Texas Instruments launches MSPM0G5187 and AM13Ex MCUs with TinyEngine neural processors for edge AI, reducing energy use by 120x

- Microsoft defaults hotpatch updates for Windows 11 devices starting May 2026, eliminating restart bottlenecks for 10M production devices

- NIO mass-produces 150 kWh semi-solid-state batteries with 360 Wh/kg energy density

- Oracle and OpenAI scrap $300B Abilene data center expansion due to power constraints, capping capacity at 1.2 GW
