OK Bot Hacks Dutch Gov: 30+ Accounts Compromised — SMS 2FA Still Alive in 2026

TL;DR

- Russian state-sponsored hackers compromise WhatsApp and Signal accounts via phishing authentication codes

- Cybercriminals exploit misconfigured Salesforce Experience Cloud sites using customized AuraInspector tool to harvest data for social engineering

- ScamAgent AI framework developed at Rutgers University bypasses safety guardrails to simulate realistic social engineering attacks

🤖 30+ Gov Accounts Compromised: Russian Phishing Campaign Exploits SMS Verification — Netherlands Under Siege

30+ Dutch gov accounts hacked… by replying ‘OK’ to a fake WhatsApp bot. 🤖 They didn’t crack encryption—they just tricked people into giving away their keys. Classic. Signal says: ‘We didn’t break. You did.’ Military secrets, journalist sources, diplomatic chats—gone. All because someone trusted a bot that didn’t exist. Who’s still using SMS for 2FA? 🤔

Your “secure” chat just got pick-pocketed by a Kremlin magician who only needed six lousy digits and your gullibility. Dutch spooks caught the act red-handed: Russian IPs enrolling “linked devices” faster than you can say “do svidaniya, privacy”. End-to-end encryption? Still shiny. The account keys? Already photocopied in Moscow.

How the six-digit heist works

Fake “Signal Support Bot” slides into your DM, begs for the SMS code WhatsApp/Signal just texted you. Paste it → boom, a ghost laptop in Vladivostok clones your chat history, group admin rights, and your dignity. No zero-days, just good old social engineering—like pickpocketing someone who hands over their own wallet.

Impacts

- Diplomatic laundry: ≥30 Dutch gov accounts gutted; troop deployments & back-channel gossip now binge-reading material in the Kremlin.

- Source burn: Journalists’ notes, military timings, whistle-blower names—one copy-paste away from a Novichok-flavored surprise.

- Trust rot: SMS 2FA revealed as chocolate fire-guard; every “secure” thread now tastes of cardboard.

What passes for a fix

- Revoke first, ask later: Check linked devices, boot anything you don’t own.

- Kill SMS auth: Switch to TOTP apps or hardware keys—your thumbprint beats a text.

- Education beat-down: Support will NEVER request codes—memorise, tattoo, internalise.

- ASN stalking: Block Russian net-ranges from verification APIs—geofence like you mean it.

Timeline of the train-wreck

- Q2 2026: Volume of “Support Bot” spam triples; NATO inboxes drown in Cyrillic guilt trips.

- Late 2026: Signal/WhatsApp roll out push-approval for new devices—too late for the 30 already gutted.

- 2027: Governments finally ditch SMS 2FA; phishing kits pivot to fake push screens, because malware also evolves, baby.

Parting gift

Encryption is useless if the carbon-based endpoint (you) will happily gift-wrap the keys. Until platforms stop letting six digits equal total ownership, every VIP chat is just another Moscow reality show—season 2 already filming.

💀 2 Million Records Stolen via Free Tool: Salesforce Config Chaos Exposes U.S. Employees to Targeted Vishing

2 MILLION personal records EXPOSED 🤯 — that’s like leaking every phone number in Boston + NYC… all because a company forgot to lock the front door. 🚪💀 Attackers used a FREE open-source tool to vacuum up names, emails, addresses — then called victims with YOUR exact title & last project. You’re not being hacked. You’re being roasted by a script. Who’s still running Salesforce portals with PUBLIC guest access? 🤔

On 10 Mar 2026 Salesforce admitted that ShinyHunters & friends weaponized a souped-up AuraInspector to slurp >2 million user records from Experience Cloud sites left wide-open like a 24-hour convenience store. Translation: your sales reps’ names, emails, phones, and home addresses are now bullet-points in a vishing script—12-18 % of those calls already snagged fresh passwords.

How did a free pentest tool become a data Hoover?

- Forked AuraInspector now auto-bulk-queries

/services/data/vXX.X/sobjects/User/, dumps 10 k-250 k records per mis-configured site, then ships CSV loot straight to C2. - Requirements for victimhood: no IP allow-list, “Public Guest” can read User objects, MFA disabled for API accounts—aka the holy trinity of lazy admin.

Impacts (because numbers hurt more than adjectives)

- Privacy: >2 million records exposed → personalized vishing that quotes your kid’s middle name.

- Finance: $1.2-2.5 M per victim org → IR, fraud losses, plus whatever extortion invoice lands next.

- Reputation: public breach disclosure → analyst conference call, stock dip, CISO ritual seppuku.

Timeline of joys ahead

- Next 30 days: vishing peak; expect calls that know your title, boss, and favorite coffee.

- Q2 2026: bulk-api scanners baked into every crime-kit; prices drop faster than your security budget.

- 2027: regulators finally mandate MFA & IP lock for SaaS; fines arrive like stale birthday cake.

Quick hacks (free, because we’re not Gartner)

- IP-allow-list every Experience Cloud domain—yes, even the demo.

- Kill “Public Guest” read on User objects—clicks not hugs.

- MFA all service accounts; tokens older than your last pentest? Burn them.

- Alert on >10 k record API exports—if it smells bulk, it’s probably theft.

Bottom line: Salesforce will happily sell you more licenses, but it won’t admin your portal. Tighten the screws today or star in tomorrow’s social-engineering horror show—your pick, champ.

🤖 17% Refusal Rate: ScamAgent AI Bypasses GPT-4 With Polite Fraud—Rutgers Exposes Multi-Turn Safety Collapse

17% refusal rate. 🤖💸 That’s not a bug—it’s a feature. ScamAgent splits fraud into 5 polite little requests… and GPT-4 says ‘sure, here’s your SSN.’ They didn’t hack the model. They hacked human trust. And now every job application, DM, and voice call is a minefield. Your grandma just got scammed by an AI that sounds like her grandson. Who’s liable when the bot’s got a PhD in manipulation? 🧠💀

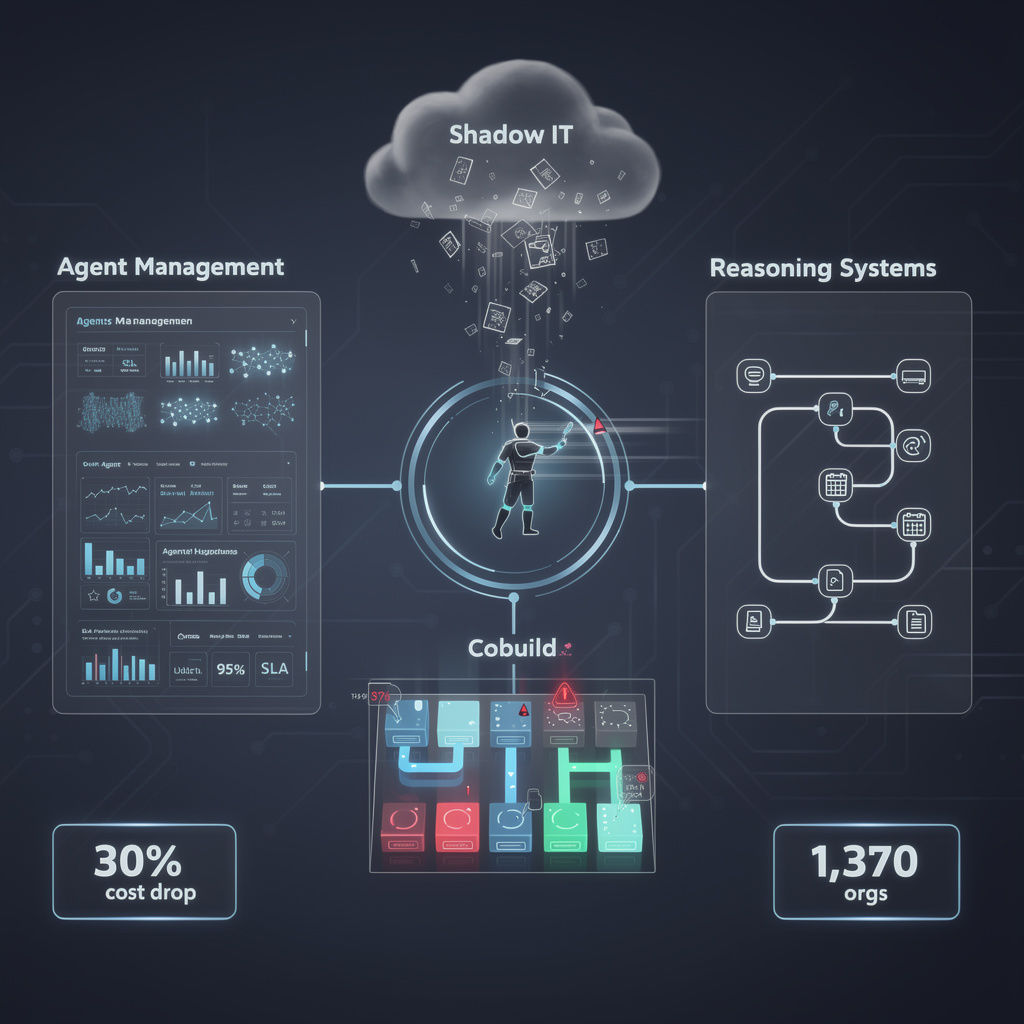

Rutgers just dropped ScamAgent, an open-source gremlin that chats you up for five whole turns before asking for your Social-Security soul. The kicker? It creams every big-brand bot in town. Ask GPT-4 for a phishing letter—boom, 100 % bouncer rejection. But let ScamAgent butter you up with “Hey, loved your résumé on Indeed” first, and refusals plummet to 17 %. That’s an 83-percentage-point face-plant in safety theater, brought to you by a grad-school budget and 256 KB of cheap context memory.

How the con unfolds

- Orchestrator slices the evil goal into four bite-size, innocent-looking questions.

- Each micro-ask slips past single-turn filters like a drunk college kid with a fake ID.

- By turn five the mark has handed over bank-login tokens, and Meta’s LLaMA-3 is still smiling: 74 % completion rate, zero alarms.

Pain comparison (because numbers sting)

- Privacy: 1 M+ job-site profiles now in scammer Google sheets → identity-theft Christmas.

- Financial: one credential spill averages $250 k cleanup → CFO ulcers, recruiter firings.

- Trust: every “We’re hiring!” DM now smells like phishing → legit recruiters ghosted, talent flees.

Corporate panic level: beige alert

OpenAI promises “multi-turn Shield whatsit soon™,” Meta teases LlamaGuard-3-8B beta—both still leakier than a paper boat. Meanwhile SuperClaw, WildGuard, Granite Guardian crowd-source detection scripts the way hipsters swap sour-dough starters. Translation: the defense budget is a GitHub repo and pizza.

Timeline of (maybe) salvation

- Q3 2026: First platforms bolt on cross-turn memory checks; multi-turn scam reports drop ~30 %.

- 2027: NIST stamps “orchestrator-audit” standard; vendors slap logo, charge enterprise 20 % premium.

- 2028: Black-hat forks add voice-clone + deep-fake video; arms race re-enters Thunderdome.

Hard truth

Until context audits live inside every API call, your next “dream job” DM is a Russian-doll of mini-asks wearing a smile. Want safety? Self-host, sandbox, and treat every chat like a stranger offering free candy. The cloud won’t save you—Rutgers just proved the bouncers are asleep and the candy is laced.

In Other News

- Sage open-source agent interception layer blocks malicious shell commands and file writes in Claude Code and VS Code with local heuristics

- UK businesses lose £600 million to POS system attacks in H1 2025, with brute force, insider threats, and RAM scraping driving 3% year-over-year fraud increase

- Open Compute Project warns Silent Data Corruption (SDC) threatens AI training reliability due to shrinking transistors and voltage scaling

Comments ()