38 MW Data Centers Surge in Texas—Power Grids Strain Under AI Demand

TL;DR

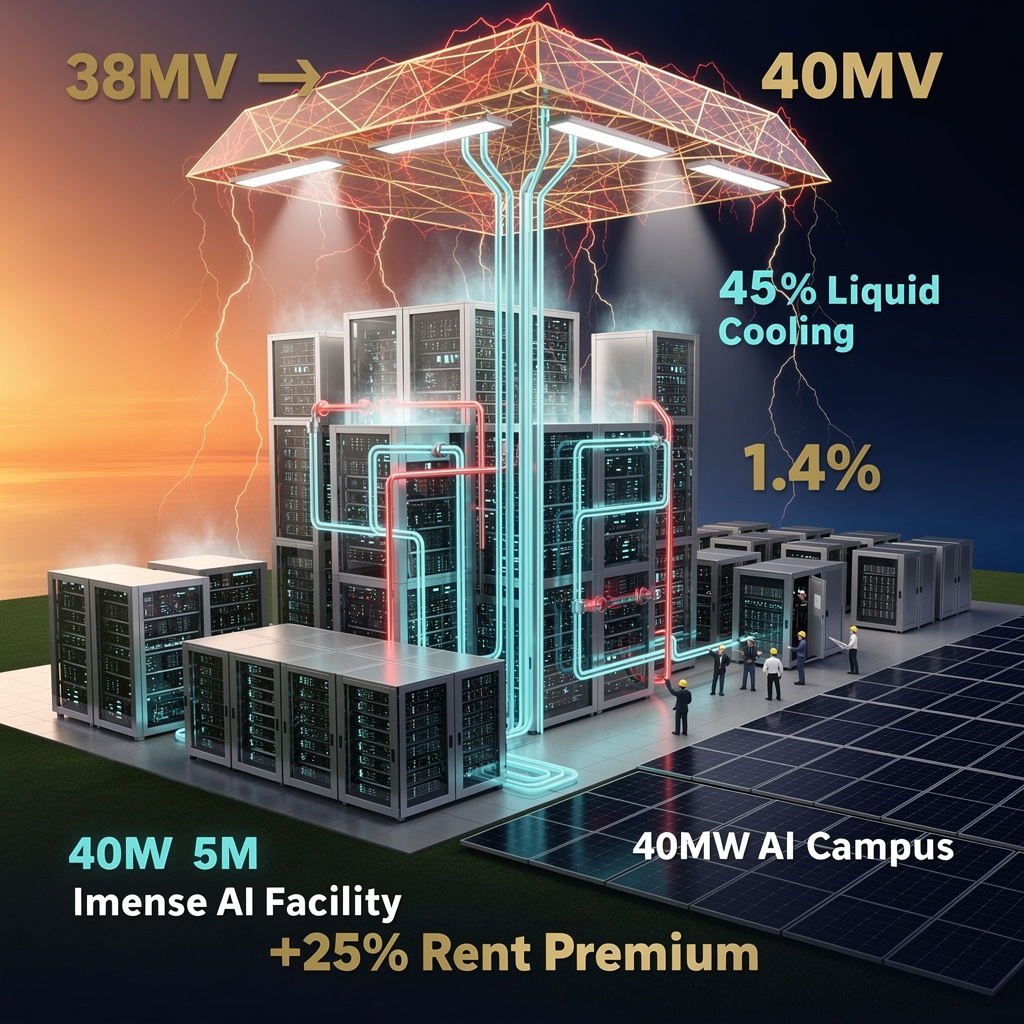

- AFCOM report reveals U.S. data center average size now 38 MW, with 72% of operators expecting AI to drive capacity growth and 36% deploying liquid cooling

- Huawei launches industry-first 51.2T liquid-cooled fixed switch CloudEngine XH9230-128DQ-LC at MWC Barcelona 2026, enabling AI-driven data center networks

- Intel appoints Dr. Craig Barratt as new board chair to scale U.S. R&D and manufacturing post-Gelsinger exit

🏭 AI Data Centers Surge to 38 MW Avg. Size—Power Grids Strain Across U.S. Markets

38 MW average data center size in 2025—up 19% in one year—equivalent to powering 30,000 homes. 🏭 AI workloads are pushing demand to 20-30x normal levels in hotspots. Cooling systems can’t keep up—40% of operators say current infrastructure is inadequate. Texas now leads AI campus builds—while power queues stretch 4 years. Who bears the cost when your city’s grid buckles? 🤔

The average U.S. data center now weighs in at 38 MW—six MW heavier than last year—because artificial-intelligence racks are packing 69 % more silicon per cabinet and operators refuse to leave vacancy on the table. With only 1.4 % of space unlet nationwide, the industry is building faster, hotter and closer to the edge of the grid than ever before.
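The headline figures can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above (38 MW, a six-MW increase, 30,000 homes); the variable names are illustrative, and the per-home figure is simply what the article's comparison implies, not an independent estimate.

```python
# Figures quoted in the article; the prior-year value is derived from "six MW heavier".
current_avg_mw = 38.0
increase_mw = 6.0
homes_equivalent = 30_000  # the article's comparison, taken at face value

prior_avg_mw = current_avg_mw - increase_mw
growth_pct = increase_mw / prior_avg_mw * 100
kw_per_home = current_avg_mw * 1_000 / homes_equivalent

print(f"Prior-year average: {prior_avg_mw:.0f} MW")
print(f"Year-over-year growth: {growth_pct:.2f} %")   # 18.75 %, i.e. the quoted ~19 %
print(f"Implied average draw per home: {kw_per_home:.2f} kW")
```

The 18.75 % result rounds to the 19 % growth cited in the teaser, so the two figures agree.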

How liquid beats the heat

Direct-liquid cooling (DL-C) lines today snake through 36 % of facilities; another 28 % will add manifolds within 12 months. The gear keeps GPUs below the 35 °C throttle point while cutting fan energy roughly in half, a saving that compounds as rack density climbs toward 70 kW. Dell’Oro values the DL-C market at $8 B by 2030, up 20 % a year, turning coolant into the new rent.
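To see what "$8 B by 2030, up 20 % a year" implies for earlier years, the forecast can be discounted backwards. This is a rough sketch: the 2025 base year is an assumption made here for illustration (the article does not state one), and `implied_size` is a hypothetical helper name.

```python
# Back out the market size implied by a 2030 target and a constant growth rate.
target_market_busd = 8.0   # $8 B in 2030, as quoted
annual_growth = 0.20       # 20 % a year, as quoted
target_year = 2030
base_year = 2025           # ASSUMPTION: not stated in the article

def implied_size(year: int) -> float:
    """Market size in $B for a given year under constant compound growth."""
    return target_market_busd / (1 + annual_growth) ** (target_year - year)

for year in range(base_year, target_year + 1):
    print(year, f"${implied_size(year):.2f} B")
```

Under that assumption the market would sit a little above $3 B in 2025, so the forecast amounts to roughly a 2.5-fold expansion in five years.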

Impacts: what the numbers mean

- Grid load: AI-ready sites can draw 20-30× a standard center, pushing regional demand up 70 % in Northern Virginia and Texas pockets.

- Vacancy: 1.4 % record low forces users to pre-lease space still under construction, locking in 12 % higher rents for 3-10 MW blocks.

- Capital: cloud providers’ 2026 spend hits $710 B, approaching the size of the annual U.S. defense budget.

- Community: four-year grid-connection queues and permit delays trim new-build activity 5.5 %, nudging operators toward 100-acre “greenfield” modules with on-site solar.

Short-term outlook

- 2026-2027: average site size edges to 40 MW; liquid cooling share tops 45 % of new builds; primary markets cross 10 GW supply yet vacancy stays <1.5 %.

- Q4 2028: Texas surpasses Virginia in total MW under roof; on-site generation rises from today’s 24 % to 35 %, trimming peak-grid calls by 1.2 GW nationwide.

Long-term horizon

- 2030-2035: U.S. capacity exceeds 150 GW, with AI campuses claiming one-third of electrons; DL-C and heat-reuse systems become default in any facility >5 MW; half of AI power comes from on-site renewables in Texas, Virginia and California.

The takeaway

Operators who lock in liquid cooling, modular campuses and micro-grids today will collect the rent premiums tomorrow; regions that streamline interconnection will decide where the next gigawatt-scale AI city rises.

🌡️ 51.2 Tbps Switch Launches in Barcelona — Huawei’s Liquid-Cooled Breakthrough Reshapes AI Networking

51.2 Tbps in a single fixed switch: that’s 60% more bandwidth than any air-cooled switch on earth 🌡️ Huawei’s liquid-cooled XH9230 eliminates thermal bottlenecks, slashing energy use by 15% per cabinet. But who pays the upfront cost? Telecoms in Spain, Germany and France are first. Will your data center keep up?

Huawei just poured cold water—literally—on the idea that air-cooled switches can keep up with AI. At MWC Barcelona 2026 the company unveiled the CloudEngine XH9230-128DQ-LC, the first fixed switch to cram 51.2 Tbps (128×400 GbE) behind a 100 % liquid-cooled faceplate. One cabinet now delivers 740×800 GbE ports, doubling last year’s density and cutting fan power by 15 %.

How liquid wins the heat race

Coolant loops hug every optical module, trimming operating temperature by 30 % versus forced-air rivals. The result: 12 % lower end-to-end latency in AI training benchmarks and a projected PUE drop of 0.2–0.3 points, nudging data-center PUE toward 1.2. Eight switches slide into a single CloudGenesis cabinet, so a row that once hosted 4 000 GbE can now field 10 000 GbE without extra square metres.
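The per-switch and per-cabinet capacity follow directly from the port count. The sketch below uses the quoted 128×400 GbE configuration and eight switches per cabinet; it derives aggregate bandwidth only and does not attempt to reproduce the per-cabinet port count quoted earlier. Constant names are illustrative.

```python
# Aggregate capacity from the quoted port configuration.
PORTS_PER_SWITCH = 128
PORT_SPEED_GBPS = 400
SWITCHES_PER_CABINET = 8

switch_tbps = PORTS_PER_SWITCH * PORT_SPEED_GBPS / 1_000
cabinet_tbps = switch_tbps * SWITCHES_PER_CABINET
cabinet_400g_ports = PORTS_PER_SWITCH * SWITCHES_PER_CABINET

print(f"Per switch:  {switch_tbps:.1f} Tbps")
print(f"Per cabinet: {cabinet_tbps:.1f} Tbps across {cabinet_400g_ports} x 400 GbE ports")
```

A fully populated cabinet therefore aggregates a little over 400 Tbps of switching capacity on these figures.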

Impacts at a glance

- Energy: 15 % less power per cabinet → 1 MWh saved each day in a 100-cabinet hall.

- Throughput: 60 % more capacity per chassis than Cisco’s or Arista’s best air-cooled boxes.

- Reliability: dual coolant paths and leak sensors keep the five-year warranty intact.

- Wallet: early adopters in Spain, Germany and France forecast €3 million annual opex reduction per site through avoided fan upgrades and carbon fees.
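The energy bullet can be unpacked to see what baseline it implies. The sketch below takes the quoted 15 % saving and 1 MWh per day across 100 cabinets at face value and works backwards; the implied baseline draw is a derived figure, not one Huawei has published, and it assumes a constant load around the clock.

```python
# Work backwards from the quoted hall-level saving to a per-cabinet baseline.
saving_fraction = 0.15          # 15 % less power per cabinet, as quoted
hall_saving_mwh_per_day = 1.0   # as quoted
cabinets = 100                  # as quoted

# ASSUMPTION: constant load, so daily energy / 24 h gives average power.
saving_kw_per_cabinet = hall_saving_mwh_per_day * 1_000 / 24 / cabinets
implied_baseline_kw = saving_kw_per_cabinet / saving_fraction
annual_saving_mwh = hall_saving_mwh_per_day * 365

print(f"Saving per cabinet:   {saving_kw_per_cabinet:.2f} kW")
print(f"Implied baseline:     {implied_baseline_kw:.2f} kW per cabinet")
print(f"Annual hall saving:   {annual_saving_mwh:.0f} MWh")
```

On these numbers each cabinet would save a bit over 0.4 kW on average, which corresponds to a baseline of under 3 kW per cabinet.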

Short-term splash, long-term wave

- Q2–Q4 2026: 250 units ship, lighting up roughly 12.8 Pbps of aggregate AI-grade fabric capacity (250 × 51.2 Tbps) in Europe and Asia.

- 2027: an IEEE 802.3 working group is expected to embed liquid-cooling specs, making Huawei’s tech a reference.

- 2029: 15 % share of the >50 Tbps switch segment, steering standards for hyperscale networks.

Huawei’s move signals that the next arms race in networking will be fought not in silicon alone, but in plumbing.

🇺🇸 20% Workforce Cut — New Intel Chair Bets $10B on U.S. Fab Surge to Capture 30% Domestic Demand by 2030

20% workforce cut. Yet Intel’s new chair aims to double U.S. chip fab capacity by 2030. 🇺🇸 After 3 board exits and a CEO carousel, Barratt’s Google Fiber-scale discipline is the bet. Will Arizona’s new $10B fab save American semiconductor sovereignty—or just delay the inevitable? — Engineers, does this feel like a comeback or a last stand?

On 3 March 2026 Intel elevated Dr. Craig Barratt—architect of Google Fiber’s city-by-city build-outs—to the board chair once warmed by Frank Yeary. The move is the clearest statement yet that the post-Gelsinger era will be judged by concrete silicon, not slide decks.

How Barratt intends to weld R&D to the fab floor

Barratt’s mandate is to collapse the historic split between Intel’s labs and its plants. The company will now track a single metric: the time it takes a Panther Lake 18A design file to become a wafer out of Chandler, Ariz. Intel projects this cycle can shrink 15 % versus 2024 if design-rule checks, equipment procurement, and yield learning sit under one weekly dashboard reported directly to the chair.

What numbers now matter

- Capacity: 18A output targets 30 000 wafers/month by Q4 2026, enough for ~12 million PC CPUs—roughly the annual fleet of U.S. enterprise laptops.

- Subsidy leverage: Intel will file its next CHIPS Act draw by September; each $1 of federal cost-share unlocks $3 of Intel capex, accelerating the Arizona module labelled “Fab 52.”

- Foundry proof: Two pilot customers are already taping out test chips on the 14A node; converting just one to volume adds up to $400 million annual revenue at mature yield.
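Two of the figures above lend themselves to a quick sanity check. The sketch below shows what the wafer and CPU numbers imply per wafer under two readings (the article does not say whether the ~12 million CPUs are per month or per year of output) and applies the quoted 3:1 capex leverage. The function name `capex_unlocked` and the $1 B example draw are hypothetical.

```python
# Implied CPUs per wafer under two readings of the quoted figures.
wafers_per_month = 30_000
cpus_quoted = 12_000_000

cpus_per_wafer_if_monthly = cpus_quoted / wafers_per_month         # 12 M per month
cpus_per_wafer_if_annual = cpus_quoted / (wafers_per_month * 12)   # 12 M per year

def capex_unlocked(federal_cost_share_busd: float) -> float:
    """Intel capex ($B) unlocked at the quoted $3 per $1 of federal cost-share."""
    return federal_cost_share_busd * 3

print(f"CPUs per wafer (monthly reading): {cpus_per_wafer_if_monthly:.0f}")
print(f"CPUs per wafer (annual reading):  {cpus_per_wafer_if_annual:.1f}")
print(f"Capex from a hypothetical $1 B draw: ${capex_unlocked(1.0):.0f} B")
```

The two readings differ by a factor of twelve, so the capacity claim is only as strong as the time base behind it.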

Where the plan can still fracture

- Execution risk: 20 % headcount was cut in 2025; retaining 3 000 process engineers now hinges on a December retention grant.

- Competitive lag: TSMC’s 2 nm risk line starts in 2027, while 18A is Intel’s answer to TSMC’s 2024 node, an 18-month catch-up race.

- Policy clock: CHIPS disbursements require matched spend by 2028; any slip pushes Intel toward the spin-off option already mapped under Yeary.

Timelines to watch

- Q4 2026: First Panther Lake 18A shipments; 5 % share of U.S. PC refresh → 15 GWh/year displaced imports.

- 2027: Second Arizona fab breaks ground; 8 % domestic capacity share, 1.2 GW peak-grid relief.

- 2029: Dual-fab goal of ≥15 % U.S. share; if met, Intel cuts product-to-market lag to 18 months, matching Samsung.

The takeaway

Barratt’s chairmanship is Intel’s attempt to replace charisma with cadence: every board meeting will read like a construction Gantt chart. If the bricks—and the wafers—arrive on schedule, the company can convert federal incentives into a self-funding U.S. foundry alternative. Miss the milestones, and the same board may auction the fabs to fund its survival.
