47% Storage Density Gain in Data Centers — Europe’s Energy Push vs. Legacy HDDs

TL;DR

- Seagate ships 44TB Mozaic 4+ HAMR drives to hyperscalers, enabling a 47% storage-density gain over 30TB drives

- China releases Origin Pilot, the world’s first open-source quantum computer operating system

- EURO-3C project unveiled with €75M funding to build federated Telco-Edge-Cloud infrastructure across Europe

📊 44TB HAMR Drives Slash Data Center Footprint by 100 sq ft/EB — U.S. Hyperscalers Lead Storage Revolution

47% more storage in the same rack? 🤯 That's like fitting 147 basketballs into a space meant for 100, all while using 0.8 GWh less power per exabyte each year. Seagate's 44TB HAMR drives are shrinking data centers, not just filling them. But as hyperscalers race to cut carbon, will SSDs and photonic storage leave HDDs behind? Data center operators: are you reconfiguring racks for density or clinging to legacy assumptions?

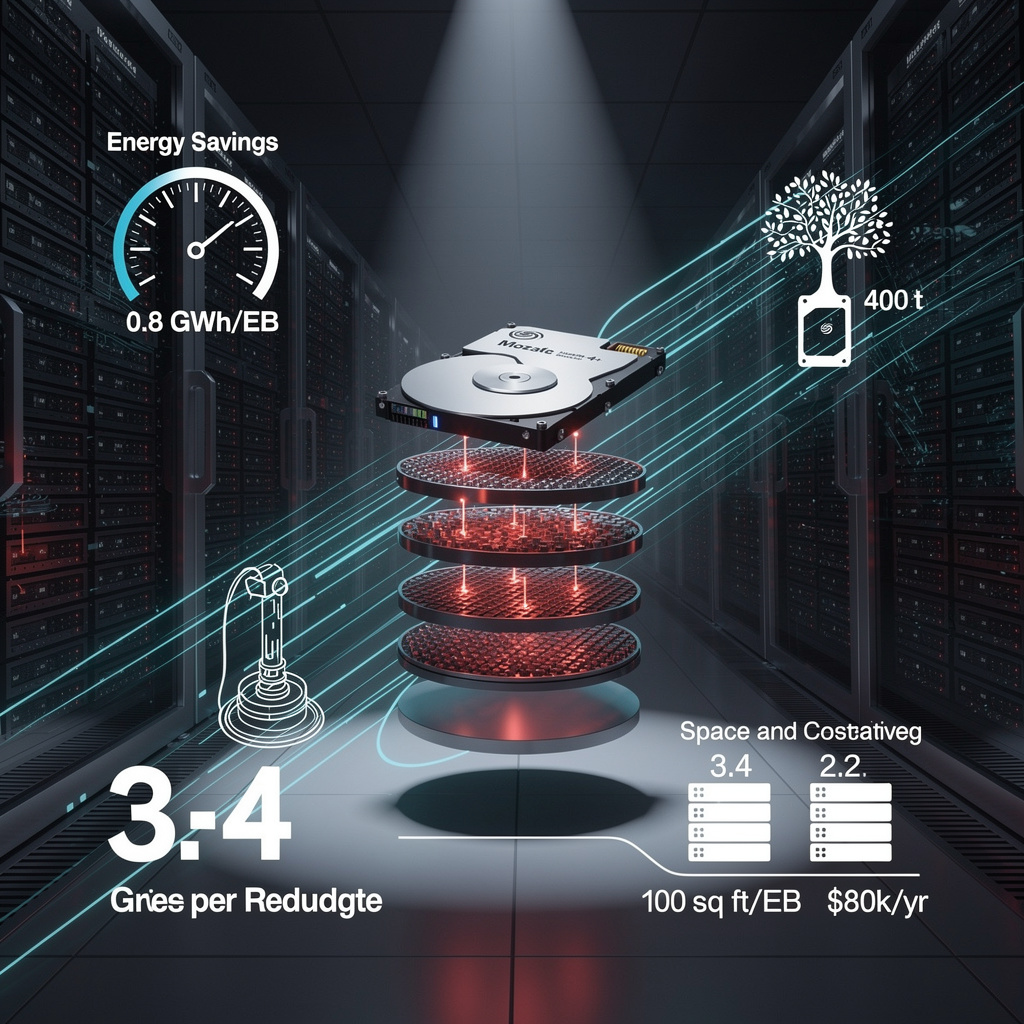

Seagate has begun volume shipments of its 44 TB Mozaic 4+ hard-disk drives to two U.S. Tier-1 hyperscalers, delivering a 47 % density jump over today's 30 TB CMR units. The heat-assisted magnetic recording (HAMR) platform squeezes 4.4 TB onto each of ten platters, keeps the 3.5-inch form factor, and streams at ~300 MB s⁻¹ while cutting power use by 0.8 million kWh (0.8 GWh) a year for every exabyte deployed, enough to power about 75 U.S. homes.

How the gain is engineered

A laser briefly spot-heats the magnetic grains, allowing bits to be written closer together without losing stability. The same 7 200 rpm spindle and CMR track layout keep firmware changes minimal, and the higher capacity cuts the count from about 33 to about 23 drives per petabyte while staying within existing power-distribution units.
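The capacity figures in this section reduce to simple division. The sketch below assumes decimal units (1 PB = 1 000 TB) and counts raw capacity only, ignoring RAID or erasure-coding overhead, which would raise every drive count by the same factor.

```python
# Drive-count arithmetic for the capacities quoted in this section.
# Assumptions: decimal units (1 PB = 1,000 TB) and raw capacity only,
# i.e. no RAID or erasure-coding overhead.
TB_PER_PB = 1_000


def density_gain(new_tb: float, old_tb: float) -> float:
    """Fractional capacity gain per drive slot when swapping old_tb for new_tb drives."""
    return new_tb / old_tb - 1


def drives_per_pb(capacity_tb: float) -> float:
    """Drives needed to hold one petabyte of raw capacity."""
    return TB_PER_PB / capacity_tb


def tb_per_platter(capacity_tb: float, platters: int) -> float:
    """Areal-density proxy: capacity spread evenly across the platters."""
    return capacity_tb / platters


if __name__ == "__main__":
    print(f"44 TB vs 30 TB gain: {density_gain(44, 30):.1%}")
    print(f"44 TB over 10 platters: {tb_per_platter(44, 10):.1f} TB each")
    for cap in (30, 44, 60):
        print(f"{cap} TB drives: {drives_per_pb(cap):.1f} per PB")
```

Run as a script, it reports a 46.7 % gain (rounded to 47 % in the text), 4.4 TB per platter, and roughly 33.3, 22.7 and 16.7 drives per petabyte for 30, 44 and 60 TB drives.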

Impacts at data-center scale

- Space: 100 sq ft saved per EB → frees floor area for GPU or liquid-cooling upgrades

- Energy: 0.8 GWh yr⁻¹ cut per EB → ≈ $80 k annual saving at 10 ¢ kWh⁻¹

- Carbon: 400 t CO₂ avoided per EB using U.S. grid mix → supports 2027 net-zero pledges

- Competition: Western Digital’s 40 TB UltraSMR arrives H2 2026 but offers lower sustained bandwidth
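The energy, cost and carbon bullets all follow from one figure. This sketch assumes the 0.8 GWh saving is annual, as the energy bullet states, and uses the 10 ¢/kWh price from the text; the 0.5 kg CO₂/kWh grid factor is an assumption back-solved from the 400 t figure, not a published U.S. grid value.

```python
# Per-exabyte savings arithmetic for the bullets above.
# Assumptions: 0.8 GWh/yr saved per EB and $0.10/kWh come from the text;
# 0.5 kg CO2/kWh is back-solved from "400 t per EB", not an official grid factor.
KWH_PER_GWH = 1_000_000
KG_PER_TONNE = 1_000


def annual_savings(exabytes: float,
                   gwh_per_eb: float = 0.8,
                   usd_per_kwh: float = 0.10,
                   kg_co2_per_kwh: float = 0.5) -> dict[str, float]:
    """Energy (kWh), cost (USD) and CO2 (tonnes) avoided per year for a fleet size in EB."""
    kwh = exabytes * gwh_per_eb * KWH_PER_GWH
    return {
        "kwh": kwh,
        "usd": kwh * usd_per_kwh,
        "tonnes_co2": kwh * kg_co2_per_kwh / KG_PER_TONNE,
    }


if __name__ == "__main__":
    one_eb = annual_savings(1)
    print(one_eb)  # savings for a single exabyte
    print(f"kWh per home implied by '75 homes': {one_eb['kwh'] / 75:,.0f}")
    print(annual_savings(200))  # fleet size projected in the outlook
```

For one exabyte this gives 800 000 kWh, $80 000 and 400 t of CO₂; the "75 homes" comparison implies roughly 10 700 kWh per home per year, and the ~200 EB projected in the outlook would save about 160 GWh a year if the per-EB figure holds.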

Response gaps to watch

Strength: plug-and-play density gain on proven CMR interfaces.

Weakness: random I/O still lags far behind NVMe SSDs, limiting hot-tier use.

Threat: SSD cost per TB continues to fall 18 % yr⁻¹; by 2028 all-flash archives could undercut 100 TB HDDs on latency-sensitive AI checkpoints.

Mitigation: hyperscalers should reserve the new drives for cold and warm tiers, pair them with RDMA-over-InfiniBand parallel file systems, and lock in five-year power contracts to preserve the 20 % wattage advantage.
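The hot-tier weakness, and the advice to keep the new drives in cold and warm tiers, follows from mechanics: capacity grows, but a 7 200 rpm spindle still serves roughly the same number of random I/Os, so IOPS per terabyte fall. A rough model charges each random read one average seek plus half a revolution; the 8 ms seek time below is an assumed typical value, not a Seagate specification.

```python
# Rough random-I/O model for a single spinning drive.
# Assumption: the 8 ms average seek is illustrative, not a published Mozaic 4+ spec.


def avg_rotational_latency_ms(rpm: float) -> float:
    """On average the head waits half a revolution for the target sector."""
    return 0.5 * 60_000 / rpm


def random_iops(rpm: float, avg_seek_ms: float, transfer_ms: float = 0.0) -> float:
    """Random reads per second when each I/O costs seek + rotational latency + transfer."""
    return 1_000 / (avg_seek_ms + avg_rotational_latency_ms(rpm) + transfer_ms)


def iops_per_tb(capacity_tb: float, rpm: float = 7_200, avg_seek_ms: float = 8.0) -> float:
    """Same spindle, more capacity: the drive's random IOPS spread over more terabytes."""
    return random_iops(rpm, avg_seek_ms) / capacity_tb


if __name__ == "__main__":
    print(f"rotational latency at 7,200 rpm: {avg_rotational_latency_ms(7_200):.2f} ms")
    print(f"random IOPS per drive: {random_iops(7_200, 8.0):.0f}")
    for cap in (30, 44):
        print(f"{cap} TB: {iops_per_tb(cap):.2f} IOPS per TB")
```

Under these assumptions a drive delivers about 82 random IOPS whether it holds 30 or 44 TB, so IOPS per TB drop by roughly a third (about 2.74 to 1.87). That is why the density gain helps bulk tiers far more than hot ones.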

Outlook

- 2026–2027: ~200 EB cumulative Mozaic 4+ deployments, validating savings of 0.8 GWh EB⁻¹ yr⁻¹

- Q4 2028: 60 TB HAMR units ship, cutting the count to ≈17 drives PB⁻¹

- 2030: 100 TB drives push HDD energy below 0.2 kWh TB⁻¹ yr⁻¹, keeping spinning disks competitive against QLC SSDs for exascale archives
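The 2028 threat and the 2030 outlook both hinge on how an 18 % annual fall in SSD $/TB compounds. The sketch assumes the rate stays constant; the starting price is a hypothetical placeholder because the text gives no base figure, so only the ratios are meaningful.

```python
# Compound-decline sketch for the "18 % per year" SSD price trend.
# Assumption: constant annual decline; start prices are hypothetical placeholders.


def projected_price(start: float, annual_decline: float, years: int) -> float:
    """Price after `years` of a constant fractional decline per year."""
    return start * (1 - annual_decline) ** years


def years_to_reach(start: float, target: float, annual_decline: float) -> int:
    """Whole years until the price first falls to `target` or below."""
    if not 0 < annual_decline < 1:
        raise ValueError("annual_decline must be between 0 and 1")
    if target <= 0:
        raise ValueError("target must be positive")
    years, price = 0, start
    while price > target:
        price *= 1 - annual_decline
        years += 1
    return years


if __name__ == "__main__":
    # Relative price index: 1.0 = SSD $/TB in 2026.
    for year in (2028, 2030):
        print(year, f"{projected_price(1.0, 0.18, year - 2026):.2f}")
    print("years to halve:", years_to_reach(1.0, 0.5, 0.18))
```

At a constant 18 % decline, SSD $/TB falls to about 0.67 of its 2026 level by 2028 and about 0.45 by 2030, halving in four years. Whether that undercuts HDDs depends on the HDD cost trend, which the text does not quantify in $/TB.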

The shipment shows that mechanical storage still has runway; if reliability holds near Backblaze’s 1.4 % AFR, HDDs will remain the bulk tier backing every new AI model and climate data set through the decade.
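Backblaze computes annualized failure rate from drive-days, so fleets of mixed age can be compared. The sketch below applies that definition and then asks what a 1.4 % AFR would mean for an exabyte of 44 TB drives; the fleet size is illustrative, and the count ignores spares and redundancy overhead.

```python
import math

# Annualized failure rate (AFR) as Backblaze defines it:
# failures divided by drive-years of service, where drive-years = drive-days / 365.


def annualized_failure_rate(failures: int, drive_days: float) -> float:
    """AFR as a fraction (0.014 == 1.4 %)."""
    if drive_days <= 0:
        raise ValueError("drive_days must be positive")
    return failures / (drive_days / 365)


def expected_failures_per_year(fleet_tb: float, drive_tb: float, afr: float) -> float:
    """Expected drive replacements per year for a fleet sized in raw terabytes."""
    drives = math.ceil(fleet_tb / drive_tb)
    return drives * afr


if __name__ == "__main__":
    # 1,000 drives observed for a full year with 14 failures gives a 1.4 % AFR.
    print(f"AFR: {annualized_failure_rate(14, 1_000 * 365):.1%}")
    # One exabyte (1,000,000 TB) of 44 TB drives at a 1.4 % AFR.
    print(f"failures/yr per EB: {expected_failures_per_year(1_000_000, 44, 0.014):.0f}")
```

That works out to roughly 318 drive swaps a year per exabyte, a little under one a day, which is the operational load the reliability caveat is really about.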

🚀 5,000+ Downloads of Origin Pilot OS — China’s Open-Source Quantum System Challenges Western Cloud Giants

5,000+ downloads in 7 days: an open-source quantum OS just broke proprietary lock-in 🚀 Origin Pilot cuts qubit calibration time by 60%, from 3 hours to 1.2, on China's Wukong processors. It's compatible with IBM & Google's frameworks, but built for China's 2030 quantum ambitions. Who gets left behind if the world splits into open-source and proprietary quantum ecosystems?

Origin Quantum Computing Technology Co. released Origin Pilot on 4 Mar 2026, the first open-source operating system that schedules jobs across superconducting, trapped-ion and neutral-atom qubits. Within a week, 5 000 developers downloaded the MIT-licensed code: 85 % from China and 15 % from the U.S. and EU.

How it works

A hardware-abstraction layer translates IBM Qiskit and Google Cirq calls into vendor-neutral instructions. A parallel execution engine dispatches up to four quantum circuits simultaneously on a 56-qubit Wukong node, cutting wall-clock time by 75 %. An auto-calibration module trims three-hour manual tune-ups to 1.2 h, a 60 % reduction that frees lab staff for algorithm work.
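The text does not say how the parallel engine chooses which circuits share a node. One plausible approach, sketched here as an assumption rather than Origin Pilot's actual algorithm, is first-fit-decreasing bin packing: place circuits onto the 56-qubit node in batches whose combined width fits and which hold at most four circuits, then charge each batch the runtime of its slowest member. Real schedulers must also respect qubit connectivity and crosstalk, which this sketch ignores.

```python
from dataclasses import dataclass

# Hypothetical batching scheduler; names and policy are illustrative only.


@dataclass(frozen=True)
class Circuit:
    name: str
    qubits: int
    runtime_s: float


def pack_batches(circuits: list[Circuit], node_qubits: int = 56,
                 max_parallel: int = 4) -> list[list[Circuit]]:
    """First-fit decreasing: widest circuits first, each into the first batch with room."""
    batches: list[list[Circuit]] = []
    for circ in sorted(circuits, key=lambda c: c.qubits, reverse=True):
        if circ.qubits > node_qubits:
            raise ValueError(f"{circ.name} needs {circ.qubits} qubits; node has {node_qubits}")
        for batch in batches:
            used = sum(c.qubits for c in batch)
            if len(batch) < max_parallel and used + circ.qubits <= node_qubits:
                batch.append(circ)
                break
        else:  # no existing batch had room, so open a new one
            batches.append([circ])
    return batches


def wall_clock_s(batches: list[list[Circuit]]) -> float:
    """Batches run one after another; circuits within a batch run concurrently."""
    return sum(max(c.runtime_s for c in batch) for batch in batches)


if __name__ == "__main__":
    jobs = [Circuit(f"vqe-{i}", 14, 60.0) for i in range(4)]
    serial = sum(c.runtime_s for c in jobs)
    parallel = wall_clock_s(pack_batches(jobs))
    print(f"serial {serial:.0f} s, batched {parallel:.0f} s, "
          f"saving {1 - parallel / serial:.0%}")
```

Four 14-qubit, 60-second circuits fill the node exactly, so wall-clock time falls from 240 s to 60 s, the 75 % reduction quoted above; wider or uneven circuits pack less tightly and save less.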

Impacts

- Research throughput: 4× speed-up on 56-qubit tests → faster iteration for quantum-chemistry and crypto workloads.

- Vendor lock-in: open licence removes per-seat fees → estimated $3 million annual savings for a 20-user academic cluster.

- Talent pipeline: GitHub forks already exceed 1 200 → signals a growing pool of quantum-literate programmers.

- Geopolitics: China-first codebase → potential divergence from U.S. cloud stacks and future export-control friction.

Response & gaps

Strength: policy-backed funding through 2030 guarantees sustained commits.

Weakness: only Origin drivers are production-grade; Rigetti or IonQ plug-ins are missing.

Opportunity: standardising job-description APIs could influence ISO quantum extensions by 2028.

Threat: Amazon Braket’s 30 000 active users create inertia that a new stack must overcome.
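The weakness and the opportunity are two sides of one interface: a vendor-neutral job description that any registered driver can accept. The sketch below is hypothetical (none of these names come from Origin Pilot's codebase) and shows how a registry turns missing Rigetti or IonQ drivers into an explicit, checkable gap rather than a silent failure.

```python
from dataclasses import dataclass
from typing import Protocol

# Hypothetical vendor-neutral job format and driver registry; all names are illustrative.


@dataclass(frozen=True)
class JobDescription:
    circuit_qasm: str   # circuit as OpenQASM text
    shots: int
    technology: str     # "superconducting", "trapped-ion" or "neutral-atom"


class Driver(Protocol):
    def submit(self, job: JobDescription) -> str: ...


class EchoDriver:
    """Stand-in for a real hardware driver: returns a fake job ID."""

    def __init__(self, vendor: str) -> None:
        self.vendor = vendor
        self._count = 0

    def submit(self, job: JobDescription) -> str:
        self._count += 1
        return f"{self.vendor}-{job.technology}-{self._count}"


class DriverRegistry:
    def __init__(self) -> None:
        self._drivers: dict[tuple[str, str], Driver] = {}

    def register(self, vendor: str, technology: str, driver: Driver) -> None:
        self._drivers[(vendor, technology)] = driver

    def has_driver(self, vendor: str, technology: str) -> bool:
        return (vendor, technology) in self._drivers

    def dispatch(self, job: JobDescription, vendor: str) -> str:
        key = (vendor, job.technology)
        if key not in self._drivers:
            raise LookupError(f"no driver for {vendor} {job.technology} hardware")
        return self._drivers[key].submit(job)


if __name__ == "__main__":
    registry = DriverRegistry()
    registry.register("origin", "superconducting", EchoDriver("origin"))
    bell = JobDescription('OPENQASM 2.0; include "qelib1.inc"; qreg q[2]; h q[0]; cx q[0],q[1];',
                          shots=1_000, technology="superconducting")
    print(registry.dispatch(bell, "origin"))
    print("IonQ trapped-ion driver present:", registry.has_driver("ionq", "trapped-ion"))
```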

Timelines

- Q4 2026: university roll-outs expected to lift the user base to 20 000; 15 extra hardware drivers pledged by the community.

- 2027: integration target with Fujitsu’s 1 000-qubit system; calibration engine must prove sub-0.1 % error scaling.

- 2029: if drivers mature, Pilot could control China’s planned exascale quantum processor, managing 1 000+ qubits.

- 2030: API set may become baseline for quantum job languages, tilting software influence eastward.

Origin Pilot turns quantum computers from bespoke appliances into programmable devices. Whether global labs adopt the stack will decide if open-source becomes the lingua franca of tomorrow’s qubit clouds—or if the field splits into competing software spheres.

🚀 EU Launches €75M Edge Network: <5ms Latency Across 50+ Cities — But Can It Replace US and Chinese Tech?

€75M EU project is building a 50+ city AI edge network with lower latency than your home broadband 🚀 It aims to cut latency to <5ms for surgery, factories and holograms by running AI right on 5G towers. But 30% of Europe's telcos still use US/Chinese gear: will they switch before 2030? EU citizens: who should control your data's next millisecond?

Barcelona, 3 Mar 2026—The European Commission opened Mobile World Congress by launching EURO-3C, a €75 million programme that binds 90 telecom, cloud and research actors into a single federated edge-cloud fabric stretching across all 27 member states. The move operationalises last year’s Digital Networks Act and targets a 30 % cut in latency-critical traffic now routed through non-EU hyperscalers.

How does a continent build its own edge?

Each participating operator (Deutsche Telekom, Telefónica and Eutelsat among them) will retrofit 5G/6G macro sites with sealed micro-data-halls (≈8 racks, <5 kW each) housing NPU/ASIC blades and 100 Gb/s RDMA NICs. An SDN-controlled backbone, already 60 % deployed on Europe's research networks, will federate the sites via open APIs (ETSI MEC, OpenRAN) so that an AI inference job can hop from Madrid to Stockholm without leaving EU legal jurisdiction. A zero-trust security layer, audited by BEREC, encrypts every workload enclave.
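The jurisdiction rule can be expressed as a placement filter: only sites in EU member states with free accelerator capacity and a round-trip time inside the latency budget are eligible, and the lowest-latency one wins. The site names, RTTs and capacities below are hypothetical; the 5 ms budget comes from the programme's stated target.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical jurisdiction-aware placement for a federated edge inference job.
EU_MEMBER_STATES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
})


@dataclass(frozen=True)
class EdgeSite:
    name: str
    country: str         # ISO 3166-1 alpha-2 code
    rtt_ms: float        # measured round trip from the requesting device
    free_npu_slots: int


def place_job(sites: list[EdgeSite], latency_budget_ms: float = 5.0) -> Optional[EdgeSite]:
    """Pick the lowest-RTT EU site with spare capacity inside the budget, else None."""
    eligible = [
        s for s in sites
        if s.country in EU_MEMBER_STATES
        and s.free_npu_slots > 0
        and s.rtt_ms <= latency_budget_ms
    ]
    return min(eligible, key=lambda s: s.rtt_ms, default=None)


if __name__ == "__main__":
    sites = [
        EdgeSite("zurich-1", "CH", 1.8, 4),     # fastest, but outside the EU
        EdgeSite("madrid-2", "ES", 3.1, 2),
        EdgeSite("stockholm-1", "SE", 4.6, 0),  # no free accelerators
    ]
    chosen = place_job(sites)
    print(chosen.name if chosen else "no eligible site")
```

Zürich is skipped despite the lowest RTT because Switzerland is not an EU member state, and Stockholm is skipped for lack of capacity, so the job lands in Madrid. Tightening the budget to 3 ms would leave no eligible site, and the caller would need a fallback policy.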

Why it matters—three pressure points

Sovereignty: 52 % of current RAN-AI trials still rely on code resident in US or Asian clouds → EURO-3C keeps the data, models and metadata inside GDPR boundaries.

Latency: Factory-floor computer-vision pilots in Stuttgart show 4 ms round-trip on the pilot edge, one-third the 12 ms achieved via the nearest hyperscaler zone.

Market leverage: Europe’s €1 trillion digital sector invested €57.9 bn in 2023, down 2 % year-on-year; pooled edge capacity could unlock an extra €6–8 bn in industrial automation contracts by 2030, according to Commission estimates.

Gaps still on the map

- Hardware: no EU fab yet produces 5 nm AI accelerators; STMicro and ASML-led consortia must deliver first European NPU tape-outs by Q2 2027.

- Governance: 90 members risk “design-by-committee” delays → a slim steering board (9 seats) will gate each €10 million tranche against OpenRAN conformance scores.

- Funding: private match of €200 m is verbal only; release of the final 25 % EU grant is tied to signed operator capex contracts.

Timelines to watch

- 2026-2027: 10–15 pilot nodes (<5 ms latency) in Berlin, Paris, Milan, Madrid, Warsaw, Stockholm; first federated AI inference API certified.

- 2028-2029: 50+ live sites, 1.2 GW cumulative edge compute, 12 % of EU 5G core traffic processed locally.

- 2030-2032: <150 km inter-site spacing, sub-millisecond 6G holographic links, >30 % reduction in cross-border cloud hand-offs.
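Inter-site spacing and latency targets are linked by the speed of light in fiber. The sketch assumes a group index of about 1.468 for standard single-mode fiber (roughly 4.9 µs per km one way) and straight-line routes; real paths are longer, and switching, queuing and inference time come on top, so these figures are lower bounds.

```python
import math

# Propagation-only latency bounds for fiber links.
C_KM_PER_MS = 299.792458   # speed of light in vacuum, km per millisecond
FIBER_INDEX = 1.468        # assumed group index of standard single-mode fiber


def fiber_rtt_ms(distance_km: float, route_factor: float = 1.0) -> float:
    """Round-trip propagation delay; route_factor > 1 models fiber longer than the straight line."""
    return 2 * distance_km * route_factor * FIBER_INDEX / C_KM_PER_MS


def max_reach_km(rtt_budget_ms: float, route_factor: float = 1.0) -> float:
    """Farthest straight-line distance whose propagation alone fits the RTT budget."""
    return rtt_budget_ms * C_KM_PER_MS / (2 * FIBER_INDEX * route_factor)


def worst_case_nearest_site_km(spacing_km: float) -> float:
    """On a square grid of sites, the point farthest from any site is the cell centre."""
    return spacing_km / math.sqrt(2)


if __name__ == "__main__":
    d = worst_case_nearest_site_km(150)
    print(f"150 km grid: worst case {d:.0f} km, RTT {fiber_rtt_ms(d):.2f} ms")
    print(f"5 ms RTT budget: reach {max_reach_km(5.0):.0f} km")
```

With 150 km spacing, a user at the worst-case point is about 106 km from a site, or roughly 1 ms of round-trip propagation before any processing, so sub-millisecond round trips would need denser sites or shorter paths unless the target refers to one-way delay. A 5 ms round trip caps straight-line reach at roughly 510 km.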

If the milestones hold, EURO-3C will hard-wire digital sovereignty into Europe’s network physics—turning policy rhetoric into racks, silicon and latency charts that competitors overseas cannot unplug.

In Other News

- Apple's MacBook Neo (Model A3404) appears in an EU regulatory filing with a $599–$799 price point, USB-C, MagSafe and Wi-Fi 7

- ACME Greentech secures 450 MW of solar PPAs in India (300 MW and 150 MW contracts), advancing Maharashtra's 19.1 GW solar capacity
