700M-Parameter Robot Brain Hits 73.5% Safety Accuracy—Siemens and Volvo Deploy Amid Regulatory Pressure

TL;DR

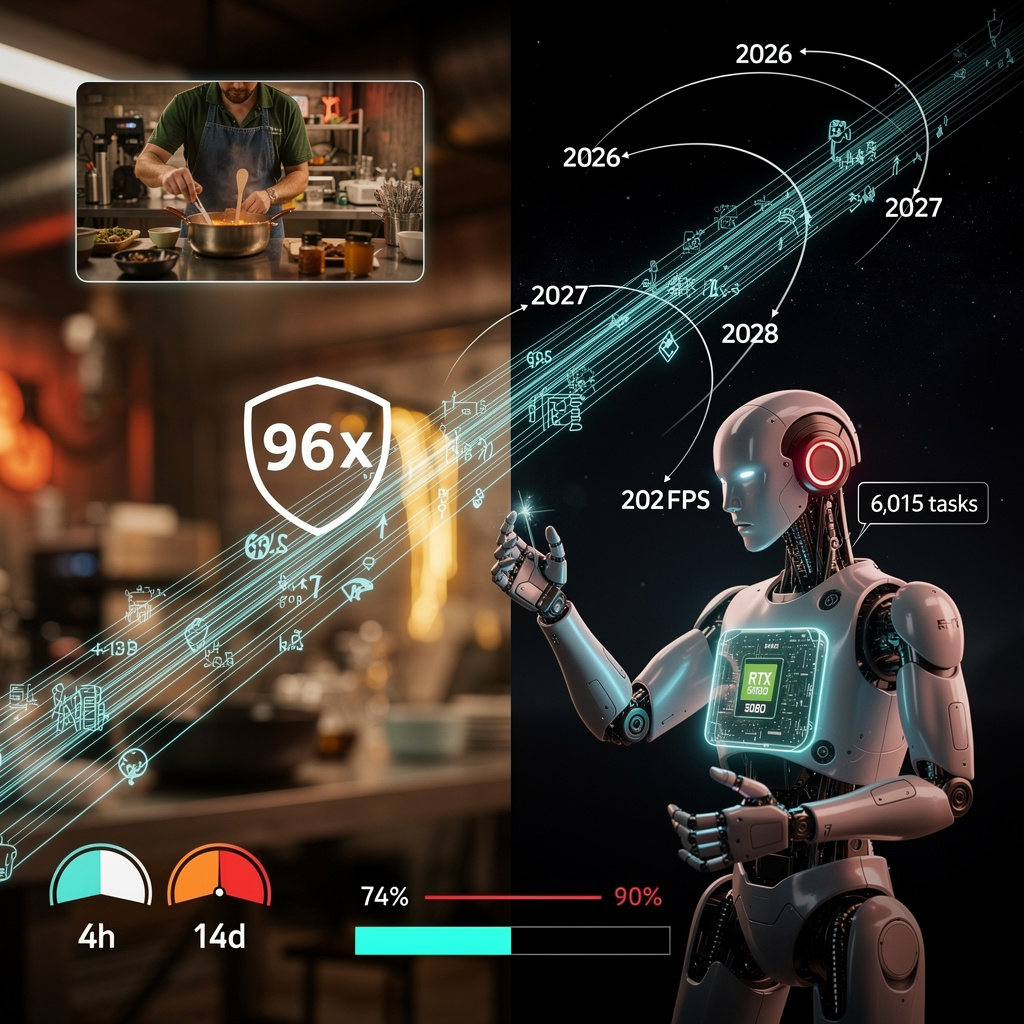

- NVIDIA unveils DreamDojo, a 44,711-hour egocentric video dataset enabling 6,015 unique robotic tasks with a 700M-parameter spatiotemporal Transformer

- Qualcomm launches Snapdragon Wear Elite with an NPU that runs 2B-parameter models on-device, 30% longer battery life, and a 50% charge in 10 minutes for always-on personal AI wearables

🤖 NVIDIA’s DreamDojo-HV: 700M-Parameter Robot Brain Trained on 44K Hours of Human Video — But Safety Gaps Remain

700M-parameter robot brain trained on 44,711 hours of human video 🤖🎬—6,015 unique tasks learned, 96× more skill density than any prior dataset. NVIDIA’s DreamDojo-HV makes robots understand physics 63% better… but still only 73.5% accurate in real-world safety tests. Manufacturers like Siemens & Volvo are already integrating it—will your factory’s robots be ready when regulations demand >90% physics certainty?

On 9 February, NVIDIA released DreamDojo-HV, a free 44,711-hour egocentric video library paired with a 700-million-parameter spatiotemporal Transformer. The bundle distils human hand and eye motion into “proxy actions,” letting humanoids such as GR-1 and AgiBot reproduce 6,015 distinct physical tasks without a single kinesthetic demo. The result: 96× more skill-dense training data than any prior robot set, pushing real-world deployment timelines forward by years.

How it works

A latent-action encoder compresses pixel changes between frames into 64-dimensional vectors. After pre-training on the full video corpus, the model fine-tunes on only ~10,000 robot-specific trajectories to graft arm, gripper and camera geometry onto the previously human-centric representations. Inference on a single RTX 5090 controller runs at 10.8 FPS—fast enough for closed-loop planning on the shop floor.
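To make the encoder's input and output shapes concrete, here is a minimal Python sketch. It is not NVIDIA's learned encoder: `toy_latent_action` is a hypothetical stand-in that simply average-pools the per-pixel difference between two grayscale frames into an 8×8 grid, which yields 64 numbers. The frame size and the pooling scheme are assumptions chosen for illustration. The snippet also works out the per-frame time budget implied by 10.8 FPS.

```python
from typing import List

Frame = List[List[float]]  # grayscale image as rows of pixel intensities


def toy_latent_action(prev: Frame, curr: Frame, grid: int = 8) -> List[float]:
    """Average-pool the frame difference into grid*grid values (64 by default).

    Hypothetical stand-in for a learned encoder. Assumes both frames share a
    size whose height and width are divisible by `grid`.
    """
    height, width = len(prev), len(prev[0])
    cell_h, cell_w = height // grid, width // grid
    latent = []
    for gy in range(grid):
        for gx in range(grid):
            total = 0.0
            for y in range(gy * cell_h, (gy + 1) * cell_h):
                for x in range(gx * cell_w, (gx + 1) * cell_w):
                    total += curr[y][x] - prev[y][x]
            latent.append(total / (cell_h * cell_w))
    return latent


def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


# Two 16x16 frames: the second has one brighter pixel in the top-left corner.
prev_frame = [[0.0] * 16 for _ in range(16)]
curr_frame = [[0.0] * 16 for _ in range(16)]
curr_frame[0][0] = 4.0

latent = toy_latent_action(prev_frame, curr_frame)
budget = frame_budget_ms(10.8)
print(len(latent), latent[0], round(budget, 1))
```

At 10.8 FPS the planner has about 92.6 ms per frame, so any perception, planning and actuation steps in the loop would have to fit inside that window.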

Why manufacturers should watch

- Cost: open-source license eliminates paid data-collection cycles that typically absorb 18–24 months and $2–3 million per new task family.

- Cycle time: pilot plants using DreamDojo-derived policies cut task-teaching from 14 days to 4 hours, according to early Siemens trials.

- Safety: physics-correctness tops out at 73.5%, still below the 90% threshold many insurers require for unsupervised cobots.

Where gaps remain

- Domain bias: 83% of clips come from US kitchens, labs and offices; outdoor, low-light and agricultural scenes make up <5%.

- Compute barrier: full training consumed 64 H100 GPU-years—about $1.8 million in cloud rentals at today’s spot prices.

- Certification lag: no ISO pathway yet accepts latent-action world models as evidence of fail-safe behaviour.
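The compute figure above can be sanity-checked with a few lines of arithmetic. The sketch below takes the article's 64 GPU-years and $1.8 million at face value and derives the hourly rate they imply; the only assumption added is a 365-day year.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours; ignores leap years

gpu_years = 64              # from the article
total_cost_usd = 1_800_000  # from the article

gpu_hours = gpu_years * HOURS_PER_YEAR
implied_rate = total_cost_usd / gpu_hours  # USD per H100-hour

print(f"{gpu_hours:,} GPU-hours, about ${implied_rate:.2f} per GPU-hour")
```

That works out to 560,640 GPU-hours at roughly $3.21 each, so the quoted total depends heavily on whatever spot rate is available at the time.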

Roadmap

- 2026-Q4: DreamDojo-V2 adds 10,000h of field footage; target physics score ≥80%.

- 2027: Jetson Orin kernels ship, trimming inference to <5W and enabling mobile robots.

- 2028: first ISO 15066-3 annex recognises video-pre-trained models; 12% of new cobot sales are expected to embed DreamDojo policies.

Bottom line

By turning roughly five years’ worth of human video into a generic physics engine, NVIDIA has shifted the bottleneck from data to validation. The plant that masters the safety layer first will inherit a 96× head start in robot versatility, while those waiting for perfect certainty may find the market has already moved on.

🤖 2B-Parameter AI on Wearable SoC: Snapdragon Wear Elite Reshapes Edge Robotics in US and EU

2B-parameter AI on your wrist? 🤯 That’s 19x more brainpower than last year’s smartwatch, with a full LLM running off a 300 mAh battery. Now your watch can run real-time health analysis, handle voice commands, and even guide robotic arms without the cloud. But can it keep up when your cobot needs 24/7 attention? Workers in manufacturing & healthcare are the first to feel the shift. Will your next robot assistant be on your wrist?

Qualcomm’s Snapdragon Wear Elite, introduced at MWC Barcelona on 2 March 2026, packs a 3-nm chip that runs 2-billion-parameter models into a 300-mAh smartwatch package. The Hexagon NPU delivers 10 tokens per second locally, while a 10-minute top-up restores 50 % charge and 30 % longer battery life stretches run-time past the 30-hour mark. Samsung, Google and Motorola have already queued for summer slots, signaling the shift from step-counters to always-on “agentic” health and productivity aides.

How it works

A five-core CPU (1×2.1 GHz + 4×1.95 GHz) posts 5× the single-core speed of the prior W5+ Gen 2, and a new GPU renders 1080p at 60 fps, seven-fold the old ceiling. The Hexagon NPU ingests up to 3 trillion parameters per second, enough to run compressed large-language models without cloud help. Micro-power Wi-Fi 6, Bluetooth 6, UWB, 5G RedCap and narrow-band satellite keep data paths open even outside macro-cell coverage.
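To see why compression matters for a 2-billion-parameter model on a watch, the following sketch estimates the storage needed for the weights alone at a few bit widths. The bit widths (16, 8, 4) are assumptions for illustration, as is the simplification that a dense model reads every weight once per generated token; the article does not state which quantization the chip uses.

```python
def weight_footprint_gb(params: float, bits_per_weight: int) -> float:
    """Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


PARAMS = 2e9            # 2 billion parameters, from the article
TOKENS_PER_SECOND = 10  # local generation speed, from the article

# Bit widths are illustrative assumptions, not published specifications.
footprints = {bits: weight_footprint_gb(PARAMS, bits) for bits in (16, 8, 4)}

for bits, gb in footprints.items():
    # Assumes every weight is read once per generated token (dense model).
    traffic = gb * TOKENS_PER_SECOND
    print(f"{bits}-bit: {gb:.1f} GB of weights, ~{traffic:.0f} GB/s of weight reads")
```

Under these assumptions, going from 16-bit to 4-bit weights shrinks the model from 4 GB to 1 GB and cuts the memory traffic at 10 tokens per second by the same factor.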

What changes, by the numbers

- Battery life: 30 % gain → wearables can stream heart-rate, SpO₂ and audio 24 h without nightly recharge.

- Charge speed: 50 % in 10 min → a coffee break restores roughly half a day of AI duty.

- Model size: 2 B parameters on wrist → cloud-free speech, vision and summarization, cutting round-trip latency from 150 ms to <20 ms.
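These figures can be turned into a rough power and runtime budget. The sketch below takes the 300 mAh capacity and 30-hour runtime quoted earlier at face value; the 3.85 V nominal cell voltage and the linear-drain simplification are assumptions, not figures from Qualcomm.

```python
BATTERY_MAH = 300     # from the article
RUNTIME_HOURS = 30    # "past the 30-hour mark", treated here as a lower bound
NOMINAL_VOLTS = 3.85  # assumption: typical Li-ion cell voltage

# Average draw the whole watch can afford over a full discharge.
avg_current_ma = BATTERY_MAH / RUNTIME_HOURS
avg_power_mw = avg_current_ma * NOMINAL_VOLTS

# Runtime restored by a 10-minute, 50 % top-up, assuming linear drain.
hours_from_half_charge = 0.5 * RUNTIME_HOURS

# Latency improvement from 150 ms round trips to the 20 ms upper bound.
latency_ratio = 150 / 20

print(f"average draw: {avg_current_ma:.0f} mA (~{avg_power_mw:.1f} mW)")
print(f"50 % charge: about {hours_from_half_charge:.0f} h of runtime")
print(f"latency: at least {latency_ratio:.1f}x lower")
```

On these numbers the entire device averages about 10 mA, a 10-minute top-up buys roughly 15 hours, and on-device inference trims round-trip latency by a factor of at least 7.5.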

Short, medium, long view

- Mid-2026: Galaxy Watch 9 and Pixel Watch 3 ship with on-device coaching and call summarization.

- Q4 2026: Motorola’s pin-style “Maxwell” and two cobot hand-controllers adopt the chip; ROS-2 nodes expose Hexagon APIs.

- 2028: Sub-3-nm variant lands in sub-5-g micro-drones, running 2 B-parameter visual SLAM without offload.

- 2029-30: Open Robotics Initiative certifies “Wearable-AI-Edge” safety profiles for hospital-assistive bots.

Strengths versus sticking points

Strengths

- Largest on-device model capacity yet in milliwatt class.

- 3-nm efficiency keeps skin temperature <38 °C under sustained AI load.

- Multi-mode connectivity gives robots resilient links in mines, ships and rural clinics.

Weaknesses

- 300–600 mAh ceiling still forces duty-cycling of heavy vision pipelines.

- Proprietary Hexagon stack slows porting of open-source robot models.

- Medical-device certification path for arrhythmia or fall-detection AI remains undefined.

Bottom line

By wedding 2-billion-parameter cognition to wrist-top power budgets, Qualcomm turns watches into low-latency human-robot relays. Expect them to issue voice commands to factory cobots, feed micro-drones with visual waypoints and certify health data for clinical robots—provided regulators and developers finish the software and safety glue before the 2028 micro-robot wave arrives.
