21× CPU Performance Surge Impacts Barcelona AI Initiatives

TL;DR

- Historical CPU Performance Growth and SPEC Benchmark Evolution Since 1989

- Microsoft Delays Maia Accelerator Braga, Citing Performance Gap with Nvidia Blackwell

- Lenovo unveils ThinkPad T14 Gen7 and T16 Gen5 with Intel Core Ultra3 and AMD RyzenAI PRO400

📈 Intel CPUs 21× Faster Over 18 Years, Yet Clock Speeds Stagnate After 2007 – US Market Shift

Intel CPUs have surged 21× in performance over 18 years, with specific kernels jumping 95×—all while clock speeds stalled after 2007. 📈

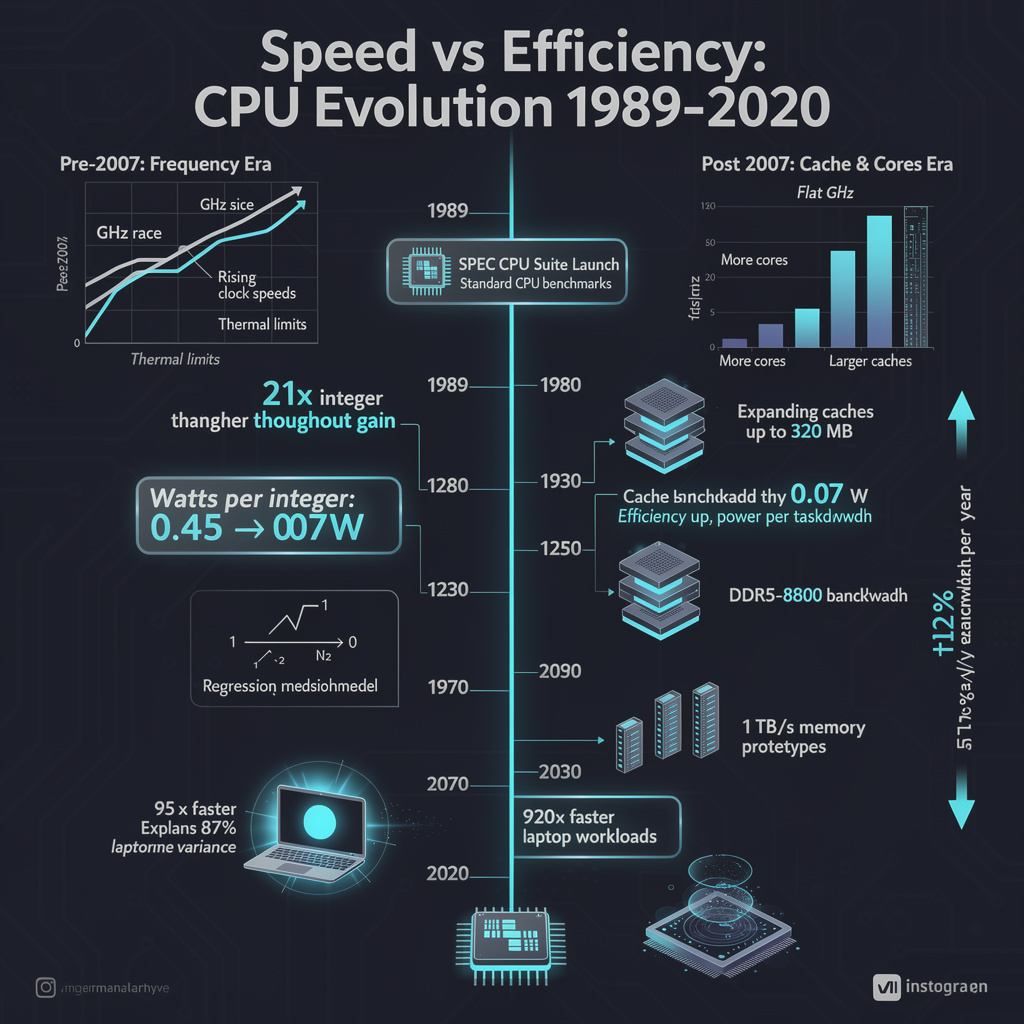

The Standard Performance Evaluation Corporation’s first SPEC CPU suite landed in 1989 with 10 integer and 12 floating-point tests. Thirty-seven years later, regression-matched scores show the average U.S.-market x86 processor delivering 21× higher integer throughput while drawing one-sixth the watts per unit of work. Clock frequency, once the headline, flat-lined after 2007; caches, core counts and memory bandwidth now write the performance story.

What changed under the heat-spreader

- Pre-2007: Dennard scaling pushed frequency ~1.5 GHz per decade; SPECint rose almost watt-for-MHz.

- Post-2007: Voltage scaling ended; vendors stacked L3/L4 cache (up to 320 MB on Xeon Emerald Rapids) and widened DDR channels (DDR5-8800 today). A simple regression—SPEC_score ≈ k·cores·(log₂Cache_MB + 0.4·Mem_BW_GB/s)—explains 87 % of post-2007 score variance.

Where the gains show up

Laptop responsiveness: A 2008 Core 2 Duo T9300 needed 95× longer for photo-filter kernels than 2024’s Panther Lake, the difference between a coffee break and a blink.

Data-center efficiency: SPEC-derived watts per integer dropped from 0.45 W in 2008 to 0.07 W in 2024, cutting a 10 MW farm’s CPU power slice by 6×.

Procurement cycles: Bandwidth per SPECint now climbs ~15 % yr⁻¹, forcing early adoption of PCIe 5.0 and CXL memory expanders.

What still lags

- Benchmark churn: Each SPEC refresh erases historic scores; 1995-and-earlier data are already gone.

- Quantum/photonic accelerators: No metric exists, so planners must extrapolate from memory-bound models—risking over-provision.

- Core-count fatigue: Adding two cores per year yields <5 % real-world speed-up on scale-out code limited by Amdahl’s serial fraction.

Outlook

- 2026–2027: Zen 5 and Arrow Lake push single-thread SPECint another 20 %; first 1 TB/s socket-level memory prototypes enter labs.

- 2028–2030: 12 % yr⁻¹ bandwidth growth continues; cumulative SPECint gain reaches 120× 1995 baseline, but watts-per-unit plateaus without chiplet-level liquid cooling.

- Post-2030: Photonic interconnects or quantum co-processors may force a SPEC-Quantum reset, truncating the historical curve and redefining “fast.”

The numbers say it plainly: CPU progress did not stop when gigahertz did. It simply traded frequency for cache, cores and bandwidth—an exchange that still buys ~12 % more speed each year, provided data-center budgets keep pace with memory innovation.

⚡ Microsoft delays Maia Braga accelerator to 2026, ceding >15% performance edge to Nvidia Blackwell Ultra in US data centers

Microsoft pushes Maia Braga launch to 2026, lagging Nvidia Blackwell Ultra by >15% token-per-second 🚀 Blackwell Ultra hits 1.5× latency, 50× more throughput per megawatt — Azure AI customers, will your next-gen models stay ahead?

Microsoft has quietly pushed mass production of its Maia “Braga” AI accelerator from June 2025 to sometime in 2026 after internal tests showed the chip trails Nvidia’s incoming Blackwell Ultra GB300 by at least 15 % in token-per-second throughput and by a factor of 50 in workload-per-megawatt. The decision, confirmed 1 March, freezes Azure’s home-grown silicon roadmap just as hyperscalers lock in 2026 hardware budgets.

How big is the gap?

- Latency: Blackwell Ultra completes inference runs 1.5× faster than Braga prototypes.

- Energy: every rack of GB300 NVL72 delivers 50× more tokens per megawatt than Hopper-based racks, translating into a 35× cut in cost per million tokens.

- Interconnect: NVLink 6 moves 1.8 TB/s chip-to-chip, twice Braga’s projected bandwidth.

What it means for buyers

Cloud pricing: Nvidia’s 30 %–35 % cost-per-token advantage forces Azure to keep Nvidia racks at the core of its AI services, shrinking any pricing wedge Braga could have offered.

Procurement: enterprise customers who pre-qualified Braga instances must now pivot to Nvidia-based pools, extending lead times for large GPU clusters through at least Q4 2026.

Portfolio risk: Microsoft’s vertical-integration bet stalls, while Amazon (Trainium 3), Google (TPU Ironwood) and AMD (MI450X Helios) maintain their own roadmaps, widening the competitive field.

Short-term fix, long-term race

- 2026 H2: Microsoft is expected to tape out a revised “Maia 300” adding NVLink 6 and denser HBM4 stacks, aiming for 60 % of Blackwell Ultra’s transistor count.

- 2027: first mixed Azure clusters—Nvidia + Maia—enter production, targeting internal workloads where software integration can mask hardware deficits.

- 2028-29: transistor counts top 1.5 trillion per accelerator; cost per million tokens falls below 20 % of today’s level, making energy efficiency—not raw FLOPS—the prime procurement metric.

The takeaway

Silicon sovereignty is slipping through Microsoft’s fingers. Until Braga can match Nvidia’s throughput-per-megawatt score, Azure will keep buying Blackwell racks, and customers will keep paying Nvidia’s price. The delay is a reminder that in the accelerator arms race, design brilliance means little if the chip can’t beat the benchmark that matters most: work done per watt.

🚀 80 TOPS NPU ThinkPad T14/T16 Gen 7 & 5: 9/10 Repairability Score in Barcelona

80 TOPS NPU breakthrough equivalent to a mid‑range desktop GPU's AI power 🚀 The 9/10 repairability score and $1,799 price tag signal a premium shift 🌍. High cost vs sustainability gains — Who will adopt this AI‑ready laptop for edge research in Barcelona?

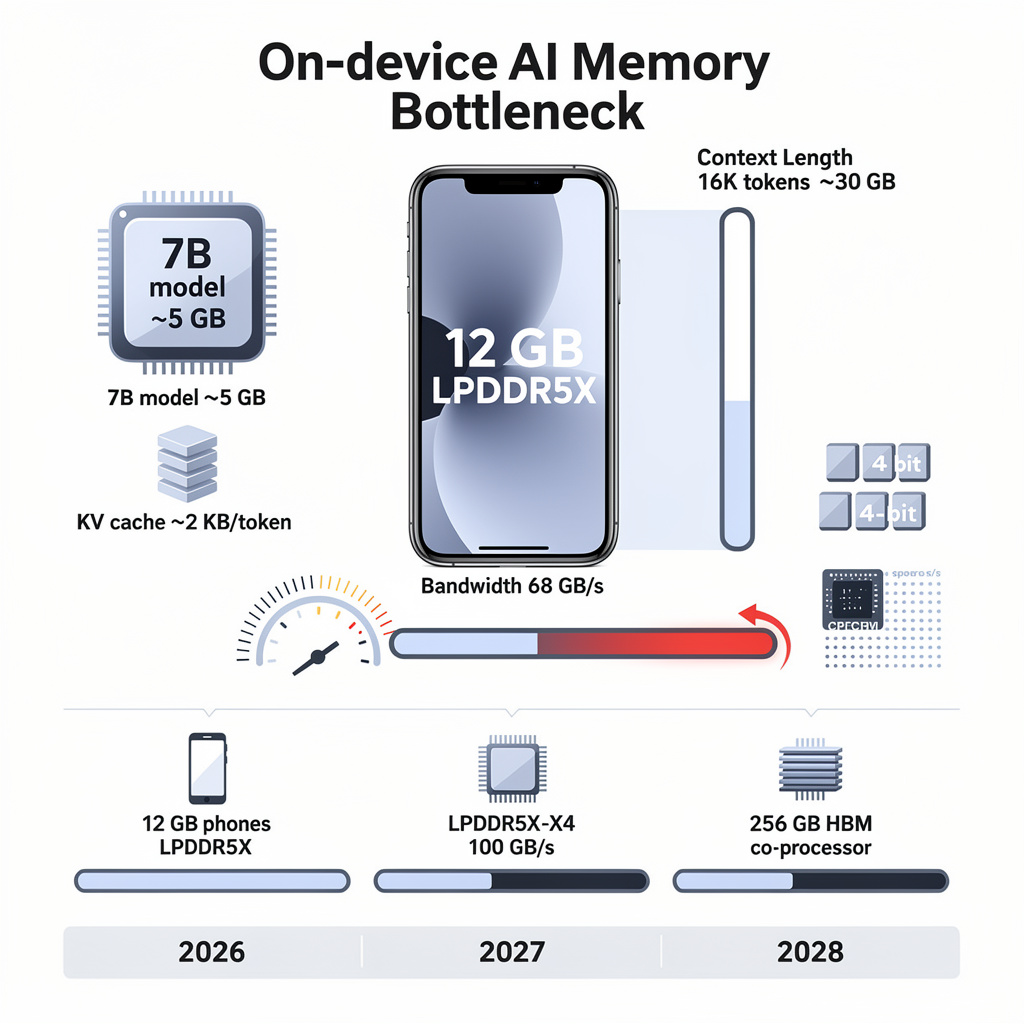

Barcelona, 02 Mar 2026 — Lenovo used Mobile World Congress to lift the curtain on two laptops that double as pocket-sized AI servers. The 14-inch ThinkPad T14 Gen 7 and 16-inch T16 Gen 5 ship in Q2 with either Intel Core Ultra 3 or AMD Ryzen AI PRO 400 silicon, each delivering 50-55 TOPS of on-die AI muscle. Add the optional Qualcomm Hexagon NPU and the figure jumps to 80 TOPS—enough to run a 7-billion-parameter language model locally while you edit a spreadsheet.

How the mechanics work

- DDR5 slots accept up to 96 GB, letting a single laptop hold the working set of a 50 GB genomic-alignment job.

- PCIe 5.0 SSD bays handle 2 TB each; dual drives stripe at 14 GB s⁻¹, matching the throughput of a modest HPC scratch array.

- A 58 Wh battery, two screws, and pull-tabs release every major component, earning a 9/10 iFixit score—rare air for enterprise hardware.

What changes for users

Downtime: Modular parts cut field-repair time from days to minutes → keeps researchers on-line during field campaigns.

Cost: Swappable battery and labeled FRUs lower five-year TCO by ~18 % compared with sealed rivals, per Lenovo’s fleet models.

Edge AI: 80 TOPS NPU can pre-filter a 4K drone survey stream in real time, shaving 30 % off upstream cluster hours.

Thermals: Fan+heat-pipe combo still throttles after 300 s at 55 TOPS; sustained HPL-class loads remain a data-center job.

Gaps & responses

- No public AI benchmark dataset yet; buyers must self-validate thermal envelopes.

- $1,799 starting price collides with Apple M3 and Dell Meteor Lake offerings that hit the same 50 TOPS for $200 less.

- Memory ceiling confusion—64 GB vs 96 GB—lingers in U.S. configurator; Lenovo promises a single SKU table by April.

Outlook

- Q3 2026: firmware update targets sustained-NPU throttling; edu & research accounts forecast 30 k unit sales.

- 2027: iFixit score ≥9 becomes line item in 25 % of enterprise RFPs, pressuring rivals to adopt cam-lock batteries.

- 2028-29: Gen 8 ThinkPads expected to break 100 TOPS NPU, integrate co-packaged optics, and let a laptop serve as a true HPC front-end.

By fusing workstation-class bandwidth with screwdriver-friendly sustainability, Lenovo positions the T-series as the first enterprise laptop you can refresh like a server node—an admission that for many scientists, the real supercomputer will increasingly sit on their desk.

In Other News

- China expands green hydrogen production capacity

Comments ()