AI Data Centers: 12% of US Power by 2028—Tech Giants Absorb $11.6B to Shield Households

TL;DR

- U.S. AI firms Anthropic, Microsoft, and OpenAI commit to covering data center electricity cost increases amid a 6.3% year-over-year rise in national electricity prices

- Tesla rolls out Grok AI system to Model Y and Model 3 vehicles with Hardware 3 and 4, marking first consumer AI integration in EVs

- Salesforce deploys AI-powered MIPS platform to automate 95,000 Hyperforce migrations

⚡ 12% Power Grab: AI Giants Pledge $11.6bn to Shield Households from Soaring Electricity Costs

AI data centers will eat 12% of US electricity by 2028—up from 4.4% today. That's like adding 50 GW, yet Anthropic, Microsoft & OpenAI just pledged to swallow $11.6bn in rate hikes rather than pass them to your bill. A 25% household spike? Blocked. But with $1tn in commitments and grid bottlenecks everywhere, can they actually build fast enough? — Is your state ready for the AI power surge?

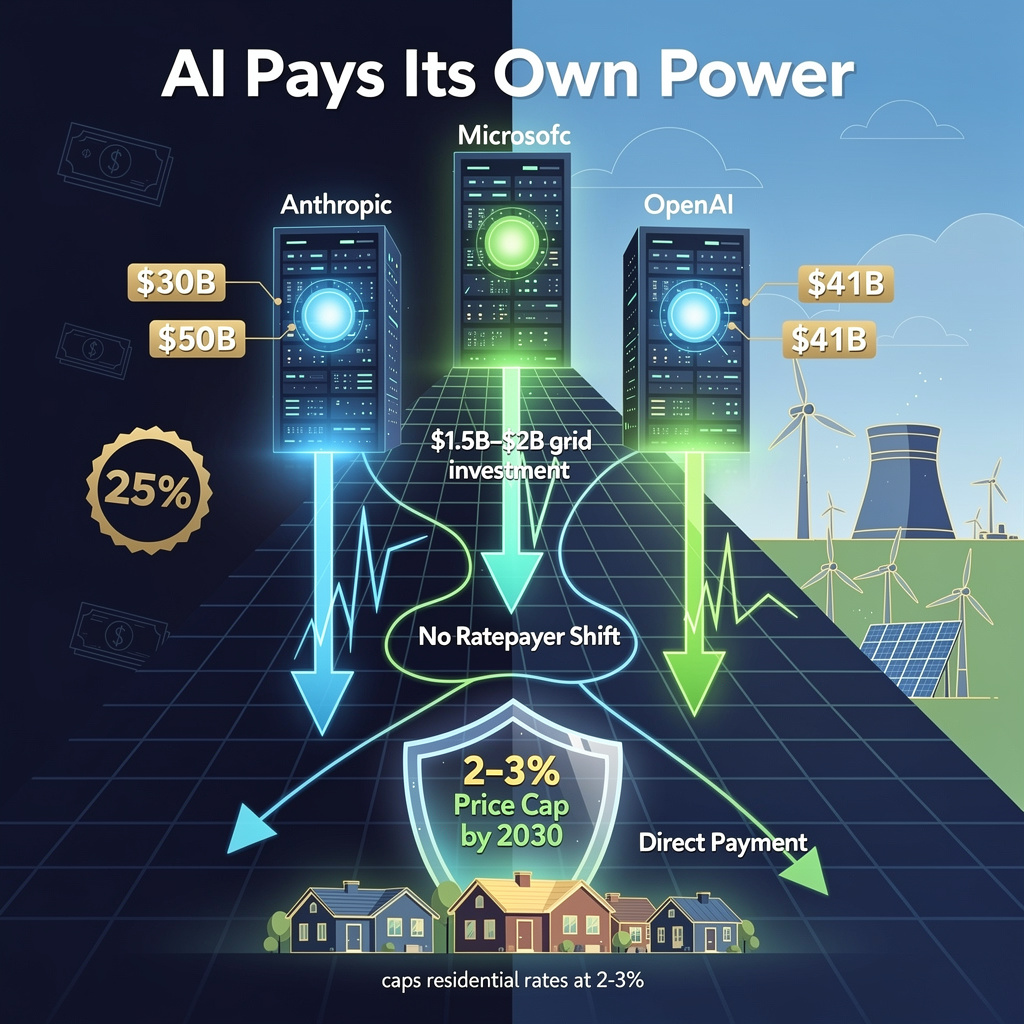

Three major U.S. AI firms have committed to absorbing their own data center electricity costs, marking a structural shift in how the industry addresses its ballooning energy footprint. Anthropic, Microsoft, and OpenAI have each pledged to internalize power expenses rather than pass them to ratepayers—a response to national electricity prices climbing 6.3% year-over-year and mounting political pressure following President Trump's February 26 State of the Union address.

How the commitments operate

Anthropic's pledge, announced February 11, covers 100% of electricity-price increases tied to its new data center construction and fully finances the associated grid-interconnection upgrades. The company targets at least 10 gigawatts of dedicated AI capacity, implying potential capital outlays of $1.5–$2 billion for grid infrastructure alone. Microsoft, under a January policy it reaffirmed on February 26, blocks any residential pass-through of data center costs and absorbs operational electricity expenses as corporate operating expenditure (OPEX). OpenAI committed on January 26 to paying all of its U.S. data center electricity bills directly, without external subsidy or rate shifting.

Quantified impacts

- Consumer economics: Averts the 25% increase in household electricity bills projected by 2030 if AI demand outstrips existing capacity

- Grid reliability: Dedicated generation and upgrade funding reduces localized overload risk

- Regulatory positioning: Early compliance accelerates permitting timelines and reduces litigation exposure

- Capital pressure: Cumulative firm commitments ($30 billion Anthropic Series G, $50 billion Microsoft data-center plan, $41 billion OpenAI round) strain cash flow against wholesale price spikes reaching 267% over five years in some forecasts

- Supply constraints: 50 GW of required new capacity demands coordination across utilities, renewables developers, and emerging modular nuclear projects

- Policy exposure: Potential rollback of federal incentives could erode cost-internalization feasibility
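As a rough sanity check on the 50 GW figure, the sketch below converts the jump from 4.4% to 12% of U.S. electricity into average and installed power. The 4,000 TWh annual consumption total and the 70% load factor are assumptions chosen for illustration, not figures from the pledges, and total consumption is held constant for simplicity.

```python
HOURS_PER_YEAR = 8760


def added_capacity_gw(total_twh, share_now, share_future, load_factor):
    """Convert a change in consumption share into average and installed GW."""
    added_twh = total_twh * (share_future - share_now)
    avg_gw = added_twh * 1000 / HOURS_PER_YEAR  # TWh per year -> average GW
    installed_gw = avg_gw / load_factor         # capacity needed at that load factor
    return avg_gw, installed_gw


avg_gw, installed_gw = added_capacity_gw(
    total_twh=4000,     # assumed U.S. annual electricity consumption
    share_now=0.044,    # 4.4% share today (from the article)
    share_future=0.12,  # 12% share by 2028 (from the article)
    load_factor=0.70,   # assumed average utilization of new capacity
)
print(f"average {avg_gw:.1f} GW, installed {installed_gw:.1f} GW")
# prints: average 34.7 GW, installed 49.6 GW
```

Under these assumptions the added load averages about 35 GW, which lands near 50 GW of capacity once a 70% load factor is applied. A different consumption baseline or load factor would shift the result.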

Institutional responses and gaps

Federal and state frameworks are crystallizing around these voluntary pledges. The White House is preparing a voluntary agreement that formalizes responsibility for electricity-price spikes. Senators Van Hollen and Hawley introduced the GRID Act on February 12, establishing a "polluter-pays" prohibition on shifting costs to residential ratepayers. New York legislators filed permit-pause legislation requiring grid-upgrade financing for new AI sites. However, no enforcement mechanism currently binds firms to their generation-source commitments, and the 50 GW capacity target depends on supply-chain coordination with no guaranteed timelines.

Timeline and projections

- Q2 2026: Anthropic begins utility contracts for regional grid upgrades

- Q3 2026: Anthropic signs power-purchase agreements for net-new generation

- 2026–2027: Residential rate hikes moderate 0.5–1% below baseline forecasts as firms absorb incremental costs

- 2028–2030: Cumulative private investment exceeding $1 trillion delivers ≥50 GW capacity, capping national electricity price growth near 2–3% annually; AI-related emissions potentially limited to <5% of U.S. electricity-sector emissions despite 12% consumption share

These commitments represent more than public relations positioning. By treating energy infrastructure as a direct cost of doing business rather than an externality, Anthropic, Microsoft, and OpenAI establish a precedent that may outlast current political cycles. The sector's credibility—and household electricity bills—now depends on executing capital deployment at unprecedented speed.

🔥 Tesla Grok Goes On-Device: 7B-Parameter LLM Hits 2.5M Vehicles, HW4 Delivers 200ms Latency

7B parameters in your glovebox. 60% sparsity. 200ms replies. Tesla just stuffed a ChatGPT rival into 2.5M cars—offline. 🔥 HW4 models get 10x the speed, HW3 owners wait for June. First mass-market EV with native LLM, no phone tethering. Your move, Volvo Gemini. — Would you pay $5/month for 'Hey Tesla' that works in tunnels?

Tesla has begun rolling out its Grok AI system to Model Y and Model 3 vehicles, embedding xAI's large language model directly into the infotainment stack of cars equipped with Hardware 3 and Hardware 4. The deployment, which started February 26 in Australia, represents the first native integration of a consumer-grade LLM into a mass-market electric vehicle—no smartphone tethering, no cloud dependency for core functions.

How Grok runs on wheels

The architecture splits across two hardware generations. HW3 vehicles rely on AMD's Ryzen-based MCU-3 delivering roughly 2 trillion operations per second, with Grok compressed to 7 billion parameters through int8 quantization and 60% weight sparsity. HW4 models, built into Model 3s from September 2023 and Model Ys from January 2024, deploy Tesla's custom AI accelerator at ~10 TOPS—enabling 200-300 millisecond response latency versus 0.8-1.2 seconds on older hardware. Core inference stays local; cloud contact is limited to live traffic, weather, and map refreshes.
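To see why int8 quantization and 60% sparsity matter for in-car hardware, the following back-of-the-envelope sketch estimates weight storage for a 7-billion-parameter model. It assumes a simple sparse layout that stores one extra byte of index data per surviving weight. That layout is hypothetical; the source does not describe the actual on-vehicle format.

```python
def weight_footprint_gb(params, bytes_per_weight, sparsity=0.0, index_bytes=0):
    """Estimate weight storage in GB (1 GB = 1e9 bytes)."""
    kept = params * (1.0 - sparsity)  # weights that survive pruning
    return kept * (bytes_per_weight + index_bytes) / 1e9


PARAMS = 7e9  # 7 billion parameters (from the article)

fp16_gb = weight_footprint_gb(PARAMS, bytes_per_weight=2)
int8_gb = weight_footprint_gb(PARAMS, bytes_per_weight=1)
# Assumed sparse format: 1 byte value + 1 byte index per kept weight.
sparse_int8_gb = weight_footprint_gb(
    PARAMS, bytes_per_weight=1, sparsity=0.60, index_bytes=1
)

print(f"fp16: {fp16_gb:.1f} GB")                # fp16: 14.0 GB
print(f"int8: {int8_gb:.1f} GB")                # int8: 7.0 GB
print(f"int8 + 60% sparse: {sparse_int8_gb:.1f} GB")  # int8 + 60% sparse: 5.6 GB
```

The estimate covers weights only. Activations, the key-value cache, and runtime overhead would add to the total, and a more compact index encoding would reduce the sparse figure further.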

What changes for drivers

Privacy: Queries process on-device → eliminates cloud audio retention and third-party data exposure.

Responsiveness: Sub-second answers (HW4) versus smartphone-dependent assistants → reduced cognitive load during driving.

Accessibility: Voice-native control eliminates screen interaction → safer command input at highway speeds.

Fragmentation: Intel Atom-based MCU-2 vehicles remain unsupported → creates a two-tier ownership experience pending retrofit kits.

Deployment trajectory

- February–June 2026: Australia, New Zealand, and nine European markets activate on HW3; ~2.5 million vehicles (12-13% of global fleet) receive compatible software.

- Q3 2026: HW4-exclusive Grok rollout completes for late-2023 Model 3 and early-2024 Model Y builds, unlocking 10-100x throughput gains.

- 2027: Projected subscription tier ($5-10/month) for multimodal extensions; 98% intent-recognition accuracy target via reinforcement learning from real-world usage.

Competitive positioning

Volvo's Google Gemini integration and other OEM assistants remain cloud-centric, introducing latency and connectivity dependencies. Tesla's edge-first approach establishes a technical moat—roughly 1.1% of the fleet currently runs HW4-level inference, but that share compounds with each new vehicle sale. The constraint: MCU-2 owners face exclusion unless Tesla delivers promised retrofit hardware by late 2026.

This rollout signals a shift in vehicle architecture. Cars become persistent AI endpoints, not occasional smart devices. For an industry racing to differentiate software-defined vehicles, Tesla's bet on dedicated neural compute and local inference sets a benchmark that pressures competitors toward similar edge-heavy designs—or leaves them dependent on data plans and distant servers.

🧠 Salesforce MIPS: 95K Orgs, 80% Auto-Approval—AI Migration Engine Sets Audit Standard

95K orgs migrated. 80% auto-approved in 3 seconds. That's 20% faster than human-led intake—yet 1 in 5 still needs human eyes. 🧠 Salesforce's MIPS proves AI can scale cloud migration without losing audit trails. But quarterly model retraining risks blind spots. Are compliance-ready AI pipelines becoming your cloud migration dealbreaker—or just table stakes?

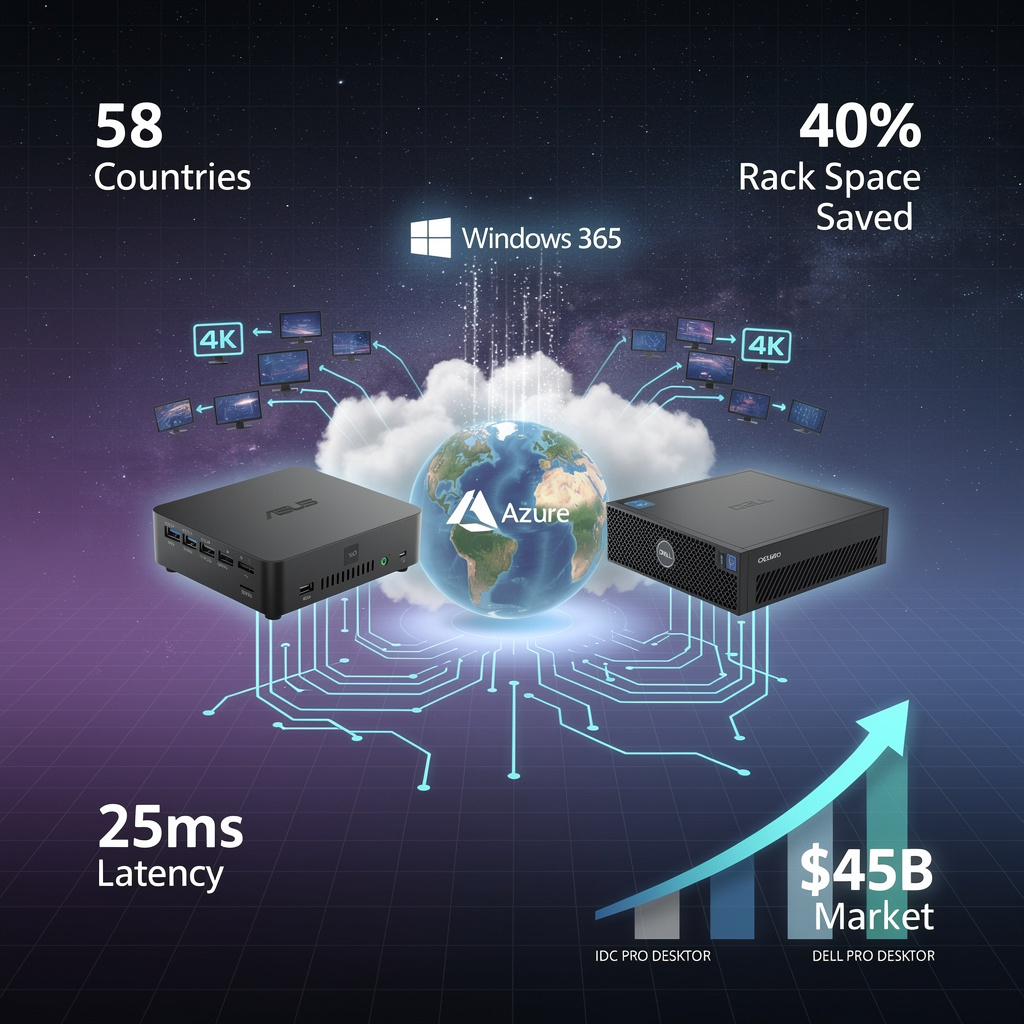

Salesforce has deployed an AI-powered migration engine that automates 80% of customer cloud transitions while preserving strict auditability, signaling a shift in how enterprise infrastructure moves at scale.

How MIPS automates 95,000 migrations

The Migration Intake and Processing Service (MIPS) combines rule-based filters with a supervised-learning classifier trained on 95,000 historical migration outcomes. The system processes 15,000 annual requests with a median decision latency of three seconds, routing 80% to automatic approval and escalating 20% to human specialists when confidence scores fall below 0.75 or data appears incomplete. Every decision—automated or escalated—generates an immutable audit log capturing input vectors, model confidence, and routing instructions.
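The routing rule and audit trail described above can be sketched as follows. This is a minimal illustration, not Salesforce's implementation: the `MigrationRequest` fields, the `route` and `append_audit_record` helpers, and the hash-chained log are all hypothetical. Only the 0.75 confidence threshold and the escalate-on-incomplete-data rule come from the article. Hash chaining makes a log tamper-evident; it stands in here for whatever immutability mechanism MIPS actually uses.

```python
import hashlib
import json
from dataclasses import dataclass

CONFIDENCE_THRESHOLD = 0.75  # from the article


@dataclass
class MigrationRequest:  # hypothetical request shape
    org_id: str
    features: dict
    complete: bool


def route(request, confidence):
    """Escalate when data is incomplete or confidence is below the threshold."""
    if not request.complete or confidence < CONFIDENCE_THRESHOLD:
        return "escalate"
    return "auto_approve"


def _digest(body):
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_audit_record(log, request, confidence, decision):
    """Append a record whose hash covers its content and the previous hash."""
    record = {
        "org_id": request.org_id,
        "inputs": request.features,
        "confidence": confidence,
        "decision": decision,
        "prev_hash": log[-1]["hash"] if log else "0" * 64,
    }
    record["hash"] = _digest(record)  # computed before "hash" is added
    log.append(record)
    return record


def verify_chain(log):
    """Return True if no record has been altered or reordered."""
    prev = "0" * 64
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev or _digest(body) != record["hash"]:
            return False
        prev = record["hash"]
    return True


# Usage with made-up requests and classifier confidences.
log = []
samples = [
    (MigrationRequest("org-1", {"objects": 120}, True), 0.91),
    (MigrationRequest("org-2", {"objects": 8000}, True), 0.62),
    (MigrationRequest("org-3", {}, False), 0.99),
]
for req, conf in samples:
    append_audit_record(log, req, conf, route(req, conf))

decisions = [r["decision"] for r in log]
print(decisions)          # ['auto_approve', 'escalate', 'escalate']
print(verify_chain(log))  # True
```

Editing any stored field afterward, for example changing a logged decision, causes `verify_chain` to return `False`, which is the property an auditor would rely on.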

Continuous data pipelines refresh metadata contracts hourly and run bulk quality checks nightly, ensuring the engine operates on current CRM, ERP, and custom object states. Parallel batch scheduling enables up to 5,000 organization migrations per window, with sandboxed Hyperforce containers isolating failures to individual batches.
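One way to picture batch scheduling with failure isolation is the sketch below. It is an assumption-laden illustration: the 5,000-organization window limit comes from the article, while the batch size, the worker count, the `migrate` callback, and the use of threads in place of sandboxed Hyperforce containers are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

WINDOW_LIMIT = 5000  # maximum organizations per window (from the article)


def make_batches(org_ids, batch_size):
    """Split a list of organization IDs into fixed-size batches."""
    return [org_ids[i:i + batch_size] for i in range(0, len(org_ids), batch_size)]


def run_batch(batch, migrate):
    """Run one batch; an error marks only this batch as failed."""
    try:
        for org_id in batch:
            migrate(org_id)
        return ("ok", batch, None)
    except Exception as exc:  # isolate the failure to this batch
        return ("failed", batch, str(exc))


def run_window(org_ids, migrate, batch_size=500, workers=4):
    """Migrate up to WINDOW_LIMIT organizations in parallel batches."""
    window = org_ids[:WINDOW_LIMIT]  # anything beyond the limit waits
    batches = make_batches(window, batch_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda b: run_batch(b, migrate), batches))


# Usage with a stand-in migration function that fails for one organization.
def fake_migrate(org_id):
    if org_id == "org-1234":
        raise RuntimeError(f"schema conflict in {org_id}")


org_ids = [f"org-{i}" for i in range(6000)]
results = run_window(org_ids, fake_migrate)
failed = [r for r in results if r[0] == "failed"]
print(len(results), len(failed))  # 10 1
```

In this sketch a failure stops the remaining organizations in the same batch but leaves the other nine batches untouched. A production system would also need rollback for the partially processed batch, which is omitted here.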

What the metrics indicate

- Efficiency: 8,000 organizations migrated monthly, with 90%+ completed within schedule

- Accuracy: Continuous dataset refreshes reduced false-negative escalations by ~12% versus prior manual processes

- Governance: Immutable logs enable regulator and customer traceability of every decision to raw inputs

Where operational gaps persist

Specialist bandwidth: 20% escalation still requires dedicated migration staff, creating a persistent human bottleneck.

Model currency: Quarterly retraining cycles risk missing emerging migration patterns between updates.

Regulatory exposure: Automated decision-making faces intensifying scrutiny; any audit log breach would erode customer trust.

How adoption scales over time

- 2026–2027: Throughput rises to 12,000 monthly migrations as data refresh intervals tighten to 30 minutes, pushing annual volume to roughly 144,000 organizations.

- Late 2027: Refined models incorporating synthetic edge-case data target ≤15% escalation rates, redirecting specialist capacity toward consulting engagements.

- 2028–2029: MIPS architecture extends to AppExchange deployments, data-lake ingestions, and multi-cloud orchestration under Salesforce's broader hyper-automation roadmap.

MIPS establishes that enterprise-scale cloud migration can achieve both velocity and verifiability when AI decision engines embed governance mechanisms from inception. The service's auditability model—cryptographically signed logs, third-party compliance validation—positions it as a benchmark competitors will be pressed to match.

In Other News

- CoCounsel Reaches 1 Million Users; Thomson Reuters Acquires AI Legal Assistant for $650M

- Perplexity releases pplX-embed multilingual embedding models with 32x memory reduction via binary quantization and Matryoshka Representation Learning

- Android 17 Beta 2 Released with ACCESS_LOCAL_NETWORK Permission, UWB DL-TDOA, and 3-Hour SMS OTP Delay

- EHC Investment and Supermicro Partner to Deploy $20B Sovereign AI Data Centers in UAE
