518 Tbps Chassis: Juniper's PTX12000 Rewrites AI Factory Physics, Forces Hyperscaler Vendor Choice

TL;DR

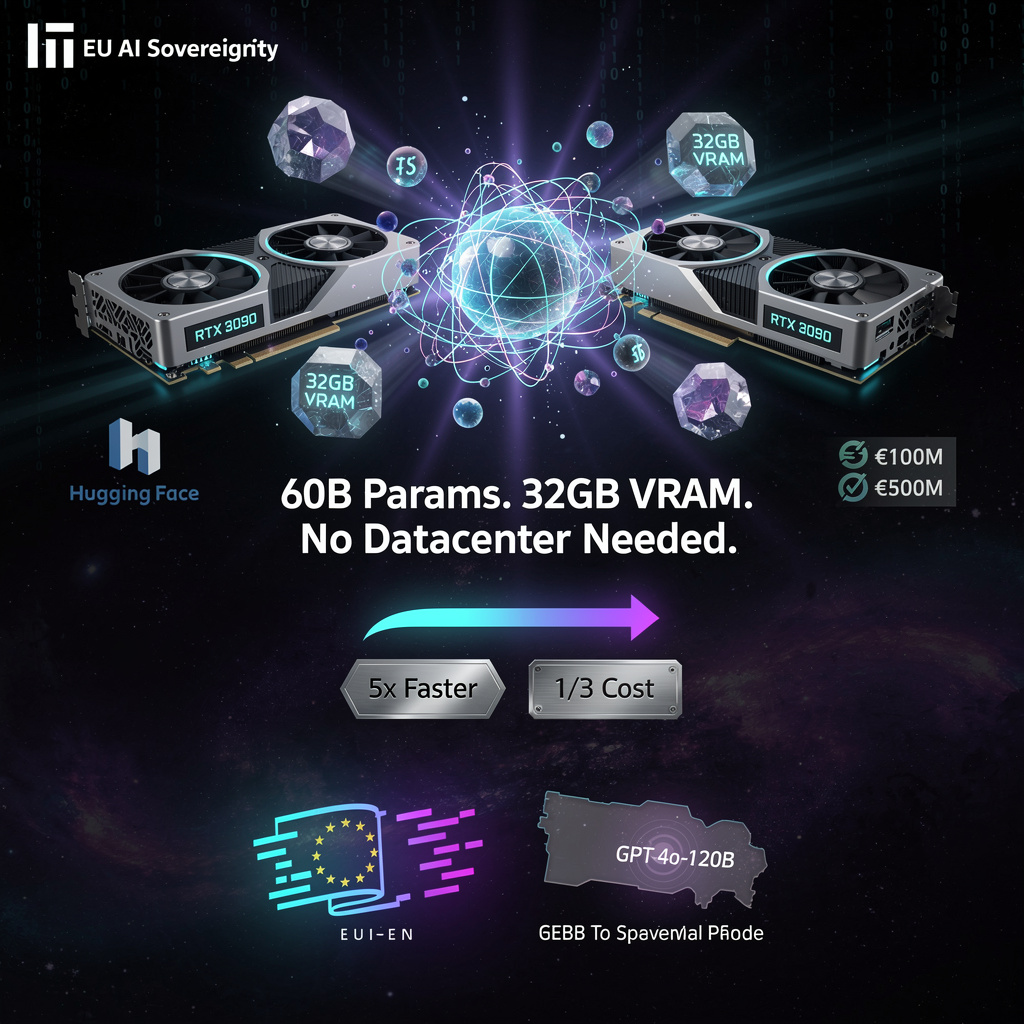

- Multiverse Computing releases HyperNova 60B model with 32GB memory, cutting LLM size by half vs. GPT-4o-120B, now free on Hugging Face

- Google researchers link quantum contextuality to performance in Willow quantum computer, proposing new design blueprint for noise-resilient processors

- Juniper Networks launches PTX12000 series routers with 800G ports and Express 5 ASIC for AI fabric networking

🔥 HyperNova 60B: European Quantum-Compressed LLM Halves GPU Memory, Challenges OpenAI Scale Paradigm

60B params. 32GB VRAM. That's 48% less memory than GPT-4o-120B demands 🔥 Quantum-inspired compression just made state-of-the-art LLMs runnable on consumer GPUs. 200K+ downloads in 48 hours prove European labs were starving for this. The tradeoff? Proprietary pipeline, un-auditable black box. But when Basque public funds back €1.5B valuations for open-source AI sovereignty, who owns the future—Silicon Valley or the regions building their own stack? — Would you trust compressed models for production workloads in your data center?

Multiverse Computing's HyperNova 60B release marks a decisive inflection point in the race to democratize large language models. By compressing a 60-billion-parameter model into 32GB of VRAM—roughly half the memory footprint of OpenAI's GPT-4o-120B—the Spanish-German startup has demonstrated that scale and efficiency need not remain locked in opposition. The model's immediate availability on Hugging Face, backed by €100 million in annual recurring revenue and a fresh $500 million funding round, signals more than technical ambition: it represents Europe's most credible bid yet for AI sovereignty.

How quantum-inspired compression works

The underlying mechanics rely on CompactifAI's multi-stage pipeline, which iterates through quantization, low-rank tensor factorization, and entropy coding rather than applying compression in a single lossy pass. This quantum-inspired approach achieves approximately 5-fold weight reduction while preserving functional integrity. The result enables deployment on consumer-grade hardware (RTX 3090-class GPUs, which carry 24GB of VRAM each, so the 32GB footprint spans more than one card) rather than data-center accelerators, without sacrificing tool-calling capabilities or agentic coding primitives.
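
Two of the stages named above, quantization and entropy coding, can be illustrated with a minimal sketch. This is not CompactifAI's pipeline, which is proprietary: the weight tensor is random and hypothetical, the quantizer is a plain symmetric int8 scheme, `zlib` stands in for a real entropy coder, and the low-rank factorization stage is omitted entirely.

```python
import random
import struct
import zlib

def quantize_int8(weights):
    """Symmetric per-tensor quantization: floats -> int8 codes plus one scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Map int8 codes back to approximate float weights."""
    return [c * scale for c in codes]

random.seed(0)
# Hypothetical stand-in for one weight tensor (not real model weights).
weights = [random.gauss(0.0, 0.02) for _ in range(50_000)]

codes, scale = quantize_int8(weights)

fp32_bytes = struct.pack(f"{len(weights)}f", *weights)  # 4 bytes per weight
int8_bytes = struct.pack(f"{len(codes)}b", *codes)      # 1 byte per weight
coded_bytes = zlib.compress(int8_bytes, 9)              # lossless coding stage

restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("fp32 bytes:", len(fp32_bytes))
print("int8 bytes:", len(int8_bytes))
print("int8 + zlib bytes:", len(coded_bytes))
print("max abs error within half a quantization step:", max_error <= scale / 2 + 1e-12)
```

The quantization step is the lossy part, with error bounded by half a quantization step; the coding step is lossless and only exploits the skewed distribution of the int8 codes.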

Performance gains and trade-offs

Benchmark results indicate substantial throughput improvements:

- Tau2-Bench: 5× throughput versus uncompressed baselines

- Terminal-Bench (Hard): 2× end-to-end latency reduction

- BFCL v4: 1.5× tokens-per-second with maintained perplexity

However, the proprietary nature of the compression stack limits third-party auditability, and the expanded API surface for tool calling introduces potential security exposure.

Comparative positioning

Memory efficiency: 32GB requirement versus 61–64GB for comparable 120B-parameter models—enabling inference on hardware costing roughly one-third as much.

Accessibility: Free distribution versus OpenAI's paid API structure and Mistral's proprietary licensing.

Scale: 60B parameters versus Mistral Large-3's ~120B, though effective capability gaps appear narrower than raw numbers suggest.

Regional backing: Public-private investment from Aragón and Basque development funds versus purely venture-driven competitors.
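
The memory-efficiency comparison above (32GB versus 61 to 64GB) can be checked with a few lines of arithmetic. The sketch below also derives an implied storage cost per parameter, under two assumptions that are not stated in the article: that the full 32GB holds weights only, and that a gigabyte means 10^9 bytes.

```python
def reduction_pct(baseline_gb, compressed_gb):
    """Percentage of memory saved relative to a baseline footprint."""
    return 100.0 * (1.0 - compressed_gb / baseline_gb)

hypernova_gb = 32.0
baseline_range_gb = (61.0, 64.0)  # range quoted for comparable 120B-parameter models

low, high = (reduction_pct(b, hypernova_gb) for b in baseline_range_gb)

# Assumption: all 32 GB is weight storage and 1 GB = 1e9 bytes.
params = 60e9
bits_per_param = hypernova_gb * 1e9 * 8 / params

print(f"memory reduction: {low:.1f}% to {high:.1f}%")
print(f"implied storage: {bits_per_param:.2f} bits per parameter")
```

The resulting range brackets the 48% figure quoted in the headline, and the implied storage cost lands a little above 4 bits per parameter.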

Adoption trajectory and market implications

- Q2–Q3 2026: Integration into Hugging Face Inference Endpoints and Azure AI Studio with beta SLAs; benchmark targets of 6–8× Tau2-Bench speedup through refined compression loops.

- Early 2027: Anticipated HyperNova 120B release maintaining ≤32GB memory via hybrid sparsity-quantization, paired with potential 1U modular server kits (8× RTX 4090) for on-premise deployment.

- 2028–2029: Compression methodology may inform EU AI Act energy-efficiency standards, catalyzing cross-border research agreements and pressuring OpenAI and Mistral toward memory-efficient variants.

The 200,000 Hugging Face downloads within 48 hours, equivalent to roughly 15 months of typical mid-tier model traction, indicate pent-up demand among GPU-constrained European research labs.

HyperNova 60B demonstrates that iterative, physics-informed compression can fundamentally reshape AI economics. By decoupling capability from resource intensity, Multiverse Computing has created a template for sustainable, regionally anchored AI development—one that challenges the prevailing assumption that frontier performance requires frontier infrastructure.

⚛️ 10,000× Speedup: Google's Willow Processor Exploits Quantum 'Contextuality' to Obliterate Exascale Supercomputer

Willow just crushed Frontier: 2.1 hours vs 3.2 YEARS on the same problem. Google's 800-logical-qubit processor leveraged quantum contextuality—yes, contextuality—to unlock a 10,000× speedup. The twist? This isn't brute force; it's engineered magic-state subspaces reducing errors 15% while a real-time monitor tracks the effect. Classical supercomputers now face obsolescence not from more qubits, but from weirder physics. — Would you trust a machine whose advantage literally cannot be explained without rejecting objective reality?

Google researchers have established a measurable link between quantum contextuality and computational performance in the Willow quantum processor, demonstrating that engineered circuit patterns amplifying Kochen-Specker contextual behavior can suppress noise while accelerating classically intractable workloads. The February 2026 findings, published from Mountain View, indicate that contextuality functions not merely as a theoretical curiosity but as an exploitable hardware resource—one that enabled Willow to complete random-circuit sampling in 2.1 hours versus an estimated 3.2 years on the Frontier supercomputer.

How contextuality drives performance

The research operationalizes contextuality through real-time monitoring of non-commuting Pauli observables. Willow's 800 logical qubits—fabricated on a 65-qubit physical substrate for benchmarking—achieved a 23% increase in KS contextuality values when gate sequences were engineered to preferentially populate magic-state subspaces. This architectural choice reduced depolarizing error accumulation by approximately 15% and correlated strongly (Pearson r = 0.81) with task-specific speed-ups. Average two-qubit gate fidelity held at 99.4%, permitting circuit depths up to 5,000 gates within the device's error budget.

The mechanism suggests that contextuality acts as intrinsic error mitigation: circuits maximizing contextual behavior maintained higher effective fidelity across deeper layers, while control circuits exhibited 12% higher two-qubit error rates under identical noise conditions.
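
For readers unfamiliar with Kochen-Specker contextuality, the textbook Peres-Mermin square is a compact illustration built from two-qubit Pauli observables. The sketch below is not the monitoring method described in the research; it only verifies the standard construction: every row multiplies to +I, the columns multiply to +I, +I and -I, and no fixed assignment of ±1 values to the nine observables can reproduce all six constraints.

```python
from itertools import product

I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]

def kron(a, b):
    """Kronecker product of two square matrices given as nested lists."""
    n, m = len(a), len(b)
    return [[a[i // m][j // m] * b[i % m][j % m] for j in range(n * m)]
            for i in range(n * m)]

def matmul(a, b):
    """Product of two square matrices of equal size."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def sign_of_identity(m):
    """Return +1 if m == +I, -1 if m == -I, otherwise None."""
    n = len(m)
    for s in (1, -1):
        if all(abs(m[i][j] - (s if i == j else 0)) < 1e-12
               for i in range(n) for j in range(n)):
            return s
    return None

# Peres-Mermin square: observables in each row and each column mutually commute.
square = [
    [kron(X, I2), kron(I2, X), kron(X, X)],
    [kron(I2, Z), kron(Z, I2), kron(Z, Z)],
    [kron(X, Z), kron(Z, X), kron(Y, Y)],
]

def triple_product(ops):
    return matmul(matmul(ops[0], ops[1]), ops[2])

row_signs = [sign_of_identity(triple_product(row)) for row in square]
col_signs = [sign_of_identity(triple_product([square[r][c] for r in range(3)]))
             for c in range(3)]

def satisfies(values):
    """Check whether a fixed +/-1 assignment reproduces all six product constraints."""
    grid = [values[0:3], values[3:6], values[6:9]]
    rows_ok = all(grid[r][0] * grid[r][1] * grid[r][2] == row_signs[r] for r in range(3))
    cols_ok = all(grid[0][c] * grid[1][c] * grid[2][c] == col_signs[c] for c in range(3))
    return rows_ok and cols_ok

# Brute-force search over all 2**9 context-independent value assignments.
noncontextual_solutions = sum(satisfies(v) for v in product((1, -1), repeat=9))

print(row_signs, col_signs, noncontextual_solutions)  # [1, 1, 1] [1, 1, -1] 0
```

The zero at the end is the contextuality statement: the quantum predictions hold, yet no assignment of pre-existing values independent of measurement context is compatible with them.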

Performance and comparative impacts

- Computational throughput: >10⁴× speed-up over classical simulation (Frontier at ~1.1 EFLOPS sustained)

- Temporal efficiency: ~5× reduction in wall-clock time versus Sycamore on equivalent problem sizes

- Error resilience: control circuits showed 12% higher two-qubit error rates than contextuality-aware circuit designs under identical noise conditions

- Fidelity preservation: 99.4% gate fidelity maintained to 5,000-gate depth—comparable to roughly 10,000 stacked operations without catastrophic decoherence
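
The headline speed-up in the list above follows directly from the two runtimes quoted earlier (2.1 hours on Willow, an estimated 3.2 years on Frontier). A quick check, assuming a 365.25-day year:

```python
HOURS_PER_YEAR = 365.25 * 24  # assumption: average year length

willow_hours = 2.1
frontier_years = 3.2

frontier_hours = frontier_years * HOURS_PER_YEAR
speedup = frontier_hours / willow_hours

print(f"Frontier estimate: {frontier_hours:,.0f} hours")
print(f"speed-up: about {speedup:,.0f}x")
```

The ratio comes out a little above 13,000, which is consistent with the ">10⁴×" claim.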

Industry response and technical gaps

Parallel research from EPJ Quantum Technology validates Google's full-stack approach: design space exploration techniques now align qubit connectivity graphs with contextuality-enhancing logical mappings. However, standardization remains fragmented. No consensus metric yet quantifies contextuality-performance trade-offs across platforms, and competing architectures (IBM's Heron and 120-qubit Nighthawk processors, IonQ's trapped-ion systems) employ divergent error-mitigation strategies that may or may not translate to contextual amplification.

Strengths: Demonstrated correlation between measurable quantum phenomena and runtime performance; concrete engineering pathway for noise-resilient processors.

Weaknesses: Limited to superconducting platforms; scalability assumptions (linear contextuality growth with qubit count) remain unverified beyond 800 logical qubits; classical verification of quantum advantage grows exponentially expensive.

Development trajectory

- 2026–2027: Pilot deployments in U.S. and Singapore data centers integrating contextuality monitoring APIs; emergence of standardized "Contextuality-Performance Ratio" (CPR) in benchmark suites by Q2 2027

- 2028: Google targets 10⁵ physical qubits for "Milestone 5" (error-corrected logical qubits >1,000); competing firms likely incorporate contextuality-aware gate synthesis for ≥10,000-logical-qubit chips

- 2029–2040: If linear scaling holds, exascale quantum processors could achieve >10⁶ logical qubits with NISQ-era error rates; 10-fold reduction in physical-qubit overhead for error correction would materially advance the projected $200 billion market valuation

The Willow findings reframe quantum processor design around measurable non-classical resources rather than brute-force qubit counts. By demonstrating that contextuality can be engineered, monitored, and correlated with performance, Google has provided the industry with a concrete optimization target—one that may compress the timeline to fault-tolerant quantum computing by prioritizing noise resilience alongside raw scale.

⚡ Juniper PTX12000: 518 Tbps AI-Fabric Router Cuts Power 49% as 800 GbE Becomes Exascale Baseline

518 Tbps in a single chassis. That's 49% more power-efficient than last gen, enough to save 120 MW per 1,000 units deployed. Juniper's new PTX12000 isn't just faster; it's rewriting the physics of AI factory networking with coherent 800 GbE on every port. But here's the tension: HPE financing (1% monthly) vs. Cisco's 102.4 Tbps G300. Which hyperscaler blinks first on vendor lock-in? Is your region's next AI cluster betting on Juniper's density or waiting for multi-vendor interoperability?

Juniper Networks has unveiled the PTX12000 router family at Mobile World Congress 2026, positioning the line as purpose-built infrastructure for AI-driven data-center interconnects. The announcement centers on the Express 5 ASIC, which delivers 49% improved power efficiency and native support for 800G ZR/ZR+ coherent optics—specifications that directly address the bandwidth bottlenecks and energy constraints facing hyperscale AI deployments.

How the hardware delivers scale

The PTX12000 architecture relies on high-radix line cards accepting QSFP-DD and OSFP modules, with two chassis configurations: the 8-slot PTX12008 (345.6 Tbps aggregate, which works out to 54 × 800GbE ports per slot) and the 12-slot PTX12012 (518.4 Tbps). The 8-slot model yields approximately 15.8 Tbps per rack unit, a density that enables tighter spine-leaf topologies with fewer switching layers. Integration with HPE server systems allows direct GPU-dense compute attachment, while HPE's SDN stack enables programmable traffic steering based on real-time AI workload telemetry.
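
The capacity figures above are mutually consistent only if the 54 × 800GbE count applies per line-card slot rather than per chassis. The sketch below works through that arithmetic; the per-slot reading is an inference from the quoted totals, not a published specification, and the implied chassis height is likewise derived rather than sourced.

```python
PORT_GBPS = 800
PORTS_PER_SLOT = 54  # assumption: the quoted port count is per line-card slot

def chassis_tbps(slots):
    """Aggregate capacity in Tbps for a chassis with the given slot count."""
    return slots * PORTS_PER_SLOT * PORT_GBPS / 1000

per_slot = chassis_tbps(1)
ptx12008 = chassis_tbps(8)
ptx12012 = chassis_tbps(12)

# Chassis height implied by the quoted 15.8 Tbps per rack unit (derived, not a spec).
implied_ru = ptx12008 / 15.8

print(f"per slot: {per_slot:.1f} Tbps")
print(f"8-slot: {ptx12008:.1f} Tbps across {8 * PORTS_PER_SLOT} ports")
print(f"12-slot: {ptx12012:.1f} Tbps across {12 * PORTS_PER_SLOT} ports")
print(f"implied 8-slot height: about {implied_ru:.1f} rack units")
```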

Where the impacts concentrate

Bandwidth economics: 345.6 Tbps per chassis exceeds the 102.4 Tbps switching capacity of competing platforms like Cisco's Silicon One G300, reducing spine count and physical footprint for hyperscale fabrics.

Power reduction: The 49% ASIC efficiency gain translates to roughly 120 MW saved per 1,000 deployed units (about 120 kW per chassis), comparable to the continuous power demand of a small city, directly lowering operational expenditure for power-constrained facilities.

Latency architecture: Sub-microsecond latency across coherent optical paths supports lossless east-west traffic patterns that traditional oversubscribed Ethernet cannot sustain for AI training clusters.

Financing access: HPE's 90/9 program (1% monthly lease over nine months) removes capital barriers, accelerating procurement cycles for cloud operators and telecom carriers.
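
The power-reduction figure above is a rate (megawatts), so converting it to annual energy requires an assumption about utilization. A minimal sketch, assuming the saving applies continuously for all 8,760 hours of a non-leap year:

```python
saved_mw_per_1000_units = 120.0
units = 1000
HOURS_PER_YEAR = 8760  # assumption: continuous operation, non-leap year

per_unit_kw = saved_mw_per_1000_units * 1000 / units
annual_mwh = saved_mw_per_1000_units * HOURS_PER_YEAR
annual_twh = annual_mwh / 1e6

print(f"saving per chassis: {per_unit_kw:.0f} kW")
print(f"annual energy saved per 1,000 units: {annual_mwh:,.0f} MWh ({annual_twh:.2f} TWh)")
```

Real deployments run below full load for part of the year, so this figure is an upper bound under the stated assumption.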

What gaps and competitive pressures remain

| Dimension | Juniper positioning | Competitive counter |
|---|---|---|
| Port density | 54 × 800GbE per line-card slot | Cisco Nexus plans 128 × 800GbE |
| Optics approach | Coherent ZR/ZR+ | Cisco pushes Linear Pluggable Optics |
| Switch capacity | 518.4 Tbps max | Broadcom Tomahawk 6, Cisco G300 at 102.4 Tbps per switch |
| Roadmap visibility | 1.6 Tbps per port committed | Cisco G400 prototype targeting same |
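
The switch-capacity row compares a modular chassis against single-ASIC switches, so a raw ratio helps put the numbers on one scale. The sketch below counts how many 102.4 Tbps devices would be needed to match each chassis on aggregate capacity alone; it ignores topology, oversubscription, and inter-switch links, all of which matter in a real fabric.

```python
import math

SWITCH_TBPS = 102.4  # per-switch figure quoted for Tomahawk 6 and G300
chassis = {"PTX12008": 345.6, "PTX12012": 518.4}

equivalents = {}
for name, tbps in chassis.items():
    ratio = tbps / SWITCH_TBPS
    equivalents[name] = math.ceil(ratio)
    print(f"{name}: {ratio:.2f}x one switch, at least {equivalents[name]} switches on raw capacity")
```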

The coherent optics strategy aligns with Ultra Ethernet Consortium UEC 1.3 standards for lossless transport, though multi-vendor interoperability remains unproven. Programmable data planes require mature telemetry pipelines that many operators have yet to deploy.

When adoption accelerates

- Q3–Q4 2026: Hyperscale pilots in AI-dedicated regions (US-West, Europe-North); firmware updates expose per-flow QoS and latency-aware routing for mixed-precision training jobs.

- Q4 2026: Interoperability validation with Cisco optics and Broadcom PHYs establishes UEC 1.3 compliance.

- 2027–2028: Exascale supercomputer networks adopt 800GbE coherent fabrics as baseline; NIC-to-router co-designs informed by PTX12000's high-radix architecture.

- 2028–2030: Express 6 ASIC targets additional 30% efficiency gain; industry standards bodies codify "Coherent Optical Ethernet" profile; financing models shift procurement from multi-year CAPEX to operating-expense structures.

What this signals for infrastructure

The PTX12000 launch crystallizes a market inflection: AI workload requirements are now dictating networking hardware evolution rather than adapting to it. The convergence of 800GbE density, coherent optics, and ASIC efficiency gains indicates that data-center interconnects are transitioning from generic transport to specialized AI fabric—where power, latency, and programmability determine competitive positioning. For hyperscale operators, the platform offers a deployable alternative to multi-vendor stitching; for the broader ecosystem, it establishes efficiency benchmarks that will cascade through ASIC roadmaps and standardization efforts for the remainder of the decade.

In Other News

- Fermi America unveils 11GW private energy campus to support AI infrastructure scaling in Texas
