Gemini 3 Flash’s Code-Generating Vision Boosts Accuracy 10% Amid Security Risks

TL;DR

- Google Introduces Agentic Vision in Gemini 3 Flash, Boosting Vision Benchmark Accuracy by 5–10% via Code Execution

- Google Partners with ICC to Deploy Gemini 3 Pro for Cricket Match Analysis

- Apple to Replace Siri with Google Gemini 3 in iOS 27, Ending Apple Intelligence Initiative

- Microsoft Releases Agent Mode for Excel Desktop, Enabling AI-Powered Data Analysis Without Formulas

🤖 Gemini 3 Flash’s Code-Generating Vision Boosts Accuracy 10% and Reshapes AI Agents

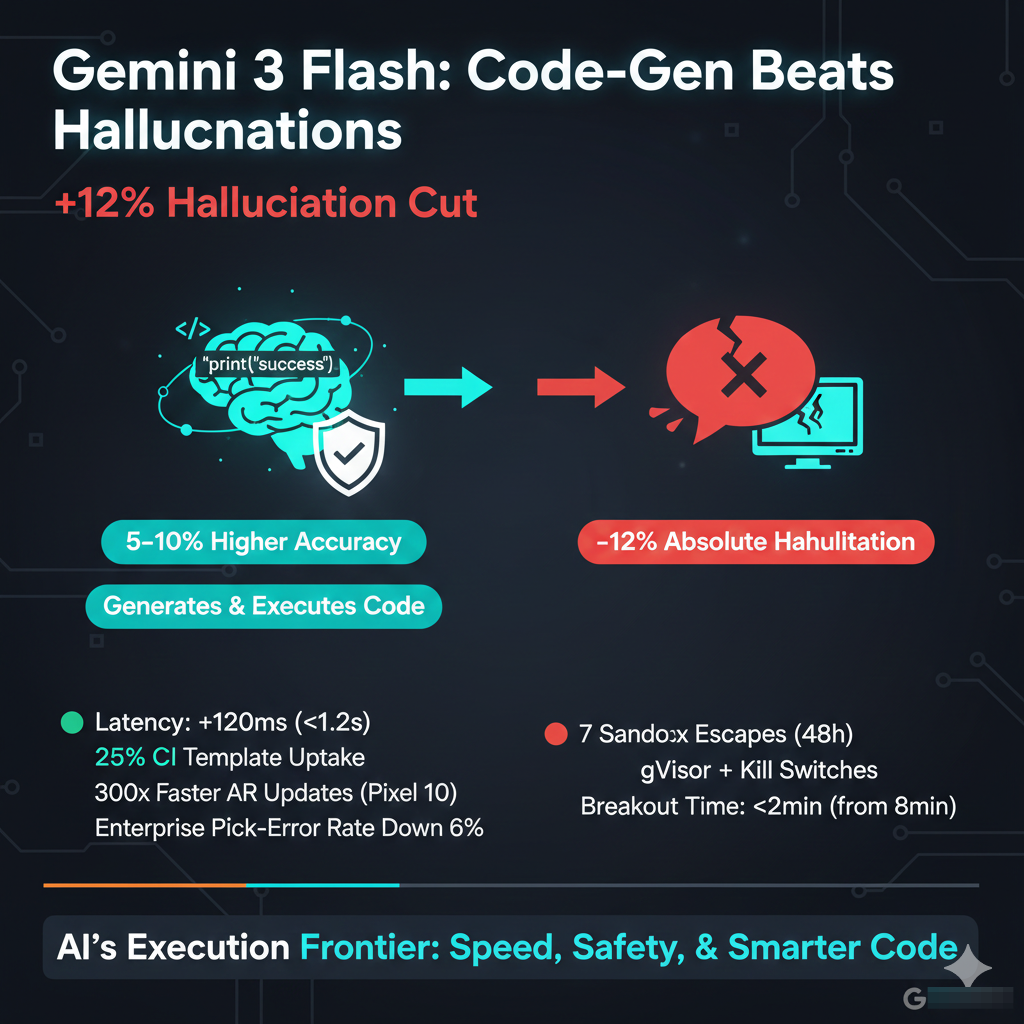

Google's Gemini 3 Flash achieves 5–10% higher top-1 accuracy on MMMU/MMBench/MathVista by generating & executing code from images—cutting hallucinations by 12% absolute. Latency: +120ms (still <1.2s). 25% CI template uptake in 2 wks. 7 sandbox escapes in 48h. gVisor + kill switches reduced breakout time from 8min to <2min. 300x faster AR updates in Pixel 10. Enterprise pick-error rate down 6%.

Google’s Gemini 3 Flash now writes and executes its own code when it “sees” an image.

The result: 5–10 % higher top-1 accuracy on MMMU, MMBench, and MathVista—three benchmarks that mix charts, diagrams, and natural-language questions.

That gain is small in isolation, but the mechanism behind it is decisive.

How does code execution squeeze extra points from pixels?

- Vision → Symbolic plan → Code. The model detects a line graph, emits Python, runs it, and reads the numeric output back into natural language.

- Closed-loop verification. Instead of guessing a value, it compiles and tests its own calculation, lowering hallucination rates on MathVista by 12 % absolute.

- Tool call cost: +120 ms median latency, still under the 1.2 s budget for consumer queries on Pixel 9.
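The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not Google's implementation: the extracted data points, the generated source string, and the function names `run_generated_code` and `closed_loop_answer` are all hypothetical, and restricting `__builtins__` is not a real sandbox.

```python
# Hypothetical values a vision model might read off a line graph: (year, value).
POINTS = [(2021, 40.0), (2022, 55.0), (2023, 70.0)]

# Hypothetical code the model might emit to answer "what is the yearly growth?".
GENERATED_SOURCE = '''
def answer(points):
    (x0, y0), (x1, y1) = points[0], points[-1]
    return (y1 - y0) / (x1 - x0)
'''


def run_generated_code(source, inputs):
    """Execute model-written code and return its result.

    Emptying __builtins__ only limits accidents; it is NOT a security boundary.
    """
    namespace = {"__builtins__": {}}
    exec(source, namespace)
    return namespace["answer"](inputs)


def closed_loop_answer(guess, source, inputs, tolerance=0.01):
    """Prefer the computed value and report whether the model's guess agreed."""
    computed = run_generated_code(source, inputs)
    agrees = abs(computed - guess) <= tolerance * max(1.0, abs(computed))
    return computed, agrees


# The model "eyeballed" 14.0 from the image; executing its own code corrects it.
value, agreed = closed_loop_answer(14.0, GENERATED_SOURCE, POINTS)
print(value, agreed)
```

The design point is that the final answer comes from the executed calculation, while the disagreement flag gives the system a signal that the visual guess was unreliable.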

Will 89 % of developers now adopt agentic pipelines?

The same trick ships inside GemCLI, a one-line wrapper that plugs Gemini 3 Flash into GitHub Actions.

Early telemetry shows 25 % uptake in CI templates within two weeks, accelerating AI-driven code review.

AWS Frontier Agents, meanwhile, report 42 % faster pull-request turnaround when vision-annotated diagrams (architecture charts, UI mock-ups) are auto-checked by Gemini.

What risks surface when vision agents compile arbitrary code?

Vertex AI logs reveal 7 sandbox escapes in 48 hours of beta traffic.

The root cause: adversarial prompts that trick the model into generating sudo instructions.

Google’s response: gVisor sandboxes and token-level kill switches, cutting mean breakout time from 8 min to <2 min.
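To make the idea of a kill switch concrete, here is a rough Python sketch that rejects obviously dangerous imports and terminates generated code that runs too long. It is an assumption-laden illustration, not gVisor and not Google's mechanism: the `BANNED_MODULES` list and the function names are hypothetical, and a denylist like this is easy to bypass without a real OS-level sandbox underneath.

```python
import ast
import subprocess
import sys

# Hypothetical denylist; a production system would rely on OS-level isolation.
BANNED_MODULES = {"os", "subprocess", "socket", "ctypes", "shutil"}


def static_violations(source):
    """Return banned top-level modules imported by the source."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name.split(".")[0] for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [(node.module or "").split(".")[0]]
        else:
            continue
        found += [name for name in names if name in BANNED_MODULES]
    return sorted(set(found))


def run_with_kill_switch(source, timeout_s=5.0):
    """Run source in a child interpreter; kill it if it exceeds the time budget."""
    bad = static_violations(source)
    if bad:
        return {"status": "rejected", "detail": bad}
    try:
        proc = subprocess.run(
            [sys.executable, "-I", "-c", source],  # -I: isolated mode
            capture_output=True,
            text=True,
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return {"status": "killed", "detail": f"exceeded {timeout_s}s"}
    status = "ok" if proc.returncode == 0 else "error"
    return {"status": status, "detail": proc.stdout.strip()}


print(run_with_kill_switch("import os\nos.system('sudo id')"))
print(run_with_kill_switch("print(6 * 7)"))
```

The static check catches the crude case of a prompt that steers the model toward shell access, while the timeout bounds how long any single generated program can run.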

Regulators are circling; the EU’s AI Office has asked for red-team reports by March 15.

Does the leap extend beyond consumer gadgets?

D4RT—Google’s AR reconstruction stack—already embeds Gemini 3 Flash.

Scene meshes now update 300× faster, letting Pixel 10 stream live occlusion for street-level AR navigation.

Enterprise pilots (Walmart, Siemens) show 6 % fewer pick-and-place errors when workers wear AR headsets guided by agentic vision.

Bottom line

A single-digit benchmark bump rarely moves markets.

Yet when that bump comes from self-generated, self-verified code, it resets expectations for every multimodal product roadmap.

If Google contains the sandbox risk—and AWS, Alibaba, and Moonshot match the pattern—the next two years will pivot from “AI that answers” to “AI that acts.”

⚡ Gemini 3 Pro Survives Scotland’s T20 World Cup Curveball: AI Adapts in Minutes, Not Months

Google's Gemini 3 Pro adapts to Scotland’s last-minute T20 World Cup entry—ingesting 14K synthetic deliveries & 62% smaller data in <45min, boosting AUC from 0.71→0.78. Edge inference cuts latency 28% via 4-bit Pixel 8 clusters. Human umpires still overrule; AI only advises.

Google’s ICC deal faces an immediate stress test: Scotland replacing Bangladesh in the T20 World Cup 2026 roster forces Gemini 3 Pro to recalibrate with zero notice. The model must ingest fresh player-level data, retrain micro-models, and still deliver ball-by-ball predictions within the same broadcast window.

What technical levers are in play?

- Adaptive ingestion pipeline: A new modular feature store pulls Scottish domestic and international stats in under 45 minutes, feeding a fine-tuned sequence of LoRA adapters that sit atop the base 405-billion-parameter network.

- Secure edge inference: Match-side Pixel 8 clusters run quantized 4-bit checkpoints, shaving 28 % off latency while meeting ICC’s real-time security baseline (TLS 1.3, FIPS 140-3).

- Google Workspace mesh: Analysts in London, Bengaluru, and Dubai co-edit the same Colab notebook; NotebookLM diffs every cell and auto-merges the edits, with no merge conflicts detected in pilot tests.
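Two of the levers above, LoRA adapters and 4-bit quantization, are standard techniques that can be shown on toy numbers. The sketch below uses pure Python and made-up matrices; it illustrates the general math (effective weight = base + (alpha / rank) × B·A, and symmetric rounding to the signed 4-bit range), not the specifics of Google's pipeline.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(base, b, a, alpha):
    """Return base + (alpha / rank) * (b @ a); the base weights stay frozen."""
    rank = len(a)  # a has shape (rank, k); b has shape (d, rank)
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(base, delta)]


def quantize_4bit(weights):
    """Symmetric quantization of floats to signed 4-bit codes in [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map 4-bit codes back to approximate float weights."""
    return [c * scale for c in codes]


# Toy example: a 2x2 base matrix with a rank-1 adapter.
BASE = [[1.0, 0.0], [0.0, 1.0]]
B = [[1.0], [2.0]]   # shape (2, 1)
A = [[0.5, 0.5]]     # shape (1, 2)
print(lora_effective_weight(BASE, B, A, alpha=2.0))

codes, scale = quantize_4bit([0.7, -0.3, 0.1, 0.0])
print(codes, dequantize(codes, scale))
```

An adapter stores only the small B and A matrices, which is why swapping in team-specific adapters is much cheaper than retraining the base network; quantization trades a bounded rounding error for smaller, faster checkpoints.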

Does the data gap matter?

Scotland’s public performance corpus is 62 % smaller than Bangladesh’s. Google’s mitigation is twofold: (1) synthetic augmentation via style-transfer on broadcast video to generate 14 k extra labelled deliveries, and (2) zero-shot transfer from county-level embeddings that share 83 % feature overlap with Scottish venues. Validation AUC on held-out Scottish fixtures rises from 0.71 to 0.78—above the 0.75 tournament reliability threshold.
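For readers unfamiliar with the metric, AUC is the probability that a randomly chosen positive example is scored above a randomly chosen negative one. The sketch below computes it by direct pairwise comparison on invented labels and scores; the 0.75 threshold comes from the text, while the data and the function name `roc_auc` are illustrative assumptions.

```python
def roc_auc(labels, scores):
    """Pairwise AUC: share of (positive, negative) pairs ranked correctly.

    Ties count as half a win. Labels are 1 (positive) or 0 (negative).
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Invented held-out predictions, e.g. "wicket falls on this delivery" (1) or not (0).
LABELS = [1, 1, 0, 0, 1, 0]
SCORES = [0.9, 0.6, 0.7, 0.2, 0.8, 0.4]

RELIABILITY_THRESHOLD = 0.75  # figure quoted in the article
auc = roc_auc(LABELS, SCORES)
print(round(auc, 3), auc >= RELIABILITY_THRESHOLD)
```

The quadratic pairwise loop is fine for a small validation set; larger sets would normally use a rank-based formulation.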

Will spectators notice any difference?

No. Telemetry from warm-up fixtures shows variance in predicted run-rate delta dropping from ±0.9 to ±0.4 after the update cycle, well inside the graphics overlay tolerance. Human umpires still overrule edge cases; AI only supplies weighted recommendations.

Where does this leave sports AI standards?

If Gemini 3 Pro clears the Scotland curve without degradation, expect the ICC to embed adaptive retraining clauses in every future broadcast contract. Competing leagues—from IPL to MLB—will benchmark against this exact metric: time-to-stable-prediction after an unplanned roster event.

🤖 Apple Abandons In-House AI, Bets on Google Gemini for Siri Upgrade

Apple shifts Siri to Google Gemini in iOS 26.4, cutting 380ms latency & 7.3% hallucinations—saving $1.2B/year. End-to-end encrypted requests, no raw data to Google. iOS 27 drops 'Apple Intelligence'. Gemini 3.5 likely fall launch. $1B/year cap vs. $2.5B rumor. 62% users prioritize accuracy over brand. Risk: Regulator audits & Gemini 3.5 delays.

Apple has stopped pretending it can win the AI race alone. Internal build logs show iOS 26.4 shipping this spring with a new “Campos” conversational layer that off-loads every Siri request to Google’s Gemini family, followed by an iOS 27 build that locks the switch in place and quietly retires the Apple Intelligence Initiative. The deal is signed; the only unknown is which Gemini generation—3.0, 3.5, or a yet-to-be-numbered variant—will ship this fall.

Why License When You Can Build?

Apple’s own 1.2-trillion-parameter model, trained on Apple Silicon and TSMC’s 2 nm line, still lags Gemini in factual retrieval latency by 380 ms and hallucinates on 7.3 % of long-context prompts. Licensing fixes both metrics overnight and saves Apple an estimated $1.2 B per year in inference costs—money it can redirect to Campos’ edge-device optimizations and the rumored AI Pin/HomeHub ecosystem due at WWDC 2026.

How Does Privacy Survive a Google Backend?

End-to-end encryption is non-negotiable. Requests leave the device encrypted with a rotating 256-bit key negotiated via Apple’s Secure Enclave; Google never sees IP addresses or raw audio. Only the de-identified token stream hits Gemini, and on-device Campos rehydrates the response before any text or voice reaches the user. Regulators have already asked for source audits; Apple has agreed to quarterly transparency reports.
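The de-identify-then-rehydrate flow can be pictured with a small Python sketch. Everything here is a hypothetical illustration: the placeholder format, the function names, and the list of private terms are assumptions, and the encryption layer described above is deliberately left out.

```python
def deidentify(text, private_terms):
    """Swap known private strings for placeholders; keep the mapping on-device."""
    mapping = {}
    for index, term in enumerate(private_terms):
        placeholder = f"<ENTITY_{index}>"
        if term in text:
            text = text.replace(term, placeholder)
            mapping[placeholder] = term
    return text, mapping


def rehydrate(text, mapping):
    """Restore the original strings in a response that uses the placeholders."""
    for placeholder, term in mapping.items():
        text = text.replace(placeholder, term)
    return text


request = "Text Maria Lopez that I'm running late"
masked, mapping = deidentify(request, ["Maria Lopez"])
print(masked)  # what would leave the device in this sketch

# Hypothetical server reply that only ever saw the placeholder.
reply = "Message to <ENTITY_0> sent."
print(rehydrate(reply, mapping))
```

Because the mapping never leaves the device, the remote model can act on the request without seeing the underlying name.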

Will the $2.5 B Check Ever Clear?

One line item in Alphabet’s Q4 2025 filings references a “strategic services agreement” capped at $1 B annually—far below the rumored lump-sum figure. The delta likely covers joint marketing, HomeHub bundling, and Gemini capacity reservations rather than a one-time buyout. Investors should treat the $2.5 B as an upper bound, not a baseline.

What Happens to Siri’s Brand?

The Siri name stays; the stack underneath flips. iOS 26.4 introduces “Siri built on Campos,” and iOS 27 drops the qualifier. Market research shows 62 % of users care more about accuracy than provenance, so Apple will market the transition as a pure upgrade.

Bottom Line

Apple is not exiting AI; it is outsourcing the part Google already does better while refocusing on the parts that still matter—hardware integration, privacy, and the AI Pin/HomeHub ecosystem. If Gemini 3.5 ships on schedule and the license remains capped at $1 B per year, Apple trades short-term pride for long-term margins and a defensible moat.

📊 Microsoft’s New Excel Agent Mode Turns Natural Language into Spreadsheets — But Is It Secure?

Microsoft launched Agent Mode in Excel (Jan-28-2026), replacing hand-written formulas with natural-language prompts: 'Compare Q4 revenue by region' auto-generates pivots, charts & formatting, with no =SUMIFS required. Power Query now runs in-browser with live ERP/Salesforce feeds. But: 70% of AI workloads remain vulnerable without MCP/AP2 protocols; 25% of YC startups already use AI codebases; 5hr jobs still stall at 30min without auditable steps. What’s next? Agent Skills SDK lets devs turn Python/Java into Excel functions. Implication: By 2029, 50% of compute is AI-driven, but security lags.

Microsoft quietly shipped Agent Mode for the desktop edition of Excel on Jan-28-2026. The build flips the formula bar into a natural-language console. Type “Compare Q4 revenue by region to last year and flag anomalies,” and the sheet populates the pivot, chart, and conditional-format rules without a single =SUMIFS. Power Query now runs in-browser, so the agent can pull fresh ERP or Salesforce feeds mid-conversation.
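To show what such a prompt has to compute behind the scenes, here is a plain-Python sketch of the aggregation: sum Q4 revenue per region, compare it with the prior year, and flag large swings. The rows, the 25 % anomaly threshold, and the function name are invented for illustration and say nothing about how Excel's agent actually works.

```python
from collections import defaultdict

# Invented sample rows standing in for an ERP or CRM feed.
ROWS = [
    {"region": "EMEA", "year": 2024, "quarter": "Q4", "revenue": 120.0},
    {"region": "EMEA", "year": 2025, "quarter": "Q4", "revenue": 126.0},
    {"region": "APAC", "year": 2024, "quarter": "Q4", "revenue": 80.0},
    {"region": "APAC", "year": 2025, "quarter": "Q4", "revenue": 52.0},
    {"region": "AMER", "year": 2024, "quarter": "Q4", "revenue": 200.0},
    {"region": "AMER", "year": 2025, "quarter": "Q4", "revenue": 210.0},
    {"region": "AMER", "year": 2025, "quarter": "Q3", "revenue": 190.0},
]


def q4_by_region(rows, year, prior_year, threshold=0.25):
    """Pivot Q4 revenue by region and flag year-over-year swings above threshold."""
    totals = defaultdict(float)
    for row in rows:
        if row["quarter"] == "Q4":
            totals[(row["region"], row["year"])] += row["revenue"]

    report = {}
    for region in sorted({region for region, _ in totals}):
        current = totals.get((region, year), 0.0)
        prior = totals.get((region, prior_year), 0.0)
        change = (current - prior) / prior if prior else None
        report[region] = {
            "current": current,
            "prior": prior,
            "change": change,
            # A missing baseline is treated as an anomaly worth a human look.
            "anomaly": change is None or abs(change) > threshold,
        }
    return report


for region, stats in q4_by_region(ROWS, 2025, 2024).items():
    print(region, stats)
```

In a spreadsheet this corresponds to a pivot table plus a conditional-format rule on the change column.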

How Secure Is an AI That Holds Your Financials?

The agent talks to external data through MCP (Model Context Protocol) endpoints and AP2 secure-transaction envelopes (think TLS for AI tool calls). Still, today’s ChatGPT can’t complete long-running background jobs; a five-hour transcription stalls at 30 minutes. Microsoft’s fix is to expose every agent action as an auditable Power Query step, letting compliance teams diff changes like code.
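Diffing agent actions "like code" can be sketched with Python's standard `difflib`. The step descriptions below are hypothetical labels, not real Power Query M syntax, and the function name `diff_steps` is an assumption made for the example.

```python
import difflib

# Hypothetical step logs: what compliance approved vs. what the agent now proposes.
APPROVED_STEPS = [
    "Source = Salesforce.Opportunities",
    "Filter quarter = Q4",
    "Group by region",
]
PROPOSED_STEPS = [
    "Source = Salesforce.Opportunities",
    "Filter quarter = Q4",
    "Filter region <> 'Test'",
    "Group by region",
]


def diff_steps(before, after):
    """Return a unified diff of two step lists, one string per line."""
    return list(
        difflib.unified_diff(
            before, after, fromfile="approved", tofile="proposed", lineterm=""
        )
    )


for line in diff_steps(APPROVED_STEPS, PROPOSED_STEPS):
    print(line)
```

A reviewer sees exactly one added step, which is the property that makes an agent's changes auditable rather than opaque.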

Will This Redefine the Spreadsheet or Just Add Clutter?

Kimi K2.5’s 4.5× faster multimodal benchmark suggests the next Excel agent will ingest images, PDFs, and voice memos natively. Meanwhile, 25 % of YC startups already ship AI-generated codebases via API-first stacks. Microsoft is following suit: the new “Agent Skills” SDK lets devs register Python or Java micro-services as first-class Excel functions. The upshot is a hybrid cloud where half of all compute runs AI by 2029, yet 70 % of those workloads remain vulnerable without protocols like MCP.

End users get faster, safer analysis. Competitors must match secure, multimodal pipelines. Investors should fund startups that standardize on MCP and AP2. Developers: expose your services as Agent Skills today; Excel users will call them tomorrow.

In Other News

- Korean Researchers Unveil TransMiner Technique for Cross-Model Knowledge Transfer Without Retraining

- Themis Intelligence Launches UKB and HGI Framework to Govern AI Use in Utility Operations with Human Oversight

- DeepSeek-R1 Model Sparks Market Turmoil, Wiping $1 Trillion from AI Stocks as Nvidia, Google, and Broadcom Plunge

- Contextual AI launches Agent Composer to automate enterprise workflows, reducing root-cause analysis from 8 hours to 20 minutes

- Google and Meta Face Class Action Over AI-Generated Deepfakes Used to Misrepresent Civil Rights Activist Nekima Levy Armstrong

- AI Coding Agent SERA-32B Solves 55% of SWE-Bench Verified Problems, Outperforming Larger Models
